Beyond the Hype Cycle: A Pragmatist’s Guide to Resisting Premature Abstraction and Choosing Simplicity as a Core Architectural Feature

1 Introduction: The Parable of the Two Architects

Imagine two talented software architects, Alex and Maria, each deeply skilled but with fundamentally different approaches. Both seek software excellence, but their journeys diverge sharply when confronting the inevitable complexity of software architecture.

1.1 The Pattern Purist: Alex’s Story

Alex loves patterns. He’s fluent in microservices, event sourcing, CQRS, and service meshes. Every new project is a playground of architectural purity—no coupling, fully distributed, and painstakingly future-proof.

Recently, Alex took charge of a straightforward departmental application. Instead of a simple CRUD approach, Alex implemented microservices complete with asynchronous event sourcing, CQRS read and write models, and an Istio-powered service mesh. The solution was textbook-perfect but turned out to be complex beyond necessity. As new developers joined the team, onboarding time skyrocketed, and simple changes became surprisingly expensive.

Does Alex’s scenario sound familiar?

1.2 The Pragmatic Simplifier: Maria’s Story

Maria takes the opposite path. She begins every project by asking a crucial question: “What problem are we actually solving today?”

Her latest assignment—a departmental application similar to Alex’s—was addressed differently. Maria chose a modular monolithic architecture, opting for clear boundaries, minimal dependencies, and modern yet simple tooling. When her team needed additional complexity, Maria incrementally evolved the architecture without disruption.

Maria’s application was delivered ahead of schedule, easily maintained, and straightforward for new developers.

Wouldn’t you prefer Maria’s outcome?

1.3 The Core Dilemma

The difference between Alex and Maria highlights a fundamental dilemma architects face: when should we leverage patterns and complex architectures, and when should simplicity reign supreme?

This article isn’t about condemning patterns—they’re incredibly valuable. Instead, it shines a light on the pitfalls of premature abstraction. Overusing design patterns can unintentionally complicate projects, resulting in technical debt, reduced maintainability, and inflated costs.

The goal here is to equip architects with the insights necessary to choose simplicity confidently and appropriately, rather than instinctively chasing the latest trends or best practices.

2 The Siren Song of Decoupling: Why We Worship at the Altar of Patterns

Before diving deeper, let’s unpack why patterns—particularly decoupling strategies—are attractive, even seductive. Understanding their allure helps us appreciate the balancing act required to avoid their misapplication.

2.1 The Promised Land: Benefits of Decoupling and Architectural Patterns

Decoupling and modern architectural patterns undeniably promise significant benefits:

-

Scalability: Decoupled components can scale independently. If a single service faces increased demand, it can scale without impacting the broader system.

-

Resilience: Decoupled architectures isolate faults. If one component fails, the rest remain unaffected. Your “blast radius” shrinks, minimizing downtime and disruptions.

-

Maintainability: Smaller, focused components mean teams understand and manage code more easily, keeping codebases clean and maintainable.

-

Team Autonomy: Jeff Bezos popularized the idea of the “two-pizza team.” Decoupled services naturally align with this vision, empowering small teams to own entire features.

-

Technology Diversity: Patterns empower architects to choose the right technology for each problem rather than a restrictive, one-size-fits-all approach.

This combination can seem irresistible, but have you considered the downsides that come with the complexity?

2.2 The Common Language: Patterns as a Shared Vocabulary

Design patterns offer a powerful advantage—they create a common language among developers and architects. Patterns from the Gang of Four (GoF) like Singleton, Strategy, or Observer, alongside modern cloud-native patterns such as Circuit Breakers, Service Meshes, and CQRS, simplify complex conversations.

When someone says, “Let’s use CQRS for this module,” everyone immediately understands the intent behind it—separating read and write concerns clearly.

But here’s the catch: a shared language doesn’t always equate to shared understanding of when a pattern is truly justified. Just because you can easily discuss CQRS doesn’t mean it’s the right pattern to use for every scenario.

2.3 Resume-Driven Development (RDD): The Uncomfortable Truth

Now let’s address the elephant in the room—Resume-Driven Development (RDD). It’s the tendency to choose trendy patterns or technologies not necessarily because the project demands them, but because architects and developers want to boost their personal marketability.

Consider a team that introduces Kubernetes for a small-scale internal app, not because containers solve a business need, but because Kubernetes looks impressive on LinkedIn. While career growth is understandable, this approach creates friction, unnecessary complexity, and hidden costs.

Is your architecture influenced by the desire for novelty over necessity?

3 The Complexity Tax: Unpacking the Hidden Overhead of “Perfect” Architectures

When crafting software systems, architects often chase the vision of a perfect architecture. But every decision carries a hidden cost—a complexity tax—paid not just in dollars, but in productivity, developer satisfaction, and maintainability. To make better decisions, architects must understand the true nature of complexity.

3.1 Distinguishing Complexity: Essential vs. Accidental

Fred Brooks famously introduced the concepts of essential and accidental complexity in software engineering. Essential complexity is inherent—it’s the unavoidable difficulty that arises from the business domain itself. For instance, handling financial compliance in banking software or real-time bidding in an advertising system is inherently complex. You can’t simplify these realities away.

Accidental complexity, however, is entirely self-inflicted. It emerges from technical choices that add layers of abstraction, unnecessary interactions, and complicated deployments that don’t directly address business needs. For example, choosing to build a highly distributed, event-sourced system for a straightforward departmental application introduces accidental complexity.

The architect’s goal is clear: Model essential complexity but ruthlessly eliminate accidental complexity.

Are you confident in your ability to differentiate between these two forms of complexity in your current project?

3.2 The Four Horsemen of Pattern Overhead

Complexity in architecture brings significant hidden overhead. Let’s explore the four main categories that architects must consider carefully before adopting complex patterns:

3.2.1 Cognitive Overhead

Every additional service, abstraction, and component adds cognitive overhead—the mental energy developers must expend to understand, debug, and enhance the system. Imagine onboarding a new team member to a monolithic app with clearly defined modules versus onboarding them to an architecture with 50 microservices, asynchronous messaging, and eventual consistency. The latter demands a far higher cognitive load, significantly delaying productivity.

Debugging distributed systems also amplifies cognitive overhead exponentially. Rather than a straightforward stack trace in one location, debugging might require tracing requests across logs from multiple services.

Ask yourself: How many mental models must your developers juggle every day? Are you trading developer productivity for architectural elegance?

3.2.2 Development & Tooling Overhead

Complex architectures typically lead to increased boilerplate code, sophisticated CI/CD pipelines, and the need for specialized tooling. For instance, adopting microservices often demands additional infrastructure such as:

- API Gateways (e.g., Kong, Azure API Management)

- Message Queues (e.g., Kafka, RabbitMQ)

- Service Discovery (e.g., Consul, Eureka)

- Container Orchestration (e.g., Kubernetes, Docker Swarm)

Each of these requires custom configurations, expertise, and ongoing maintenance. While such tools provide value in genuinely complex, distributed contexts, they become burdensome overhead if misapplied.

Is your current architecture weighed down by unnecessary tooling?

3.2.3 Operational Overhead

The third overhead arises from operations. Every new service must be monitored, secured, patched, deployed, and scaled independently. Teams often underestimate the cost of operational complexity until they find themselves drowning in alerts, runbooks, and patch cycles.

This operational complexity has driven the rise of Platform Engineering, a discipline entirely focused on abstracting complexity and creating self-service platforms for developers. While platform teams can ease operational burdens, their very existence often signals architectural overcomplexity.

Consider this: Does your architecture force you to hire a dedicated operations or platform team just to manage complexity introduced prematurely?

3.2.4 Performance Overhead

Finally, decoupled architectures carry inherent performance overhead due to network latency, serialization/deserialization, and the complexity of distributed transactions. While a local method call takes nanoseconds, network calls—even internal ones—can introduce tens to hundreds of milliseconds per interaction.

Imagine a simple order-processing scenario: a monolithic application may execute this instantly, while a distributed microservice architecture could require multiple service hops, significantly increasing latency.

Have you measured the true performance costs your architecture introduces? Are these costs justified by tangible business value?

4 The Architect’s Heuristic Toolkit: A Framework for Deciding “When”

Given these overheads, architects need practical heuristics—a toolkit—to decide when complex patterns genuinely add value versus when simplicity is the better choice. Let’s explore essential heuristics that empower architects to make balanced, informed decisions.

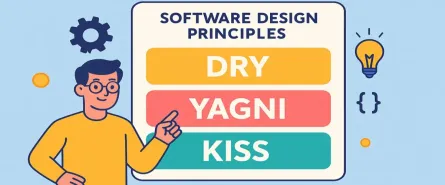

4.1 The YAGNI Litmus Test (You Ain’t Gonna Need It)

YAGNI is your most powerful heuristic. It simply asks: “Are we solving a real, present-day problem or a hypothetical future scenario?”

Architects often justify complexity by anticipating future requirements. But complexity should only enter the system when the need clearly emerges. By postponing architectural decisions until they’re genuinely needed, you minimize accidental complexity.

Always ask yourself: Is this architectural complexity necessary today, or are you speculatively solving tomorrow’s problem?

4.2 Evaluating the Business Domain

Your business domain’s structure is critical in deciding whether patterns like microservices or event-driven architectures are appropriate. Consider two primary aspects:

Bounded Contexts: If your domain’s bounded contexts naturally align into independent, autonomous areas, then patterns like microservices may provide clear value. But if contexts are highly interdependent and chatty, requiring transactional consistency, a simpler monolith or modular monolith could avoid accidental complexity.

Volatility: How frequently do specific parts of your system change independently? A volatile subsystem that evolves quickly benefits significantly from isolation. Conversely, stable, core domains rarely justify additional complexity and often perform best within simpler structures.

Do you clearly understand how your business contexts align with your architecture choices?

4.3 Team Dynamics and Cognitive Load

Your team’s size, skills, and organization should directly inform your architectural decisions:

Team Size & Skillset: A small, less experienced team managing a highly distributed architecture leads to inevitable burnout and operational nightmares. Align complexity with your team’s current capabilities, gradually evolving as experience grows.

The “Inverse Conway Maneuver”: Rather than designing an ideal architecture and reshaping your team structure around it, use your current organizational capabilities to drive architectural choices. Begin with simpler structures and evolve your architecture naturally as your teams mature.

Reflect honestly: Does your current architecture fit your team’s real-world skills, size, and dynamics?

4.4 The Performance Budget

Finally, clearly define your acceptable performance budget. Many internal or departmental applications benefit far more from a simple, performant monolithic application than a sophisticated, distributed system laden with latency.

Here’s an illustrative example:

In-process Call (minimal latency):

// Simple local method call

var result = CalculateInvoiceTotal(invoice);Distributed Call (network overhead):

// Distributed service call

var httpClient = new HttpClient();

var result = await httpClient.GetFromJsonAsync<InvoiceTotal>("https://invoice-service/api/calculate");The distributed call might take tens or hundreds of milliseconds, while the local call is nearly instantaneous.

Is the added latency and complexity worth it in your context? For most internal or departmental applications, the answer is often a resounding no.

5 Real-World Battlegrounds: Case Studies in Simplicity

Theory is important, but real architectural lessons are forged in the heat of actual projects. Let’s examine three telling case studies—each a scenario many architects have witnessed firsthand. In each, we’ll see the cost of unnecessary complexity and the clarity that emerges when teams refocus on simplicity.

5.1 Case Study 1: The Prematurely Decomposed Startup

The Scenario A promising new B2B SaaS company sets out to build a customer appointment management platform. The founders anticipate rapid growth and decide to architect for scale from day one.

The Over-Engineered Approach Instead of starting small, the team deploys a full suite of microservices. There are separate services for Users, Appointments, Notifications, and Payments. Each communicates through a message bus, such as Kafka or RabbitMQ. Every API call routes through an API Gateway for “proper” edge security and management.

At first, the design feels robust and future-ready. Each service has its own deployment pipeline. Codebases are neatly separated. Patterns like event-driven communication and eventual consistency are implemented, often with more ambition than experience.

The Pain Points This elegance doesn’t last. Quickly, cracks appear:

-

Testing is Complex: End-to-end testing becomes convoluted, requiring all services and infrastructure to be spun up locally or in CI. Simulating customer journeys means configuring multiple stubs and faking dozens of inter-service events.

-

Deployment is Risky: Even a minor change to, say, the Appointments module, now demands coordinated releases and version management across at least two or three services. Hotfixes, once a matter of a single code push, become nerve-wracking rollouts.

-

Debugging is Painful: A missing notification? The cause could lurk in the Appointments service, the message bus, or the Notifications service itself. Developers spend more time chasing logs across systems than solving actual problems.

-

Velocity Drops: What began as a sprint becomes a slog. New features are bottlenecked by integration complexity. The “startup speed” vanishes under architectural weight.

The Pragmatic Refactor: The Modular Monolith Eventually, reality demands a change. The team pauses and rethinks.

- The solution: Merge the codebases into a single application, but maintain strict module boundaries within the code (Users, Appointments, etc.).

- Communication shifts to in-process function calls—no message bus required.

- Local development and testing become fast and predictable.

- A single deployable artifact simplifies releases.

Importantly, the modular monolith keeps the door open for future decomposition. If and when growth truly arrives, modules can be extracted as services with minimal disruption. Meanwhile, the product launches faster, is easier to evolve, and is far less expensive to maintain.

Takeaway: Premature decomposition isn’t just wasteful—it can threaten the very speed and flexibility that startups need to survive. Always build for today’s scale, not tomorrow’s theoretical needs.

5.2 Case Study 2: The Service-Oriented Quagmire

The Scenario A well-established finance company needs a new reporting feature. This feature will aggregate customer and transaction data spread across three existing backend services.

The Over-Engineered Approach Rather than revisiting the data model or considering business realities, the team builds an “aggregator” microservice. This service makes synchronous API requests to the other three services, joins data in memory, and serves the aggregated result to reporting tools and dashboards.

The Pain Points

-

Brittle by Design: The reporting feature is now a house of cards. If any of the upstream services are slow or unavailable, the entire reporting endpoint fails. Users experience frequent outages and timeouts.

-

Unpredictable Performance: The performance of the reporting API is determined by the slowest dependency. This leads to inconsistent user experiences and spikes in support tickets.

-

Complex Debugging: Issues can originate from any upstream service. Tracking the source of incorrect or missing data becomes an exercise in frustration.

-

Operational Load: Monitoring and alerting become complex. Each service dependency requires its own health checks and dashboards.

The Pragmatic Refactor: The Simple Data Cache After repeated failures, the team reassesses:

- They switch to a scheduled batch job (nightly or hourly) that pulls necessary data from the source services or directly from their databases (where security policies permit).

- The job denormalizes and aggregates the data into a single, simple read model, such as a PostgreSQL reporting table or an Elasticsearch index.

- The reporting “service” now becomes a straightforward API layer, reading pre-aggregated data with low, predictable latency.

With this change:

- Reporting is resilient to upstream failures. If a source service is down during a batch run, the previous batch data is still available.

- Performance is orders of magnitude better.

- Debugging shifts to checking the batch pipeline, not three live services.

Takeaway: Not every use case requires real-time, distributed aggregation. For reporting, simplicity and reliability almost always trump perfect “real-time” data, especially when that real-time complexity introduces significant risk.

5.3 Case Study 3: The Serverless Synchronicity Trap

The Scenario An online retailer modernizes its order-processing system using a serverless architecture. The workflow for processing an order is straightforward: OrderPlaced → ValidateInventory → ProcessPayment → ScheduleShipping.

The Over-Engineered Approach A well-meaning team sets up each workflow step as its own Lambda (or Azure Function). These functions call each other synchronously, passing the payload along as they go.

The Pain Points

-

Distributed Monolith: Instead of modular, independently scalable services, the architecture is now a fragile, tightly-coupled chain. Any failure in one function causes the whole process to fail.

-

Retry Headaches: Timeouts or errors in functions (especially in payment processing) make retries difficult. There’s no central orchestrator managing workflow state or handling compensation logic.

-

Escalating Costs: As functions wait on each other (especially on slow I/O or external services), serverless billing continues to accrue. In peak periods, costs spike unexpectedly.

-

Observability Challenges: Tracing issues through the invocation chain becomes complex. Debugging why an order didn’t complete can involve sifting through logs from multiple serverless functions.

The Pragmatic Refactor: Orchestration with a State Machine Recognizing the pain, the team adopts a managed workflow orchestrator:

- On AWS, they move the process to Step Functions; on Azure, they use Durable Functions.

- The state machine now coordinates the steps, handling retries, timeouts, and error paths.

- Each function is refocused on a single responsibility, while the state machine manages transitions, state, and compensation logic.

This change:

- Improves resilience: Individual failures can be retried automatically.

- Reduces cost: Long-running or idle states no longer consume compute resources.

- Boosts observability: Centralized state makes tracing and debugging easier.

- Lifts business logic into configuration, reducing code complexity.

Takeaway: Serverless unlocks power and flexibility, but without proper orchestration, you risk creating a distributed monolith—trading one set of problems for another. Simplicity emerges when you use the right abstraction at the right layer.

6 The Middle Path: Architecting for Evolution, Not Revolution

Choosing simplicity doesn’t mean giving up on modern architectural aspirations. Rather, it means architecting your software with evolution in mind—designing intentionally to allow your system to grow, scale, and, if necessary, decompose naturally over time. Let’s examine practical strategies for following this “middle path.”

6.1 The Power of the Modular Monolith

Architects often view monoliths negatively, associating them with unmanageable legacy codebases. But modular monoliths are different—they combine the simplicity of a monolithic structure with the architectural clarity necessary to support future decomposition.

Establish Clear Module Boundaries

A modular monolith organizes code into clearly defined, self-contained modules. Each module encapsulates related functionality, sharing as little information with other modules as possible.

For example, consider a typical e-commerce system. You might have distinct modules for:

- Orders

- Inventory

- Payments

- User Accounts

Each module manages its own data and exposes functionality through well-defined APIs.

Public vs. Private APIs

The key is distinguishing between public APIs (interfaces modules expose to other modules) and private APIs (internal implementation details hidden from external modules). This boundary ensures modules can evolve independently, minimizing unintended coupling.

Here’s a quick C# example demonstrating this separation clearly using namespace structures:

// Public API exposed by the Orders module

namespace ModularEcommerce.Orders.Public;

public interface IOrderService

{

Task<Order> CreateOrderAsync(OrderRequest request);

}

// Private Implementation Details hidden within the module

namespace ModularEcommerce.Orders.Internal;

internal class OrderProcessor : IOrderService

{

public async Task<Order> CreateOrderAsync(OrderRequest request)

{

// Implementation details...

}

}This encapsulation ensures that future changes within modules won’t ripple through the whole system.

Avoiding Tangled Dependencies

Strict rules about module interactions prevent “spaghetti code.” Tools like .NET’s built-in access modifiers (internal vs. public), and specialized tools like NDepend, ArchUnit, or SonarQube can enforce architectural rules and detect unwanted dependencies.

The end result is a monolith that’s easy to reason about, test, and maintain—yet structured to allow future extraction into separate services if needed.

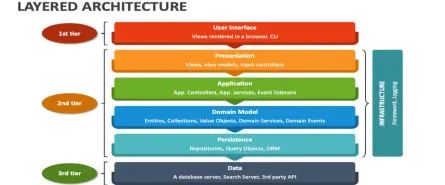

6.2 Vertical Slicing: Organizing by Feature, Not Technical Layer

Traditional software architectures often organize code horizontally—by technical layers such as UI, API, Data Access. This layering seems logical initially but quickly leads to code scattering and coupling across unrelated features.

Vertical slicing, instead, groups related logic by feature, not by technical layer. A vertical slice contains everything necessary to implement a single feature end-to-end, including UI components, APIs, domain logic, and database interaction.

Consider this example structure:

|-- Features

| |-- PlaceOrder

| | |-- PlaceOrderController.cs

| | |-- PlaceOrderCommandHandler.cs

| | |-- PlaceOrderValidator.cs

| | |-- OrderRepository.cs

| |-- CancelOrder

| | |-- CancelOrderController.cs

| | |-- CancelOrderHandler.csEach feature remains isolated. If you later need to scale, optimize, or even extract it into a separate service, you can do so without navigating unrelated code scattered across multiple directories.

Vertical slicing aligns your architecture closely with your business domain, ensuring that features remain cohesive and independently maintainable. It’s an architectural choice that naturally paves the way for future decomposition without premature complexity.

6.3 Simplicity as a Prerequisite for Decoupling

Ironically, to effectively decouple, you first need simplicity. Attempting to break apart a tangled “Big Ball of Mud” into services is notoriously painful and frequently doomed. Monoliths with unclear boundaries and scattered dependencies simply don’t decompose well.

To position your system for successful decoupling, you must first:

- Simplify your current codebase

- Clearly delineate boundaries between modules

- Eliminate shared mutable state and unnecessary complexity

Think of it as cleaning and organizing your garage before trying to move—it’s much easier to pack clearly labeled boxes than to untangle a mess at the last minute.

If your goal is eventual microservices or another distributed architecture, start by simplifying your monolith now. Only after clearly defining internal boundaries and ensuring low coupling will your future decoupling efforts succeed without chaos.

7 Conclusion: The Architect’s True North is Value, Not Complexity

In a world constantly chasing the next big architectural trend, it’s easy to lose sight of the core purpose of architecture: delivering value efficiently. Complexity, no matter how elegant or trendy, is only justified if it directly supports delivering genuine business value.

7.1 Reclaiming Simplicity as a “Feature”

Simplicity isn’t laziness—it’s strategic clarity. It accelerates your team’s productivity, improves maintainability, and directly reduces costs.

Consider simplicity as a key architectural feature with concrete value:

- Faster Delivery: Less complexity means features launch sooner.

- Reduced Risk: Simpler systems have fewer failure points and are easier to debug.

- Lower Cost: Maintenance and operational overhead decrease dramatically.

The art of architectural decision-making is knowing when to introduce complexity—and when to resist it fiercely. By explicitly prioritizing simplicity, you create sustainable, maintainable, and cost-effective systems.

7.2 A Final Heuristic: The 6-12 Month Test

Before choosing any architectural pattern or complexity, ask one final, clarifying question:

“What is the simplest architecture that will reliably meet our known requirements for the next 6-12 months?”

If your simplest approach still leaves room for future evolution, choose it confidently. Often, simplicity now means you’ll move faster and better understand the real needs as the system evolves—without overcommitting prematurely to complex patterns.

7.3 The Evolving Role of the Architect

Great architects aren’t encyclopedias of patterns—they’re pragmatists and strategists. Their expertise lies in weighing the true costs and benefits of complexity, continuously asking:

- Does this architecture genuinely serve our users?

- Will it sustainably deliver business value?

- Are we adding complexity intentionally and strategically, or are we chasing hype?

Tomorrow’s best architects will act as complexity economists, continuously balancing trade-offs. They’ll strategically choose simplicity first, introducing complexity deliberately and incrementally—only as the business context genuinely requires it.

In doing so, they become invaluable guides, steering projects towards long-term health and sustainability rather than short-term technical fads.