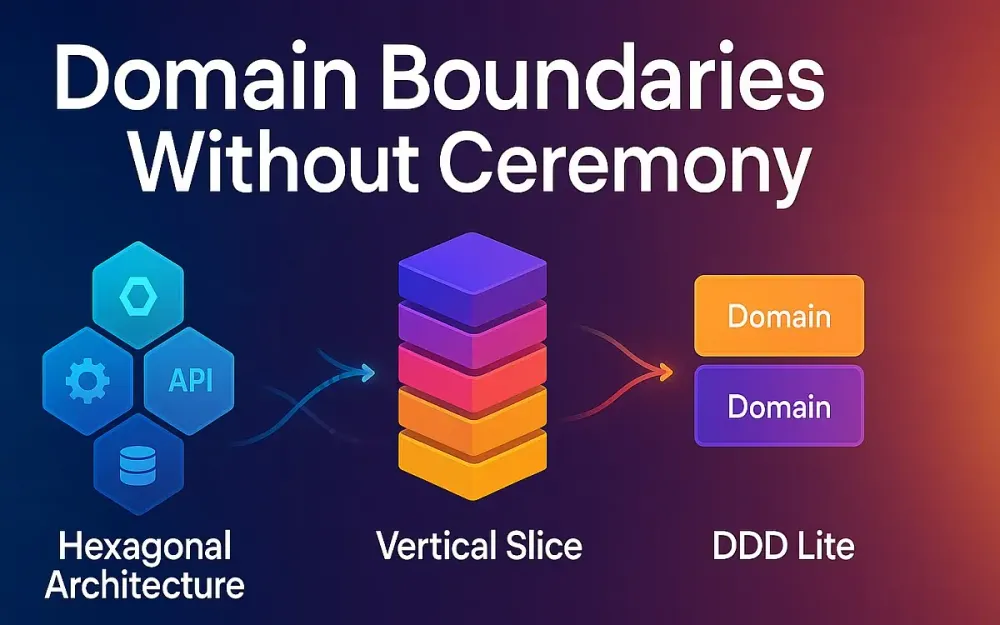

1 Domain Boundaries Without Ceremony

In modern software development, teams often drown in architecture meetings, heavy frameworks, rigid layers, and complex abstractions, only to discover that change is still slow, tests are brittle, and coupling is rampant. What if you could instead define clear domain boundaries with minimal ceremony, keep your core business logic uncluttered, preserve agility, and still allow your system to evolve over time? That’s the promise of Domain Boundaries Without Ceremony: leveraging patterns such as hexagonal architecture, vertical slice, and “DDD Lite” to keep your domain pure, feedback loops fast, and adapters swappable. In this article we’ll walk through why this matters and how we frame the architecture, and provide a practical guide for senior developers, tech leads, and solution architects.

1.1 Why “Domain Boundaries Without Ceremony” Matters Today

In many real-world projects, we see unintended complexity creeping in. A single ticket touches the “Business Logic” layer, then the “Service” layer, then the “Repository” layer, then the “Adapter” layer. Tests mock everything, take forever to run, and integration becomes brittle. The domain model, intended as the locus of business change, gets polluted by technical concerns. As a result:

- The business logic is entangled with infrastructure and framework code.

- Feedback loops slow: adding a new feature requires touching many layers and waiting for full regression.

- The system resists change: swapping the database, or the messaging system, becomes a massive refactor.

- Domain boundaries become fuzzy: one team inadvertently modifies another team’s domain model because there were no firm seams.

What if you could instead say: “Here is the boundary of a domain capability; within it we evolve freely; outside we hide technical change behind ports/adapters; our domain code is plain and testable.” That’s the essence of “without ceremony”. It isn’t no architecture; it’s minimal and pragmatic architecture.

1.2 The Promise: keep domain pure, feedback loops fast, adapters swappable

When you adopt domain boundaries without ceremony, you aim for three practical outcomes:

- Purity of domain code: Business rules, invariants, policies live in isolation from infrastructure. The domain model speaks the language of business, not of frameworks or ORMs.

- Fast feedback loops: Because domain logic is isolated and testable, you can write unit tests that execute in milliseconds; you can compose feature slices that deploy quickly. You reduce the “ticket → production” latency.

- Swappable adapters / minimal infrastructure coupling: Since you have clearly defined ports (interfaces) and adapters (implementations) at the periphery, you can change a database, message bus, or external API with minimal impact on the domain. The system stays evolvable (rather than perfect).

In short: Less boilerplate. More domain value. Adaptable system.

1.3 Tactical North Stars

1.3.1 Stable interfaces at the boundary, high churn at the edge

Design the ports (i.e., interfaces) to be stable—they define the boundary of what a domain module can do. Let the implementation behind the ports churn: adapters, technical details, framework upgrades. This keeps the “core” stable and allows the periphery to change rapidly.

1.3.2 Test the thing that changes least (domain) fastest

The domain layer is the part of the system that changes least often in terms of infrastructure but most often in business meaning. By providing fast, lightweight tests for invariants and business logic, you build confidence quickly. Then you layer in higher-level tests for slices and integration.

1.3.3 Optimize for evolvability over perfection

Evolvability means: you can change something without rewriting everything. Avoid the temptation of idealizing your architecture up front. Instead adopt a minimal viable architecture that gives you domain boundaries, testability, clear seams—and evolve it as the system grows. In other words: ship, then evolve.

1.4 A Quick Vocabulary

1.4.1 Layered architecture (n-tier) in 60 seconds

Traditional layered architecture (sometimes called n-tier) separates code horizontally: presentation layer, service/business logic layer, data access layer, infrastructure layer. Each layer depends on the one below; you pass through many layers to complete a feature. It’s familiar—but often introduces coupling across layers and slows changes.

1.4.2 Hexagonal (ports & adapters) in 60 seconds

The Hexagonal Architecture (also known as Ports & Adapters) places the domain at the centre, defines ports (interfaces) that the domain depends on—or that external systems depend on—and adapters which implement those ports (e.g., database, HTTP, message queue). The idea: the domain is agnostic of the technology. You can swap adapters without touching domain code.
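To make the swap concrete, here is a minimal sketch of one port with two interchangeable adapters. All names (`INotificationSender`, `OrderNotifier`, `ConsoleSender`, `RecordingSender`) are hypothetical and chosen only for illustration.

```csharp
using System;
using System.Collections.Generic;

// Composition happens at the edge: pick an adapter, hand it to the core.
var recorded = new RecordingSender();
new OrderNotifier(recorded).NotifyPlaced("A-100");
new OrderNotifier(new ConsoleSender()).NotifyPlaced("A-101");
Console.WriteLine(recorded.Messages.Count);

// Port: owned by the core and expressed in business terms.
public interface INotificationSender
{
    void Send(string message);
}

// Core logic: depends only on the port, never on a concrete technology.
public sealed class OrderNotifier
{
    private readonly INotificationSender _sender;
    public OrderNotifier(INotificationSender sender) => _sender = sender;

    public void NotifyPlaced(string orderNumber) =>
        _sender.Send($"Order {orderNumber} was placed.");
}

// Adapter 1: writes to the console.
public sealed class ConsoleSender : INotificationSender
{
    public void Send(string message) => Console.WriteLine(message);
}

// Adapter 2: records messages in memory, which is handy in tests.
public sealed class RecordingSender : INotificationSender
{
    public List<string> Messages { get; } = new();
    public void Send(string message) => Messages.Add(message);
}
```

Replacing `ConsoleSender` with an email or queue adapter would leave `OrderNotifier` untouched, which is the property the hexagonal style is after.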

1.4.3 Vertical Slice architecture in 60 seconds

The Vertical Slice Architecture reorganizes code by feature (slice) rather than by technical layer. Each slice owns all the code to implement a single use-case (UI/API endpoint, business logic, persistence) and is largely independent of other slices. This increases cohesion, reduces cross-feature coupling, and aligns with fast delivery.

1.4.4 “DDD Lite”: tactical DDD without heavyweight process

Domain‑Driven Design (DDD) often conjures heavy processes: domain events, full strategic modelling, ubiquitous language, bounded contexts, event storms. “DDD Lite” means adopting just enough of these – aggregates, invariants, bounded contexts – to gain business alignment and domain clarity, but without lots of ceremony, upfront modelling or heavyweight governance. This is pragmatic DDD for teams who value speed and adaptability.

2 Choosing the Shape: Layered vs. Hexagonal vs. Vertical Slice

Once you understand the vocabulary, you must choose the architectural shape that fits your context. Let’s compare the three styles, decide when each is appropriate, and explore real-world scenarios.

2.1 What each optimizes for

2.1.1 Layered: standardization & reuse across teams

A layered architecture emphasises shared technical infrastructure, consistent layering, and reuse of service/business logic. For large teams, the consistency may ease onboarding, but it risks slow change and higher coupling, especially when many layers must be navigated for a simple change.

2.1.2 Hexagonal: technology independence and testability

Hexagonal architecture is built for decoupling: your core business logic is free from infrastructure concerns. You optimise for testability, clean separation, and ability to swap frameworks or technologies (e.g., switch DB, messaging). If your system has many external integrations, high demands on testability or technology heterogeneity, hexagonal gives you strong leverage.

2.1.3 Vertical Slice: coupling by feature, speed of change

Vertical slice architecture is optimised for feature delivery, high team autonomy, minimal cross-feature coupling. It packs all code for a feature in one place, enabling faster iteration, clearer ownership, and better alignment to business value. It trades some reuse and generalisation for speed and independence.

2.2 Decision matrix (team size, product volatility, integration count, compliance needs)

Here is a simplified decision matrix to help evaluate:

| Factor | Low | Medium | High |
|---|---|---|---|
| Team size | 1-5 | 5-15 | 15+ |
| Product change / volatility | Rare | Moderate | Frequent |
| Integration & external dependencies | Few | Some | Many |
| Compliance / audit / regulation sensitivity | Low | Medium | High |
| Need for technology independence / swapping | Low | Medium | High |

- If team size is small, product changes are slow, and integrations are minimal → Layered may suffice.

- If integrations are many, technology may change, testability is crucial → lean Hexagonal.

- If feature delivery speed is high, team autonomy matters more than reuse → Vertical Slice.

Note: these are not exclusive; you may combine aspects of each.

2.3 Where each wins (real-world scenarios)

2.3.1 CRUD admin apps → layered/vertical slice hybrid

An internal admin app with mostly CRUD operations, few integrations, multiple small features: here a layered architecture brings predictable patterns. Over time you might evolve to vertical slices (features).

2.3.2 Integration-heavy core → hexagonal (ports for inbound/outbound)

When your system is full of inbound events, outbound integrations, variable persistence technologies, then hexagonal architecture shines: define clear inbound ports, outbound ports, adapters, and central domain model.

2.3.3 High-velocity product teams → vertical slices with CQRS

When you have multiple cross-functional squads that each own a feature end-to-end and need fast time-to-market with minimal dependencies between teams, adopt vertical slices (possibly with CQRS to separate reads from writes) so each slice becomes autonomous.

2.4 Modular monolith resurgence and why it matters before microservices

Before jumping to microservices, many teams now opt for a modular monolith: a single deployable made of modules with clear boundaries. Why? Because splitting prematurely adds overhead: distributed systems complexity, networking, versioning, operational burdens. By applying hexagonal or slice-based boundaries inside a monolith, you gain modularity, testability and clear seams, and can transition to microservices later if needed.

2.5 Anti-patterns to avoid (anemic domain, “layer-police”, cargo-cult hexagons)

- Anemic domain model: domain objects are mere data containers; logic lives in services. This undermines DDD Lite and domain purity.

- Layer-police: obsessively enforcing “service must call repository only” across layers even when it adds no value. This creates ceremony and slows teams.

- Cargo-cult hexagons: applying hexagonal architecture everywhere, even for trivial features, introducing unnecessary abstraction and complexity. For example, over-engineering adapters for a simple CRUD where a direct ORM access would suffice. As one developer remarked: “In practice … you start to get many abstractions around concepts that really shouldn’t be abstracted…”

By consciously choosing what to apply, and when, you maintain pragmatism.

3 Boundary-First Design: Pragmatic Domain + Ports/Adapters

Now we move from “why” and “shape” to “how”. How do you design with boundaries first, define your domain model pragmatically, and layer ports and adapters in a minimal way? Let’s walk through the practical steps.

3.1 Identify core capabilities and seams (domain language, policy vs. workflow)

Start by identifying your domain’s core capabilities. Ask: What business capabilities change the least? Which workflows or policies are central and must be stable? Which are likely to change often? You’re looking for seams—places where the system interacts with externalities (databases, external systems, user interfaces) or where business boundaries occur (billing, order fulfillment, customer management). Use the domain language: e.g., Order Processing, Customer Onboarding, Payment Settlement. These names become your modules. Then classify:

- Policy code (business rules, invariants, guiding decisions)

- Workflow code (orchestration between tasks)

Aim to isolate policy in the domain model. Keep workflow in application services (thin orchestrators) and adapters.
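The policy/workflow split can be sketched as follows. The free-shipping rule, its threshold, and the step names are hypothetical placeholders; the point is only that the policy is a pure decision while the workflow sequences steps.

```csharp
using System;
using System.Collections.Generic;

var log = new List<string>();
new CheckoutWorkflow(log.Add).Run(orderTotal: 120m);
Console.WriteLine(string.Join(" | ", log));

// Policy: a pure business decision with no I/O, so it is trivial to unit test.
public static class FreeShippingPolicy
{
    public const decimal Threshold = 100m; // hypothetical business rule

    public static bool Applies(decimal orderTotal) => orderTotal >= Threshold;
}

// Workflow: sequences steps and asks the policy for decisions.
public sealed class CheckoutWorkflow
{
    private readonly Action<string> _emit; // stand-in for real outbound ports

    public CheckoutWorkflow(Action<string> emit) => _emit = emit;

    public void Run(decimal orderTotal)
    {
        _emit("reserve-stock");
        _emit(FreeShippingPolicy.Applies(orderTotal) ? "ship-free" : "ship-paid");
        _emit("send-confirmation");
    }
}
```

When the business changes the threshold, only `FreeShippingPolicy` moves; when the process gains a step, only `CheckoutWorkflow` moves.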

3.2 Define ports

Ports are the interfaces at the boundary of your core. Inbound ports are the use cases that external actors call into; outbound ports are the abstractions that your domain or application layer depends on to reach external systems.

3.2.1 Inbound ports (application services/use cases)

An inbound port is typically a use-case interface: e.g., PlaceOrder, CancelSubscription, GenerateInvoice. It defines the contract for a feature, independent of transport (HTTP, CLI, message queue).

    // Example (C#) inbound port
    public interface IPlaceOrderUseCase
    {
        Task<Result<OrderConfirmation>> ExecuteAsync(PlaceOrderCommand command);
    }

3.2.2 Outbound ports (repositories, message publishers, external gateways)

Outbound ports define what the application or domain needs from external systems: e.g., IOrderRepository, IEmailSender, IBillingGateway. The domain depends only on abstractions.

    public interface IOrderRepository
    {
        Task SaveAsync(Order order);
        Task<Order?> GetByIdAsync(OrderId id);
    }

3.2.3 How many ports is “just enough”?

Don’t obsess over having hundreds of ports. Use the “just enough” rule: one port for each distinct external dependency you’d like to isolate/change independently. If you find yourself creating ports with very little logic, maybe collapse them. If you find many use-cases sharing a port, maybe split it. The goal is to reduce coupling, not to invent abstractions for their own sake.

3.3 Adapters: keep tech choices at the edge (ORMs, HTTP, queues)

Adapters implement the ports: for each outbound port, you’ll typically have e.g., EfCoreOrderRepository, RabbitMqEventPublisher, or HttpPaymentGateway. For inbound ports you’ll have transport adapters: PlaceOrderController, PlaceOrderMessageHandler. These live at the edge of your system. The domain and application logic should not depend on specific frameworks. If the transport changes from HTTP to gRPC, only the adapter layer should change.
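As an illustration of transport independence, the sketch below puts two inbound adapters in front of the same use case. The `IPlaceOrder` port, the `CliAdapter`, the `QueueAdapter`, and the `sku;quantity` message format are all assumptions made for this example, not part of any framework.

```csharp
using System;
using System.Threading.Tasks;

var useCase = new PlaceOrderUseCase();
Console.WriteLine(await new CliAdapter(useCase).RunAsync(new[] { "sku-1", "2" }));
Console.WriteLine(await new QueueAdapter(useCase).OnMessageAsync("sku-9;1"));

public record PlaceOrderCommand(string Sku, int Quantity);

// Inbound port: the contract every transport adapter talks to.
public interface IPlaceOrder
{
    Task<string> ExecuteAsync(PlaceOrderCommand command);
}

// Stand-in application service; a real one would call the domain and outbound ports.
public sealed class PlaceOrderUseCase : IPlaceOrder
{
    public Task<string> ExecuteAsync(PlaceOrderCommand command) =>
        Task.FromResult($"placed {command.Quantity} x {command.Sku}");
}

// Inbound adapter 1: translates command-line arguments into a command.
public sealed class CliAdapter
{
    private readonly IPlaceOrder _port;
    public CliAdapter(IPlaceOrder port) => _port = port;

    public Task<string> RunAsync(string[] arguments) =>
        _port.ExecuteAsync(new PlaceOrderCommand(arguments[0], int.Parse(arguments[1])));
}

// Inbound adapter 2: translates a "sku;quantity" text message into the same command.
public sealed class QueueAdapter
{
    private readonly IPlaceOrder _port;
    public QueueAdapter(IPlaceOrder port) => _port = port;

    public Task<string> OnMessageAsync(string body)
    {
        var parts = body.Split(';');
        return _port.ExecuteAsync(new PlaceOrderCommand(parts[0], int.Parse(parts[1])));
    }
}
```

Each adapter only parses its own transport format; adding a gRPC or HTTP adapter would follow the same shape without touching `PlaceOrderUseCase`.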

3.4 Aggregates and invariants (DDD Lite level of rigor)

Inside the domain model, define aggregates (clusters of domain objects treated as a consistency boundary) and ensure invariants are enforced. This is DDD Lite: not full strategic DDD with exhaustive modelling of every bounded context, but enough to capture business rules and maintain integrity. Example: the Order aggregate ensures that once payment is captured, you cannot add items; the Customer aggregate ensures you cannot deactivate a premium plan if open invoices exist. Keep these invariants in methods on the aggregate root, not in service classes.
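The "no items after payment" rule can be sketched as a guard inside the aggregate root. This is a simplified, hypothetical `Order` (string SKUs, tuple items) used only to show where the invariant lives.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

var order = new Order();
order.AddItem("sku-1", 2, 10m);
order.CapturePayment();
try
{
    order.AddItem("sku-2", 1, 5m);
}
catch (InvalidOperationException ex)
{
    Console.WriteLine(ex.Message);
}

public sealed class Order
{
    private readonly List<(string Sku, int Quantity, decimal UnitPrice)> _items = new();

    public bool PaymentCaptured { get; private set; }
    public decimal Total => _items.Sum(i => i.Quantity * i.UnitPrice);

    public void AddItem(string sku, int quantity, decimal unitPrice)
    {
        // Invariant 1: a paid order is closed for modification.
        if (PaymentCaptured)
            throw new InvalidOperationException("Cannot add items after payment is captured.");

        // Invariant 2: quantities must be positive.
        if (quantity <= 0)
            throw new ArgumentOutOfRangeException(nameof(quantity), "Quantity must be positive.");

        _items.Add((sku, quantity, unitPrice));
    }

    public void CapturePayment() => PaymentCaptured = true;
}
```

Because the guard sits on the aggregate, no application service can bypass it by accident.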

3.5 Mapping commands/queries to features (thin orchestrators, rich domain)

Often you’ll separate commands (actions that change state) from queries (that fetch state). Use thin orchestrators or application services (handlers) that implement inbound ports. These handlers invoke domain aggregates, repositories, and publish events. This keeps business logic inside domain, and workflow inside application layer. For queries you might use a separate mediator or query handler. This pattern aligns well with vertical slice architectures.
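A minimal sketch of the command/query split, without any mediator library, might look like this. The in-memory dictionary stands in for persistence, and the `PlaceOrder` and `GetOrderTotal` messages are hypothetical.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

var store = new Dictionary<Guid, decimal>(); // stand-in for a database
var place = new PlaceOrderHandler(store);
var getTotal = new GetOrderTotalHandler(store);

var id = place.Handle(new PlaceOrder(new[] { 10m, 5.5m }));
Console.WriteLine(getTotal.Handle(new GetOrderTotal(id)));

public record PlaceOrder(IReadOnlyList<decimal> LinePrices); // command: changes state
public record GetOrderTotal(Guid OrderId);                   // query: reads state

// Command handler: a thin orchestrator that writes and returns only an identifier.
public sealed class PlaceOrderHandler
{
    private readonly IDictionary<Guid, decimal> _store;
    public PlaceOrderHandler(IDictionary<Guid, decimal> store) => _store = store;

    public Guid Handle(PlaceOrder command)
    {
        var id = Guid.NewGuid();
        _store[id] = command.LinePrices.Sum(); // a real handler would delegate to the aggregate
        return id;
    }
}

// Query handler: read-only access, free to use a simpler read model.
public sealed class GetOrderTotalHandler
{
    private readonly IReadOnlyDictionary<Guid, decimal> _store;
    public GetOrderTotalHandler(IReadOnlyDictionary<Guid, decimal> store) => _store = store;

    public decimal? Handle(GetOrderTotal query) =>
        _store.TryGetValue(query.OrderId, out var total) ? total : (decimal?)null;
}
```

Giving the query handler a read-only view of the store makes the separation visible in the type system, even before any mediator is introduced.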

3.6 Vertical slice structure inside a modular monolith (feature folders + ports)

When you adopt a modular monolith, you might structure your code by features (slices). For example:

    /Features
      /Orders
        /PlaceOrder
          PlaceOrderCommand.cs
          PlaceOrderHandler.cs
          PlaceOrderController.cs
          OrderDto.cs
          OrderMapper.cs
        /CancelOrder
          …
      /Customers
        …
    /Domain
      /Orders
        Order.cs
        OrderItem.cs
      /Customers
        …
    /Infrastructure
      /Persistence
        EfCoreOrderRepository.cs
      /Messaging
        RabbitMqEventPublisher.cs

In this structure each slice lives under Features and owns inbound ports, handlers, DTOs. The Domain folder holds aggregates and business logic. The Infrastructure folder holds adapters. This keeps clear boundaries and aligns with both vertical slice and hexagonal principles.

3.7 Example tech stacks that fit nicely

3.7.1 .NET: minimal APIs + MediatR + EF Core adapters

In a .NET world you could use a Minimal API (ASP.NET Core), with MediatR for handling commands/queries, EF Core for persistence, and clear ports/adapters. Use feature folders for slices.

3.7.2 Java: Spring Boot app services + outbound ports + adapters

In a Java context, you might use Spring Boot, define interfaces for ports, use @Service and @Component for application logic, @Repository for infrastructure, and keep dependencies inverted. Use modules or packages for domain logic.

3.7.3 Node/NestJS: modules as slices, CQRS package for handlers

In Node.js with NestJS, you can arrange modules per feature, use @Module to group controllers, services, and define command/query handlers with @nestjs/cqrs. Define interfaces for outbound ports and provide adapter implementations via providers.

4 Walkthrough: From Request → Domain → Persistence (Minimal Boilerplate)

Now that we’ve covered the principles and architectural shapes, let’s walk a complete path through a single feature—from the external request to the domain core and down to persistence. We’ll follow a realistic business example: “Place Order”. The goal isn’t to build the most sophisticated system but to demonstrate how hexagonal and vertical slice concepts blend to keep the code minimal, testable, and evolvable.

4.1 Baseline feature: “Place Order”

Imagine an e-commerce domain. A customer places an order for one or more products, and the system must:

- Validate that all products exist and are available.

- Create a new Order aggregate containing items and totals.

- Persist the order.

- Publish an event so other subsystems (e.g., invoicing, shipping) can react.

Here we’ll design one feature slice implementing this workflow with minimal ceremony.

In our walkthrough we’ll assume:

- Language: C# (.NET 8) for clarity; however, equivalent structures apply in Java/Spring Boot or NestJS/TypeScript.

- Architecture: modular monolith using vertical slices backed by hexagonal boundaries.

- Tooling: MediatR for request/response dispatching, EF Core for persistence, RabbitMQ for messaging.

4.2 Contract first: request/response DTOs per slice

Each feature starts by defining its external contract—what the outside world sees. For HTTP endpoints, this means request and response DTOs. Keep DTOs local to the slice; don’t share them across features to avoid coupling.

    // Features/Orders/PlaceOrder/PlaceOrderRequest.cs
    public record PlaceOrderRequest(Guid CustomerId, List<OrderItemDto> Items);

    public record OrderItemDto(Guid ProductId, int Quantity);

    // Features/Orders/PlaceOrder/PlaceOrderResponse.cs
    public record PlaceOrderResponse(Guid OrderId, decimal TotalAmount, string Status);

These DTOs are purely transport-level structures. They don’t carry business rules, just data for the API layer.

In a controller or API endpoint, the request will be bound automatically, then passed to a handler via MediatR or a similar mediator.

4.3 Inbound port (use case) and handler (e.g., MediatR/Nest CQRS)

Next, define the inbound port—the use case interface that represents the application’s command. Then implement it as a handler that orchestrates domain calls.

    // Features/Orders/PlaceOrder/IPlaceOrderUseCase.cs
    public interface IPlaceOrderUseCase
    {
        Task<PlaceOrderResponse> HandleAsync(PlaceOrderRequest request);
    }

    // Features/Orders/PlaceOrder/PlaceOrderHandler.cs
    public class PlaceOrderHandler : IPlaceOrderUseCase
    {
        private readonly IOrderRepository _orderRepo;
        private readonly IProductCatalog _productCatalog;
        private readonly IEventPublisher _publisher;

        public PlaceOrderHandler(IOrderRepository orderRepo, IProductCatalog productCatalog, IEventPublisher publisher)
        {
            _orderRepo = orderRepo;
            _productCatalog = productCatalog;
            _publisher = publisher;
        }

        public async Task<PlaceOrderResponse> HandleAsync(PlaceOrderRequest request)
        {
            // 1. Load the referenced products through an outbound port.
            var products = await _productCatalog.FetchAsync(request.Items.Select(i => i.ProductId));

            // 2. Let the aggregate enforce the business rules.
            var order = Order.Create(request.CustomerId, products, request.Items);

            // 3. Persist, then announce the result to other subsystems.
            await _orderRepo.SaveAsync(order);
            await _publisher.PublishAsync(new OrderPlacedEvent(order.Id, order.TotalAmount));

            return new PlaceOrderResponse(order.Id, order.TotalAmount, order.Status.ToString());
        }
    }

Notice the separation: PlaceOrderHandler orchestrates the workflow but delegates rules to the domain (Order.Create). It interacts with outbound ports (IOrderRepository, IProductCatalog, IEventPublisher) but knows nothing about database or messaging specifics.

In NestJS, the equivalent would be a @CommandHandler(PlaceOrderCommand) class invoking injected services—same boundary principles, different syntax.

4.4 Domain model: aggregate, invariants, policies (with quick tests)

Now the heart: the domain aggregate. The Order entity enforces business invariants—such as ensuring quantities are positive, products are valid, and totals consistent. The aggregate root owns consistency for its children.

    // Domain/Orders/Order.cs
    using System;
    using System.Collections.Generic;
    using System.Linq;

    public class Order
    {
        private readonly List<OrderItem> _items = new();

        private Order(Guid customerId)
        {
            Id = Guid.NewGuid();
            CustomerId = customerId;
            Status = OrderStatus.Pending;
        }

        public Guid Id { get; }
        public Guid CustomerId { get; }
        public IReadOnlyCollection<OrderItem> Items => _items.AsReadOnly();
        public decimal TotalAmount => _items.Sum(i => i.Subtotal);
        public OrderStatus Status { get; private set; }

        // Note: accepting the slice's OrderItemDto keeps the example short; a stricter design
        // would pass a domain-level type so the domain never references transport DTOs.
        public static Order Create(Guid customerId, IEnumerable<Product> products, IEnumerable<OrderItemDto> itemDtos)
        {
            if (!itemDtos.Any()) throw new DomainException("Order must contain at least one item.");

            var order = new Order(customerId);
            foreach (var dto in itemDtos)
            {
                // Invariant: quantities must be positive.
                if (dto.Quantity <= 0)
                    throw new DomainException($"Quantity for product {dto.ProductId} must be positive.");

                // Invariant: every ordered product must exist in the catalog.
                var product = products.SingleOrDefault(p => p.Id == dto.ProductId)
                    ?? throw new DomainException($"Product {dto.ProductId} not found.");

                order._items.Add(new OrderItem(product.Id, dto.Quantity, product.Price));
            }
            return order;
        }

        public void MarkAsPaid() => Status = OrderStatus.Paid;
    }

A quick test ensures invariants are enforced without hitting infrastructure:

    [Fact]
    public void Create_ShouldThrow_WhenNoItems()
    {
        Assert.Throws<DomainException>(() =>
            Order.Create(Guid.NewGuid(), new List<Product>(), new List<OrderItemDto>()));
    }

These tests run in milliseconds and validate domain rules. You can scale such unit tests to hundreds with minimal cost, which makes them your fastest feedback loop.

4.5 Outbound ports: repository + event publisher

Outbound ports abstract away technical dependencies. Define small, focused interfaces:

    public interface IOrderRepository
    {
        Task SaveAsync(Order order);
        Task<Order?> GetByIdAsync(Guid id);
    }

    public interface IEventPublisher
    {
        Task PublishAsync<T>(T @event) where T : class;
    }

If later you move from EF Core to DynamoDB, or from RabbitMQ to Kafka, only adapters change, not the domain or application logic. Each port represents a stable contract for infrastructure to fulfill.
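One practical payoff of small ports is that in-memory adapters become trivial to write for tests. The sketch below redeclares the two ports so it stands alone; the `Order` and `OrderPlaced` records are simplified stand-ins, and `InMemoryOrderRepository` and `RecordingEventPublisher` are hypothetical names.

```csharp
#nullable enable
using System;
using System.Collections.Generic;
using System.Threading.Tasks;

var repo = new InMemoryOrderRepository();
var publisher = new RecordingEventPublisher();
var order = new Order(Guid.NewGuid());

await repo.SaveAsync(order);
await publisher.PublishAsync(new OrderPlaced(order.Id));
Console.WriteLine((await repo.GetByIdAsync(order.Id)) is not null);
Console.WriteLine(publisher.Published.Count);

public record Order(Guid Id);            // simplified stand-in for the aggregate
public record OrderPlaced(Guid OrderId); // simplified stand-in for the event

public interface IOrderRepository
{
    Task SaveAsync(Order order);
    Task<Order?> GetByIdAsync(Guid id);
}

public interface IEventPublisher
{
    Task PublishAsync<T>(T @event) where T : class;
}

// In-memory adapter: same contract as the database adapter, no infrastructure needed.
public sealed class InMemoryOrderRepository : IOrderRepository
{
    private readonly Dictionary<Guid, Order> _orders = new();

    public Task SaveAsync(Order order)
    {
        _orders[order.Id] = order;
        return Task.CompletedTask;
    }

    public Task<Order?> GetByIdAsync(Guid id) =>
        Task.FromResult<Order?>(_orders.TryGetValue(id, out var found) ? found : null);
}

// Recording adapter: captures published events so tests can assert on them.
public sealed class RecordingEventPublisher : IEventPublisher
{
    public List<object> Published { get; } = new();

    public Task PublishAsync<T>(T @event) where T : class
    {
        Published.Add(@event);
        return Task.CompletedTask;
    }
}
```

A handler wired to these two adapters can be exercised end to end in a unit test, with the real EF Core and RabbitMQ adapters covered separately by a smaller number of integration tests.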

4.6 Adapters

Adapters implement the outbound ports and live at the system’s periphery. They depend on specific frameworks and handle mapping from domain objects to persistence or transport formats.

4.6.1 Persistence adapter (JPA/EF Core/TypeORM)

The persistence adapter bridges domain entities to the data store.

    // Infrastructure/Persistence/EfCoreOrderRepository.cs
    using Microsoft.EntityFrameworkCore;

    public class EfCoreOrderRepository : IOrderRepository
    {
        private readonly AppDbContext _db;

        public EfCoreOrderRepository(AppDbContext db) => _db = db;

        public async Task SaveAsync(Order order)
        {
            // Translate the aggregate into its persistence model before storing it.
            var entity = OrderEntityMapper.FromDomain(order);
            _db.Orders.Add(entity);
            await _db.SaveChangesAsync();
        }

        public async Task<Order?> GetByIdAsync(Guid id)
        {
            var entity = await _db.Orders.Include(o => o.Items)
                .FirstOrDefaultAsync(o => o.Id == id);
            return entity?.ToDomain();
        }
    }

Mapping functions translate between domain and database models:

    public static class OrderEntityMapper
    {
        // Domain model -> persistence model.
        public static OrderEntity FromDomain(Order order) => new()
        {
            Id = order.Id,
            CustomerId = order.CustomerId,
            Status = order.Status.ToString(),
            Items = order.Items.Select(i => new OrderItemEntity
            {
                ProductId = i.ProductId,
                Quantity = i.Quantity,
                Price = i.Price
            }).ToList()
        };

        // Persistence model -> domain model.
        // Order.Rehydrate is assumed here (it is not part of the Order listing in 4.4); a complete
        // version would also restore Id, Status, and the stored item prices.
        public static Order ToDomain(this OrderEntity entity)
            => Order.Rehydrate(entity.CustomerId, entity.Items.Select(
                i => new OrderItemDto(i.ProductId, i.Quantity)));
    }

In Java, JPA repositories or Spring Data equivalents play the same role; in Node/NestJS, you’d use TypeORM with entity mapping classes.

4.6.2 Messaging adapter (RabbitMQ/Kafka)

A simple RabbitMQ adapter implementing IEventPublisher:

    // Targets the synchronous RabbitMQ.Client 6.x API (CreateModel, BasicPublish).
    using System.Text.Json;
    using RabbitMQ.Client;

    public class RabbitMqEventPublisher : IEventPublisher
    {
        private readonly IConnection _connection;

        public RabbitMqEventPublisher(IConnection connection) => _connection = connection;

        public Task PublishAsync<T>(T @event) where T : class
        {
            using var channel = _connection.CreateModel();
            var body = JsonSerializer.SerializeToUtf8Bytes(@event);
            channel.BasicPublish(exchange: "order.events", routingKey: typeof(T).Name, basicProperties: null, body: body);

            // The publish call is synchronous, so return a completed task rather than awaiting one.
            return Task.CompletedTask;
        }
    }

A Kafka equivalent would use a producer client, or in Node, a KafkaJS producer: same port, different adapter.

4.6.3 External API adapter (HTTP client)

Suppose the application validates discounts via an external Pricing API:

    using System.Net.Http.Json;

    public interface IPricingGateway
    {
        Task<Discount?> GetDiscountAsync(Guid productId, Guid customerId);
    }

    public class HttpPricingGateway : IPricingGateway
    {
        private readonly HttpClient _client;

        public HttpPricingGateway(HttpClient client) => _client = client;

        // GetFromJsonAsync throws HttpRequestException on non-success status codes;
        // the result is null only when the response body is a JSON null.
        public Task<Discount?> GetDiscountAsync(Guid productId, Guid customerId) =>
            _client.GetFromJsonAsync<Discount>($"api/discounts/{productId}?customer={customerId}");
    }

This adapter isolates all HTTP specifics. If you later migrate to gRPC, you replace only this class.

4.7 Transaction & consistency options (single DB txn, outbox)

When saving the order and publishing events, you need consistency. In a simple monolith, you can write the order and an outbox record of the event in a single database transaction, then publish the event afterwards from the outbox (the outbox pattern).

    // Example: transactional outbox within SaveAsync
    // (orderEntity and orderPlaced are built earlier in the method)
    await using var tx = await _db.Database.BeginTransactionAsync();

    _db.Orders.Add(orderEntity);
    _db.Outbox.Add(new OutboxMessage
    {
        Type = nameof(OrderPlacedEvent),
        Payload = JsonSerializer.Serialize(orderPlaced)
    });

    // Both rows are committed together or not at all.
    await _db.SaveChangesAsync();
    await tx.CommitAsync();

A background worker reads OutboxMessage entries and publishes them via IEventPublisher. This gives at-least-once delivery: events are not lost on failure, but a consumer may occasionally see the same event twice and should handle it idempotently.

In distributed systems, the same pattern generalizes with change data capture (e.g., Debezium streaming the outbox table) or a transactional messaging platform (e.g., Kafka transactions).
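The background worker's core loop can be sketched as below. The in-memory list stands in for the outbox table and the delegate stands in for `IEventPublisher`; `OutboxDispatcher` and `ProcessedAt` are hypothetical names for this illustration.

```csharp
#nullable enable
using System;
using System.Collections.Generic;
using System.Linq;
using System.Threading.Tasks;

var outbox = new List<OutboxMessage>
{
    new OutboxMessage(Guid.NewGuid(), "OrderPlacedEvent", "{\"orderId\":1}"),
    new OutboxMessage(Guid.NewGuid(), "OrderPlacedEvent", "{\"orderId\":2}")
};
var sent = new List<string>();
var dispatcher = new OutboxDispatcher(outbox, (type, payload) =>
{
    sent.Add(payload);
    return Task.CompletedTask;
});

Console.WriteLine(await dispatcher.DispatchPendingAsync());
Console.WriteLine(outbox.All(m => m.ProcessedAt is not null));

public sealed class OutboxMessage
{
    public OutboxMessage(Guid id, string type, string payload)
    {
        Id = id;
        Type = type;
        Payload = payload;
    }

    public Guid Id { get; }
    public string Type { get; }
    public string Payload { get; }
    public DateTimeOffset? ProcessedAt { get; set; }
}

public sealed class OutboxDispatcher
{
    private readonly List<OutboxMessage> _outbox;         // stand-in for the outbox table
    private readonly Func<string, string, Task> _publish; // stand-in for IEventPublisher

    public OutboxDispatcher(List<OutboxMessage> outbox, Func<string, string, Task> publish)
    {
        _outbox = outbox;
        _publish = publish;
    }

    // Publishes every unprocessed message and returns how many were sent.
    public async Task<int> DispatchPendingAsync()
    {
        var count = 0;
        foreach (var message in _outbox.Where(m => m.ProcessedAt is null).ToList())
        {
            // Publish first, mark second: a crash in between causes a re-send, never a loss.
            await _publish(message.Type, message.Payload);
            message.ProcessedAt = DateTimeOffset.UtcNow;
            count++;
        }
        return count;
    }
}
```

The publish-then-mark ordering is what produces at-least-once semantics; a production worker would also need polling, batching, and retry handling, which are omitted here.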

4.8 Example repo layout options

The physical layout affects discoverability, ownership, and clarity. Two popular patterns dominate.

4.8.1 Pure hexagonal folders (domain/app/adapters)

    /src
      /Domain
        /Orders
          Order.cs
          OrderItem.cs
      /Application
        /Orders
          PlaceOrderHandler.cs
          IOrderRepository.cs
      /Adapters
        /Persistence
          EfCoreOrderRepository.cs
        /Messaging
          RabbitMqEventPublisher.cs
        /Web
          PlaceOrderController.cs

This is the classic hexagonal layout, with clear conceptual layers. It emphasizes boundaries and testing. Ideal for teams that value uniformity and need to swap technologies frequently.

4.8.2 Vertical slice folders (features/orders/**) with embedded ports

    /src
      /Features
        /Orders
          /PlaceOrder
            PlaceOrderRequest.cs
            PlaceOrderResponse.cs
            PlaceOrderHandler.cs
            IOrderRepository.cs
            Order.cs
          /CancelOrder
            ...
      /Infrastructure
        /Persistence
          EfCoreOrderRepository.cs
        /Messaging
          RabbitMqEventPublisher.cs

Each feature holds its ports and domain code; infrastructure stays centralized. It favors autonomy and fast feature delivery. Teams working on separate features can move independently, reducing merge conflicts.

4.9 OSS templates to accelerate

4.9.1 Ardalis Clean Architecture (.NET) as a hexagonal starting point

The Ardalis Clean Architecture template is widely used in the .NET ecosystem. It provides ready-made folder structure, dependency inversion, and test projects aligned with hexagonal principles. Teams can clone and start implementing features while retaining ports/adapters clarity. Benefits include preconfigured dependency injection, MediatR handlers, and clear separation between core, infrastructure, and web. Many organizations adapt it as their internal default for domain-centric projects.

4.9.2 NestJS vertical slice templates

In the Node/TypeScript world, several community templates extend NestJS CQRS to vertical slices: each module encapsulates commands, queries, DTOs, and providers. Developers can scaffold a new slice with one CLI command, keeping dependencies localized. These templates combine Nest’s DI and decorators with the simplicity of feature-based organization—a perfect fit for teams shipping many small features.

5 Modules and Dependency Rules (Keep the Codebase Honest)

With boundaries defined, the next challenge is to keep them honest as the codebase grows. Over months of development, shortcuts, cross-slice imports, or leaking abstractions can erode architecture. The solution: treat boundaries as first-class citizens—enforced by convention and automation.

5.1 Defining modules (team-scale granularity, business ownership)

A module is more than a namespace—it represents a unit of business ownership. Align modules with bounded contexts or major features (Orders, Billing, Customers). Each module should:

- Own its domain model and invariants.

- Define inbound and outbound ports.

- Not reach into another module’s internals—communication via events or public interfaces only.

- Be small enough that one team can maintain it but large enough to encapsulate cohesive functionality.

This granularity allows independent evolution and parallel work streams while retaining the benefits of a single deployable.

5.2 Allowed directions (domain → nothing, app → domain, adapters → ports only)

Architectural dependency rules are simple but powerful:

- Domain → nothing: domain entities have no outward dependencies—not even to infrastructure or frameworks.

- Application → domain: use cases depend on domain models and ports, but not on adapter implementations.

- Adapters → ports only: adapters implement ports; they don’t depend on each other or application details.

This keeps dependency flow inward, ensuring outer layers can change without disturbing the core.

You can visualize the direction as:

    [Adapters] → [Application] → [Domain]

Reversing the arrow means trouble: if domain code imports an EF Core class, you’ve lost isolation.

5.3 Enforcing boundaries with code

Conventions aren’t enough; people make mistakes. Modern ecosystems offer automated architectural tests that fail builds when rules are violated.

5.3.1 Java: ArchUnit for package/layer/slice rules with examples

ArchUnit allows you to codify architecture in executable tests:

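    import com.tngtech.archunit.junit.AnalyzeClasses;
    import com.tngtech.archunit.junit.ArchTest;
    import com.tngtech.archunit.lang.ArchRule;

    import static com.tngtech.archunit.lang.syntax.ArchRuleDefinition.classes;
    import static com.tngtech.archunit.lang.syntax.ArchRuleDefinition.noClasses;

    @AnalyzeClasses(packages = "com.example.shop")
    public class ArchitectureTest {

        // The domain must not reach outward into infrastructure.
        @ArchTest
        static final ArchRule domainShouldNotDependOnInfra =
            noClasses().that().resideInAPackage("..domain..")
                .should().dependOnClassesThat().resideInAnyPackage("..infrastructure..");

        // Adapters may use the core, their own package, and the JDK.
        // Add the framework packages you rely on (e.g., "org.springframework..") to this list.
        @ArchTest
        static final ArchRule adaptersDependOnlyOnPorts =
            classes().that().resideInAPackage("..infrastructure..")
                .should().onlyDependOnClassesThat()
                .resideInAnyPackage("..domain..", "..application..", "..infrastructure..", "java..");
    }

These rules run as JUnit tests. Violations (e.g., domain referencing JpaRepository) fail CI builds, providing immediate feedback. Teams can extend rules per slice or package.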

5.3.2 .NET: NetArchTest / ArchUnitNET to codify rules

In the .NET ecosystem, NetArchTest or ArchUnitNET provide similar functionality.

    using NetArchTest.Rules;
    using NUnit.Framework;

    [Test]
    public void Domain_ShouldNotReference_Infrastructure()
    {
        // DomainAssembly is the assembly that contains your domain types,
        // e.g. typeof(Order).Assembly.
        var result = Types.InAssembly(DomainAssembly)
            .ShouldNot()
            .HaveDependencyOn("MyApp.Infrastructure")
            .GetResult();

        Assert.That(result.IsSuccessful, Is.True);
    }

These tests are simple to maintain and run in build pipelines. Violations surface early, preventing slow architectural drift.

5.3.3 JS/TS: dependency-cruiser to ban cross-slice imports, visualize graphs

For JavaScript or TypeScript, dependency-cruiser analyzes import graphs and can enforce module boundaries via configuration:

    {
      "forbidden": [
        {
          "name": "no-cross-slice-imports",
          "from": { "path": "^src/features/([^/]+)/" },
          "to": {
            "path": "^src/features/",
            "pathNot": "^src/features/$1/"
          },
          "severity": "error"
        }
      ]
    }

Here $1 refers back to the slice name captured in the from path, so the rule only flags imports that land in a different feature folder. Running depcruise --config .dependency-cruiser.json src fails the build if a feature imports another’s internals. It can also output a dependency graph for visualization, which is great for spotting hidden coupling.

5.4 Pull requests + pipelines: fail fast on architectural drift

Automated tests are only effective when enforced consistently. Integrate architectural tests into CI pipelines, and make them part of pull request gating. For instance:

- Run ArchUnit or NetArchTest checks as a separate build stage.

- Reject merges when boundaries are violated.

- Generate dependency reports periodically to monitor erosion trends.

Teams can also add lightweight Git hooks that scan for banned imports or namespace usage before commit. The earlier the feedback, the lower the friction.

Culturally, treat boundary violations not as personal failures but as design signals—maybe a refactor or new port is needed. The point is to maintain clarity, not punish.

5.5 Versioned boundaries: how to evolve contracts and ports safely

As systems evolve, ports and domain contracts change. Without discipline, version drift between modules or services can cause runtime failures. The remedy: versioned boundaries.

Practical strategies:

- Interface versioning: use IOrderRepositoryV2 or extension methods until consumers migrate, then deprecate older versions.

- Semantic versioning of modules: maintain version tags (e.g., orders@1.3.0) even in a monorepo; pipelines ensure consumers use compatible versions.

- Expand/contract approach: when changing DTOs or events, first expand by adding new fields (backward compatible), deploy producers/consumers that handle both, then contract old fields once unused.

- Contract tests: for cross-module or service interaction, use consumer-driven contract tools like Pact to ensure compatibility before release.

A stable boundary isn’t one that never changes—it’s one that changes predictably and safely.
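The interface-versioning strategy above can be sketched roughly as follows. This is an illustration under assumptions, not the article's codebase: Order is reduced to a stub record, and the ExistsAsync method and the InMemoryOrderRepositoryV2 class are hypothetical names chosen for the example.

```csharp
using System;
using System.Collections.Generic;
using System.Threading.Tasks;

// Stub aggregate for the sketch; the real Order carries behavior and invariants.
public sealed record Order(Guid Id, decimal TotalAmount);

// Original port: existing consumers keep compiling against it.
public interface IOrderRepository
{
    Task SaveAsync(Order order);
    Task<Order?> GetByIdAsync(Guid id);
}

// Expanded port (hypothetical): V2 inherits V1, so one adapter satisfies both.
public interface IOrderRepositoryV2 : IOrderRepository
{
    Task<bool> ExistsAsync(Guid id);
}

// A single adapter serves old and new consumers during the migration window.
public sealed class InMemoryOrderRepositoryV2 : IOrderRepositoryV2
{
    private readonly Dictionary<Guid, Order> _store = new();

    public Task SaveAsync(Order order)
    {
        _store[order.Id] = order;
        return Task.CompletedTask;
    }

    public Task<Order?> GetByIdAsync(Guid id) =>
        Task.FromResult<Order?>(_store.TryGetValue(id, out var order) ? order : null);

    public Task<bool> ExistsAsync(Guid id) => Task.FromResult(_store.ContainsKey(id));
}
```

Because V2 extends V1, consumers can migrate one at a time; once nothing references the V1 type directly, the two interfaces can be merged.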

6 Testing for Speed and Signal (Fast Unit, Lean Integration, Sharp Contracts)

Testing in a boundary-first architecture is not about volume—it’s about signal quality and feedback speed. When your domain, application, and adapter boundaries are clean, you can test each layer with a tailored approach. The goal: maximum confidence with minimal runtime. Let’s walk through how to structure a testing strategy that aligns with domain boundaries and avoids redundant or fragile tests.

6.1 Test pyramid tuned for domain boundaries

The classical “test pyramid” still applies but needs tuning for boundary-centric architectures. Instead of UI, service, and unit layers, you test across domain, slice, and integration levels. Each has a different purpose and scope.

6.1.1 Micro-unit tests for domain invariants (pure functions, no I/O)

Domain tests sit at the base of the pyramid. They target pure business logic—aggregates, policies, and domain services—without any infrastructure. These are micro-unit tests: fast, deterministic, hermetic.

public class OrderTests

{

[Fact]

public void Cannot_Add_Item_With_Zero_Quantity()

{

var product = new Product(Guid.NewGuid(), "Book", 10m);

var ex = Assert.Throws<DomainException>(() =>

Order.Create(Guid.NewGuid(), new[] { product },

new[] { new OrderItemDto(product.Id, 0) })

);

Assert.Equal("Quantity must be positive.", ex.Message);

}

}

Such tests run in milliseconds, verifying invariants and edge cases. They require no mocks; if you find yourself mocking dependencies, you’re testing the wrong layer. As a rough guide, these tests make up 70-80% of the suite by count but only around 10% of its runtime.
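For context, the kind of aggregate such a test exercises might look like the sketch below. The Create signature and the "Quantity must be positive." message are inferred from the test above; OrderLine, the other two error messages, and the overall shape are assumptions made for illustration.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public sealed class DomainException : Exception
{
    public DomainException(string message) : base(message) { }
}

public sealed record Product(Guid Id, string Name, decimal Price);
public sealed record OrderItemDto(Guid ProductId, int Quantity);
public sealed record OrderLine(Guid ProductId, int Quantity, decimal UnitPrice); // hypothetical

public sealed class Order
{
    public Guid Id { get; } = Guid.NewGuid();
    public Guid CustomerId { get; }
    public IReadOnlyList<OrderLine> Lines { get; }
    public decimal TotalAmount => Lines.Sum(l => l.Quantity * l.UnitPrice);

    private Order(Guid customerId, IReadOnlyList<OrderLine> lines)
    {
        CustomerId = customerId;
        Lines = lines;
    }

    // Factory method: the only way to obtain an Order, so invariants always hold.
    public static Order Create(Guid customerId, IEnumerable<Product> products, IEnumerable<OrderItemDto> items)
    {
        var catalog = products.ToDictionary(p => p.Id);
        var lines = new List<OrderLine>();
        foreach (var item in items)
        {
            if (item.Quantity <= 0)
                throw new DomainException("Quantity must be positive.");
            if (!catalog.TryGetValue(item.ProductId, out var product))
                throw new DomainException("Unknown product."); // hypothetical rule
            lines.Add(new OrderLine(product.Id, item.Quantity, product.Price));
        }
        if (lines.Count == 0)
            throw new DomainException("An order needs at least one item."); // hypothetical rule
        return new Order(customerId, lines);
    }
}
```

Nothing here touches a database, clock, or network, which is why the corresponding tests need no mocks.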

6.1.2 Slice-level tests per feature (use case + in-memory adapters)

The next level tests a full use case (e.g., PlaceOrderHandler) but replaces real infrastructure with in-memory adapters. This validates orchestration, mapping, and collaboration between ports without paying I/O costs.

public class PlaceOrderSliceTests

{

[Fact]

public async Task PlaceOrder_Should_Save_And_Publish_Event()

{

var repo = new InMemoryOrderRepository();

var publisher = new InMemoryEventPublisher();

var productCatalog = new FakeProductCatalog();

var handler = new PlaceOrderHandler(repo, productCatalog, publisher);

var request = new PlaceOrderRequest(Guid.NewGuid(),

[ new OrderItemDto(productCatalog.DefaultProduct.Id, 2) ]);

var response = await handler.HandleAsync(request);

Assert.NotNull(await repo.GetByIdAsync(response.OrderId));

Assert.Contains(publisher.Published, e => e is OrderPlacedEvent);

}

}

You get high coverage of application logic without integration drag. These tests mimic production flows but remain fast (tens of milliseconds). Use them as your regression safety net.
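The in-memory adapters used by the slice test are not shown in the article; one plausible shape is sketched here. The port signatures (in particular PublishAsync taking an object) and the stub Order record are assumptions for the sake of a self-contained example.

```csharp
using System;
using System.Collections.Concurrent;
using System.Collections.Generic;
using System.Threading.Tasks;

public sealed record Order(Guid Id, decimal TotalAmount); // stub for the sketch

public interface IOrderRepository
{
    Task SaveAsync(Order order);
    Task<Order?> GetByIdAsync(Guid id);
}

public interface IEventPublisher
{
    Task PublishAsync(object @event); // assumed signature
}

// Keeps orders in a dictionary; a fresh instance per test gives isolation for free.
public sealed class InMemoryOrderRepository : IOrderRepository
{
    private readonly ConcurrentDictionary<Guid, Order> _orders = new();

    public Task SaveAsync(Order order)
    {
        _orders[order.Id] = order;
        return Task.CompletedTask;
    }

    public Task<Order?> GetByIdAsync(Guid id) =>
        Task.FromResult<Order?>(_orders.TryGetValue(id, out var order) ? order : null);
}

// Records published events so tests can assert on them afterwards.
public sealed class InMemoryEventPublisher : IEventPublisher
{
    private readonly List<object> _published = new();

    public IReadOnlyList<object> Published => _published;

    public Task PublishAsync(object @event)
    {
        _published.Add(@event);
        return Task.CompletedTask;
    }
}
```

These fakes implement the same ports as the production adapters, so the handler under test cannot tell the difference.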

6.1.3 Integration paths you do care about (DB schema + key gateway)

Finally, you verify the paths that cross real boundaries—database persistence, message broker, or critical external APIs. Don’t test all integrations; test the ones that define contracts.

[Fact]

public async Task EfCoreRepository_Should_Persist_And_Retrieve_Order()

{

using var db = new AppDbContext(TestDbOptions.InMemory());

var repo = new EfCoreOrderRepository(db);

var order = DummyOrders.New();

await repo.SaveAsync(order);

var found = await repo.GetByIdAsync(order.Id);

Assert.Equal(order.TotalAmount, found?.TotalAmount);

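}

These tests prove that mappings align with domain expectations. An in-memory provider does not exercise the real schema, though, so run the same test against a real database (see 6.3) when the schema itself is the contract. Keep these tests lean and targeted; one per integration boundary is often enough.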

6.2 Contract testing between slices/services with Pact

When your system has multiple modules or microservices, contract testing ensures consumers and providers evolve safely. Pact enables consumer-driven contracts: consumers define expectations for provider APIs; providers verify compliance.

Consumer test (e.g., OrderService calling PaymentService):

[Fact]

public async Task Should_Receive_Valid_PaymentResponse()

{

var pact = Pact.V3("OrderService", "PaymentService").WithHttpInteractions();

pact.UponReceiving("A valid payment authorization")

.WithRequest(HttpMethod.Post, "/api/payments")

.WillRespond()

.WithStatus(HttpStatusCode.OK)

.WithJsonBody(new { status = "AUTHORIZED" });

await pact.VerifyAsync(async ctx =>

{

var client = new PaymentClient(ctx.MockServerUri);

var result = await client.AuthorizePaymentAsync(new PaymentRequest(...));

Assert.Equal("AUTHORIZED", result.Status);

});

}

Providers then run verification against the stored pact files to guarantee compatibility. This shifts integration validation from runtime to build time, preventing surprises in staging.

In modular monoliths, Pact is equally useful for testing slice-to-slice contracts (e.g., Billing publishing an InvoiceIssuedEvent consumed by Orders). You validate event schema evolution without deploying.

6.3 Real dependencies in tests with Testcontainers (DB, Kafka, etc.)

When you need full integration confidence but want disposable environments, Testcontainers is the modern choice. It spins up lightweight Docker containers for databases, queues, or any external dependency directly from test code.

public class RepositoryIntegrationTests : IAsyncLifetime

{

private readonly PostgreSqlContainer _pg = new PostgreSqlBuilder()

.WithDatabase("orders").Build();

public async Task InitializeAsync() => await _pg.StartAsync();

public async Task DisposeAsync() => await _pg.DisposeAsync();

[Fact]

public async Task Should_Save_And_Retrieve_Order_In_Postgres()

{

var opts = new DbContextOptionsBuilder<AppDbContext>()

.UseNpgsql(_pg.GetConnectionString()).Options;

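using var db = new AppDbContext(opts);

await db.Database.EnsureCreatedAsync(); // the fresh container starts empty, so create the schema first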

var repo = new EfCoreOrderRepository(db);

var order = DummyOrders.New();

await repo.SaveAsync(order);

var found = await repo.GetByIdAsync(order.Id);

Assert.NotNull(found);

}

}

You get real infrastructure without shared state or fragile CI dependencies. Containers start in seconds, run in isolation, and vanish post-test. Apply the same approach for Kafka, RabbitMQ, or Redis. Your integration suite stays reproducible everywhere.

6.4 Mock external HTTP services with WireMock, including non-HTTP mode for speed

For testing outbound HTTP adapters, WireMock provides programmable mocks and record/replay capability.

[Fact]

public async Task Should_Call_Pricing_API_And_Return_Discount()

{

using var server = WireMockServer.Start();

server.Given(Request.Create().WithPath("/api/discounts/*").UsingGet())

.RespondWith(Response.Create().WithStatusCode(200)

.WithBodyAsJson(new { amount = 5 }));

var client = new HttpPricingGateway(new HttpClient

{ BaseAddress = new Uri(server.Urls[0]) });

var discount = await client.GetDiscountAsync(Guid.NewGuid(), Guid.NewGuid());

Assert.Equal(5, discount?.Amount);

}

For pure speed, you can bypass HTTP entirely by mocking HttpMessageHandler, or by using a non-HTTP (direct-call) stub mode where your WireMock flavor provides one, and injecting pre-programmed responses into your adapter. The goal is fidelity without flakiness.
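The HttpMessageHandler route can be sketched as below. StubHttpMessageHandler and StubResponses are hypothetical helper names, not part of any library; only the HttpMessageHandler base class and HttpClient come from .NET itself.

```csharp
using System;
using System.Net;
using System.Net.Http;
using System.Text;
using System.Threading;
using System.Threading.Tasks;

// Test double: answers every request from a delegate instead of opening a socket.
public sealed class StubHttpMessageHandler : HttpMessageHandler
{
    private readonly Func<HttpRequestMessage, HttpResponseMessage> _respond;

    public StubHttpMessageHandler(Func<HttpRequestMessage, HttpResponseMessage> respond) =>
        _respond = respond;

    protected override Task<HttpResponseMessage> SendAsync(
        HttpRequestMessage request, CancellationToken cancellationToken) =>
        Task.FromResult(_respond(request));
}

public static class StubResponses
{
    // Builds a JSON response with the given status code and body.
    public static HttpResponseMessage Json(HttpStatusCode status, string json) =>
        new(status) { Content = new StringContent(json, Encoding.UTF8, "application/json") };
}
```

An HttpClient constructed with this handler and a placeholder BaseAddress can be passed to the adapter exactly as in the WireMock test, with no listener or port involved. The trade-off is that URL routing and real serialization over the wire go unexercised, so keep at least one WireMock-backed test per adapter.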

6.5 Cloud emulation locally with LocalStack for AWS-heavy adapters

When your adapters talk to AWS services (S3, SQS, DynamoDB), integration testing can be slow and costly. LocalStack emulates AWS APIs locally. Combine it with Testcontainers for a disposable local cloud:

// Uses the Testcontainers.LocalStack module; one container exposes S3 and SQS on a single endpoint.

var localStack = new LocalStackBuilder().Build();

await localStack.StartAsync();

// LocalStack accepts any credentials; "test"/"test" is the conventional placeholder.

var s3Client = new AmazonS3Client(new BasicAWSCredentials("test", "test"),

new AmazonS3Config { ServiceURL = localStack.GetConnectionString(), ForcePathStyle = true });

await s3Client.PutBucketAsync("test-bucket");

This setup allows you to test S3 or SQS adapters using the same AWS SDK code paths your production code uses, with no calls to real AWS and no real credentials. You gain confidence that serialization and SDK usage are correct while keeping CI runs fast. LocalStack does not enforce IAM policies by default, so validate those against a real AWS account.

6.6 Keeping tests under a minute: parallelism, hermetic fixtures, golden test data

A healthy build runs all tests under a minute. To achieve this:

- Parallelize: run domain and slice tests concurrently using test frameworks’ parallel execution.

- Hermetic fixtures: reset state between tests via in-memory databases or container re-initialization.

- Golden test data: instead of random generators, maintain known-good fixtures stored as JSON/YAML snapshots. They document expectations and prevent flaky assertions.

- Selective integration: only spin up containers for critical paths; use mocks elsewhere.

// xUnit treats each test class as its own collection and runs collections in parallel by default.

// Classes that share a [Collection] run sequentially, so reserve that attribute for shared fixtures.

public class DomainSuite { /* domain tests */ }

public class SliceSuite { /* use case tests */ }

With strict isolation and parallel runs, teams get immediate feedback after each commit, turning tests into a development accelerator, not a drag.
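Golden test data from the list above might be loaded along these lines. The fixture shape (OrderFixture, OrderItemFixture) and the GoldenData helper are hypothetical; in a real suite the JSON would be read from a checked-in snapshot file rather than passed as a string.

```csharp
using System;
using System.Text.Json;

// Hypothetical fixture shape: inputs plus the expected outcome, stored together.
public sealed record OrderItemFixture(string Sku, int Quantity, decimal UnitPrice);
public sealed record OrderFixture(string Name, OrderItemFixture[] Items, decimal ExpectedTotal);

public static class GoldenData
{
    private static readonly JsonSerializerOptions Options =
        new() { PropertyNameCaseInsensitive = true };

    // Parses a golden snapshot and fails loudly if it is empty.
    public static OrderFixture Parse(string json) =>
        JsonSerializer.Deserialize<OrderFixture>(json, Options)
        ?? throw new InvalidOperationException("Golden fixture is empty.");
}
```

Because the expected total lives next to the inputs, a failing assertion points at a specific, reviewable fixture rather than at a random seed.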

7 Operating the Architecture: From First Commit to Production

Architecture doesn’t end at dotnet run or mvn spring-boot:run. Operating a boundary-first system means evolving, monitoring, and aligning it with people and delivery practices. Let’s see how to make it thrive beyond the initial build.

7.1 Start as a modular monolith, carve out later (signals for extraction)

The pragmatic route: begin as a modular monolith with enforced boundaries. Extract microservices only when boundaries prove stable and the scaling need is real. Extraction signals include:

- Independent release cadence needed by a module (e.g., pricing engine updated daily).

- Distinct infrastructure requirements (e.g., high-volume event stream).

- Performance isolation or failure containment.

When extraction time arrives, you already have clear ports and adapters—just replace in-process calls with HTTP or messaging transport. The rest of the domain remains unchanged. This incremental path minimizes risk and avoids premature distribution.
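A rough sketch of that swap, using the pricing gateway as the example: the port stays fixed while the adapter changes from an in-process call to HTTP. The Discount record, the InProcessPricingGateway class, and the route are assumptions made for illustration.

```csharp
using System;
using System.Net.Http;
using System.Net.Http.Json;
using System.Threading.Tasks;

public sealed record Discount(decimal Amount);

// The port the Orders slice depends on; it does not reveal where pricing runs.
public interface IPricingGateway
{
    Task<Discount?> GetDiscountAsync(Guid customerId, Guid productId);
}

// Before extraction: pricing logic lives in the same process.
public sealed class InProcessPricingGateway : IPricingGateway
{
    private readonly Func<Guid, Guid, decimal> _calculate;

    public InProcessPricingGateway(Func<Guid, Guid, decimal> calculate) => _calculate = calculate;

    public Task<Discount?> GetDiscountAsync(Guid customerId, Guid productId) =>
        Task.FromResult<Discount?>(new Discount(_calculate(customerId, productId)));
}

// After extraction: same port, HTTP transport. The route is a hypothetical example.
public sealed class HttpPricingGateway : IPricingGateway
{
    private readonly HttpClient _http;

    public HttpPricingGateway(HttpClient http) => _http = http;

    public Task<Discount?> GetDiscountAsync(Guid customerId, Guid productId) =>
        _http.GetFromJsonAsync<Discount>($"api/discounts/{customerId}/{productId}");
}
```

Handlers that depend on IPricingGateway compile unchanged after the swap; what does change is the failure model, since the HTTP adapter can time out or fail where the in-process one could not.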

7.2 Align slices with teams (Conway’s Law in practice)

Your architecture mirrors your communication structure. Each vertical slice or module should map cleanly to a cross-functional team owning that domain capability.

- Orders team owns /Features/Orders and its outbound ports.

- Billing team owns /Features/Billing.

- Shared infrastructure (e.g., event bus, observability) has a platform owner.

Autonomy in code boundaries supports autonomy in decision making. Teams can release independently, optimize their slice’s performance, and evolve their models without coordination overhead—Conway’s Law working for you, not against you.

7.3 Observability by slice: logs, metrics, traces tied to use cases

When features are organized by slice, observability should follow the same boundary lines. Attach context—slice name, use case name—to every log, metric, and trace.

using var scope = _tracer.StartActiveSpan("PlaceOrder");

_logger.LogInformation("Feature=Orders Slice=PlaceOrder Status=Started");

await _handler.HandleAsync(request);

_logger.LogInformation("Feature=Orders Slice=PlaceOrder Status=Completed");

Metrics can be aggregated per slice (orders.request.count, billing.failure.rate), giving visibility into where errors or latency concentrate. Traces show the complete request flow across adapters, which is invaluable for debugging distributed systems.

Adopt tools like OpenTelemetry with naming conventions: <module>.<usecase>.<metric>. This not only improves diagnosis but also reinforces ownership.
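One way to apply the naming convention is with the System.Diagnostics.Metrics API, which OpenTelemetry for .NET can collect from. The meter name "Shop.Orders", the instrument names, and the OrdersMetrics class are hypothetical.

```csharp
using System;
using System.Collections.Generic;
using System.Diagnostics.Metrics;

public static class OrdersMetrics
{
    // One Meter per module; instrument names follow <module>.<usecase>.<metric>.
    public static readonly Meter Meter = new("Shop.Orders");

    public static readonly Counter<long> PlaceOrderRequests =
        Meter.CreateCounter<long>("orders.place_order.request.count");

    public static readonly Counter<long> PlaceOrderFailures =
        Meter.CreateCounter<long>("orders.place_order.failure.count");

    // Records one request and, if it failed, one failure, both tagged with the slice name.
    public static void RecordRequest(bool succeeded)
    {
        var tag = new KeyValuePair<string, object?>("slice", "PlaceOrder");
        PlaceOrderRequests.Add(1, tag);
        if (!succeeded) PlaceOrderFailures.Add(1, tag);
    }
}
```

Keeping the instruments in one static class per module makes the owning team obvious and gives dashboards a predictable prefix to filter on.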

7.4 Data ownership per module; cross-module interaction via domain events

Each module owns its data schema. Cross-module queries are forbidden; instead, modules exchange domain events. Orders publishes OrderPlacedEvent, Billing consumes it to generate invoices.

public record OrderPlacedEvent(Guid OrderId, Guid CustomerId, decimal Total) : INotification;

public class InvoiceHandler : INotificationHandler<OrderPlacedEvent>

{

private readonly IInvoiceService _invoiceService;

// The service is injected; without this constructor the field would never be assigned.

public InvoiceHandler(IInvoiceService invoiceService) => _invoiceService = invoiceService;

public async Task Handle(OrderPlacedEvent e, CancellationToken ct)

=> await _invoiceService.GenerateAsync(e.OrderId, e.Total);

}

Events keep modules autonomous while their data converges through eventual consistency. When shared data is unavoidable, replicate via read-models or projections rather than sharing tables. This ensures loose coupling and clarity of data ownership.

7.5 Backward-compatible change patterns (expand/contract adapters)

When evolving APIs, DTOs, or events, use expand/contract patterns to preserve backward compatibility.

Example: replacing a field in a response DTO.

- Expand – add the new field as optional. Existing consumers ignore it safely.

- Deploy producers first – they emit both the old and the new field.

- Upgrade consumers – they start reading the new field.

- Contract – remove the old field once all consumers have migrated.

For adapters, version interfaces (IPricingGatewayV2) or use feature flags to toggle behavior. For events, embed version numbers or schema registry (e.g., Confluent Schema Registry). These tactics let you iterate rapidly without breaking downstream consumers.
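The expand step for an event can be sketched with System.Text.Json. The Currency field, the "USD" fallback, and the serializer class are assumptions made for illustration; the point is only that an optional field lets payloads from before and after the change parse with the same type.

```csharp
using System;
using System.Text.Json;

// Expanded contract: Currency is new and optional, so older payloads still parse.
public sealed record OrderPlacedEvent(Guid OrderId, decimal Total, string? Currency = null)
{
    // Hypothetical default applied to payloads that predate the field.
    public string EffectiveCurrency => Currency ?? "USD";
}

public static class OrderPlacedEventSerializer
{
    // Web defaults: camelCase names and case-insensitive matching.
    private static readonly JsonSerializerOptions Options = new(JsonSerializerDefaults.Web);

    public static OrderPlacedEvent Deserialize(string json) =>
        JsonSerializer.Deserialize<OrderPlacedEvent>(json, Options)
        ?? throw new InvalidOperationException("Empty event payload.");
}
```

System.Text.Json ignores unknown JSON properties by default, so a consumer still on the old contract also tolerates the expanded payload. The contract step is safe only once no producer omits the field and no consumer relies on the fallback.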

7.6 Lightweight documentation: ADRs, diagrams-as-code, README per slice

Heavy architectural documentation quickly decays. Instead, embed lightweight, living docs directly in your repo:

- ADRs (Architecture Decision Records) capture the why behind choices. Example: adr-002-hexagonal-persistence.md.

- Diagrams-as-code (e.g., Structurizr DSL, PlantUML) keep visuals versioned and regenerable.

- README per slice summarizing use cases, ports, and adapters.

Example ADR snippet:

# ADR-004: Use Outbox Pattern for Event Publishing

Date: 2025-10-20

Status: Accepted

Context: OrderPlaced events must be published if and only if the order transaction commits.

Decision: Implement outbox table with background dispatcher.

Consequences: Slight latency increase, but events are neither lost nor published for rolled-back transactions. Delivery is at-least-once, so consumers should be idempotent.

Developers discover architectural intent by browsing the repo, not static wiki pages. This reduces onboarding friction and aligns code with reasoning.

8 Playbooks, Patterns, and Pitfalls

By this stage, you’ve built the architectural foundations, reinforced them with testing and observability, and understood how to evolve them safely. The final piece is about operational excellence—the daily craft of applying these patterns without ceremony. In this section, we’ll cover practical playbooks, recurring pitfalls, and a curated toolbelt for high-signal, low-friction software delivery.

8.1 Build your “Default Stack” checklists (hexagonal defaults, VSA defaults)

Every engineering organization benefits from a default stack—a pre-agreed set of choices for architecture, testing, and tooling that avoids reinvention. This is not rigidity; it’s acceleration through convention.

For hexagonal (ports & adapters) systems, a typical default stack might include:

- Application pattern: Clean Architecture or DDD Lite structure (Domain, Application, Infrastructure).

- Persistence: EF Core (C#), JPA/Hibernate (Java), or TypeORM (Node).

- Integration: Outbox pattern for event reliability.

- Testing: Fast unit + Testcontainers + Pact for contract validation.

- Boundary enforcement: NetArchTest or ArchUnit.

- Deployment: containerized modular monolith with feature toggles.

For Vertical Slice Architecture (VSA) systems, defaults emphasize speed:

- Structure: Feature folders under /Features/<domain> with command/query handlers and DTOs.

- Mediator pattern: MediatR (C#) or @nestjs/cqrs (Node).

- Mapping: per-slice Mapper or lightweight object transformation.

- Testing: slice-level tests + minimal mocks + ephemeral DB via Testcontainers.

- Monitoring: feature-based logs and metrics, not global.

Having these checklists documented in your engineering handbook or repo scaffold reduces onboarding time dramatically. When new teams start, they know exactly where domain logic goes, how to test it, and how to evolve boundaries.

8.2 Common “gotchas” (repo explosion, listener soup, duplicating models too eagerly)

Even disciplined teams fall into recurring traps that quietly erode architectural clarity.

Repo explosion

When adopting vertical slices, teams sometimes create a new repository for every slice or module, fragmenting code, build pipelines, and shared context. The better approach is a monorepo with strong modularity: boundaries enforced by architecture tests, not by separate Git repos. This keeps dependency management and refactors practical.

Listener soup

As domain event adoption grows, event handlers multiply: OrderPlacedEvent, OrderPaidEvent, OrderShippedEvent, each spawning a dozen listeners. Without discipline, you get spaghetti-like event chains that are hard to trace. The antidote:

- Use event-driven integration between modules, not within one.

- Group related handlers logically under /Features/<Domain>/Listeners.

- Use observability (structured logs, spans) to visualize event flows.

Duplicating models too eagerly

Overzealous teams sometimes duplicate DTOs, entities, and events for every layer in the name of “decoupling.” While separation is good, excessive duplication increases mapping overhead and cognitive load. The heuristic:

- If a model is purely structural (e.g., OrderItem with no behavior), reuse it across layers.

- If semantics differ (e.g., persistence entity vs. domain aggregate), separate them.

The balance lies in intent-based duplication, not blanket rules.

8.3 Example playbook: adding a new feature slice in 60 minutes (step-by-step)

The following playbook shows how to add a new slice using the patterns we’ve built—rapidly, safely, and with minimal ceremony. Let’s say we need a new feature: “Cancel Order.”

Step 1 – Create slice folder

/Features/Orders/CancelOrder/

Add a subfolder structure for Command, Response, Handler, and optional tests.

Step 2 – Define DTOs (contracts)

public record CancelOrderCommand(Guid OrderId, string Reason);

public record CancelOrderResponse(Guid OrderId, string Status);

Step 3 – Define inbound port (use case interface)

public interface ICancelOrderUseCase

{

Task<CancelOrderResponse> HandleAsync(CancelOrderCommand command);

}

Step 4 – Implement handler

public class CancelOrderHandler : ICancelOrderUseCase

{

private readonly IOrderRepository _orders;

private readonly IEventPublisher _publisher;

public CancelOrderHandler(IOrderRepository orders, IEventPublisher publisher)

{

_orders = orders;

_publisher = publisher;

}

public async Task<CancelOrderResponse> HandleAsync(CancelOrderCommand cmd)

{

var order = await _orders.GetByIdAsync(cmd.OrderId)

?? throw new NotFoundException("Order not found");

order.Cancel(cmd.Reason);

await _orders.SaveAsync(order);

await _publisher.PublishAsync(new OrderCanceledEvent(order.Id, cmd.Reason));

return new CancelOrderResponse(order.Id, order.Status.ToString());

}

}

Step 5 – Write slice-level test

[Fact]

public async Task CancelOrder_Should_Update_Status_And_Publish_Event()

{

var repo = new InMemoryOrderRepository();

var publisher = new InMemoryEventPublisher();

var handler = new CancelOrderHandler(repo, publisher);

var order = DummyOrders.New();

await repo.SaveAsync(order);

await handler.HandleAsync(new CancelOrderCommand(order.Id, "Customer request"));

var saved = await repo.GetByIdAsync(order.Id);

Assert.Equal(OrderStatus.Canceled, saved?.Status);

Assert.Contains(publisher.Published, e => e is OrderCanceledEvent);

}

Step 6 – Add controller endpoint

[HttpPost("/orders/{id}/cancel")]

public async Task<IActionResult> Cancel(Guid id, [FromBody] CancelOrderCommand cmd)

{

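// _cancelOrder is the injected ICancelOrderUseCase from Step 3 (the field name is illustrative).

var result = await _cancelOrder.HandleAsync(cmd with { OrderId = id });

return Ok(result);

}

In under an hour, you have a new vertical slice: fully tested, integrated, and deployable without touching any shared service layers.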
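The handler in Step 4 delegates the actual decision to order.Cancel(reason), which the playbook does not show. A minimal sketch of what that method might enforce follows; the specific rules (a reason is required, shipped orders cannot be canceled, repeat cancellation is a no-op) are assumptions, not rules stated in the article.

```csharp
using System;

public sealed class DomainException : Exception
{
    public DomainException(string message) : base(message) { }
}

public enum OrderStatus { Placed, Shipped, Canceled }

public sealed class Order
{
    public Guid Id { get; } = Guid.NewGuid();
    public OrderStatus Status { get; private set; } = OrderStatus.Placed;
    public string? CancellationReason { get; private set; }

    public void MarkShipped() => Status = OrderStatus.Shipped;

    // The aggregate, not the handler, decides whether cancellation is allowed.
    public void Cancel(string reason)
    {
        if (string.IsNullOrWhiteSpace(reason))
            throw new DomainException("A cancellation reason is required.");
        if (Status == OrderStatus.Shipped)
            throw new DomainException("Shipped orders cannot be canceled.");
        if (Status == OrderStatus.Canceled)
            return; // idempotent: canceling twice is a no-op

        Status = OrderStatus.Canceled;
        CancellationReason = reason;
    }
}
```

Keeping these checks inside the aggregate means they are covered by micro-unit tests, while the slice test only needs to confirm orchestration.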

8.4 Example playbook: swapping a persistence technology via an adapter (step-by-step)

Swapping persistence layers is one of the ultimate tests of your boundary design. Here’s how to replace EF Core with DynamoDB in a hexagonal system with minimal friction.

Step 1 – Keep the outbound port unchanged

public interface IOrderRepository

{

Task SaveAsync(Order order);

Task<Order?> GetByIdAsync(Guid id);

}

Step 2 – Implement new adapter

public class DynamoOrderRepository : IOrderRepository

{

private readonly IAmazonDynamoDB _db;

private readonly DynamoDBContext _context;

public DynamoOrderRepository(IAmazonDynamoDB db)

{

_db = db;

_context = new DynamoDBContext(db);

}

public async Task SaveAsync(Order order) =>

await _context.SaveAsync(new OrderDocument(order));

public async Task<Order?> GetByIdAsync(Guid id)

{

var doc = await _context.LoadAsync<OrderDocument>(id);

return doc?.ToDomain();

}

}

Step 3 – Register adapter in DI container

services.RemoveAll<IOrderRepository>(); // extension from Microsoft.Extensions.DependencyInjection.Extensions

services.AddScoped<IOrderRepository, DynamoOrderRepository>();

Step 4 – Run adapter integration test

Use Testcontainers with LocalStack to emulate DynamoDB.

var localStack = new LocalStackBuilder().Build(); // Testcontainers.LocalStack module

await localStack.StartAsync();

Step 5 – Validate application unchanged

Because all application and domain layers depend on IOrderRepository, no other code changes are required. All handlers and tests still compile. The only difference: configuration.

That’s decoupling demonstrated in practice: the adapter changed, and the application did not.
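The adapter in Step 2 relies on an OrderDocument type with a constructor taking an Order and a ToDomain() method, neither of which is shown. The sketch below illustrates that mapping with a simplified aggregate; the Rehydrate factory and the property set are assumptions, and the AWS SDK mapping attributes a real document class would carry are omitted to keep the example dependency-free.

```csharp
using System;

public enum OrderStatus { Placed, Canceled }

// Simplified aggregate; Rehydrate is a hypothetical factory for loading persisted state.
public sealed class Order
{
    public Guid Id { get; }
    public decimal TotalAmount { get; }
    public OrderStatus Status { get; }

    private Order(Guid id, decimal totalAmount, OrderStatus status)
    {
        Id = id;
        TotalAmount = totalAmount;
        Status = status;
    }

    public static Order Rehydrate(Guid id, decimal totalAmount, OrderStatus status) =>
        new(id, totalAmount, status);
}

// Persistence shape: flat, settable, and owned entirely by the adapter.
public sealed class OrderDocument
{
    public Guid Id { get; set; }
    public decimal TotalAmount { get; set; }
    public string Status { get; set; } = "";

    public OrderDocument() { } // object mappers generally need a parameterless constructor

    public OrderDocument(Order order)
    {
        Id = order.Id;
        TotalAmount = order.TotalAmount;
        Status = order.Status.ToString();
    }

    public Order ToDomain() =>
        Order.Rehydrate(Id, TotalAmount, Enum.Parse<OrderStatus>(Status));
}
```

Because the document type lives in the adapter, storage-specific concerns such as key design or string-encoded enums never leak into the domain model.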

8.5 Reference implementations & learning paths

To deepen mastery, explore open-source examples and industry resources that embody these principles.

8.5.1 Hexagonal deep dives & clarifications

- Alistair Cockburn’s original “Ports and Adapters” paper – the definitive conceptual anchor.

- Optivem Journal’s Clean vs. Hexagonal series – excellent comparative breakdowns of dependency flow and testability.

- Ardalis Clean Architecture Template (.NET) – a reference-grade example balancing structure and pragmatism.

- Jakub Nabrdalik’s Hexagonal in Practice (Java) – tactical examples with Spring and real adapters.

8.5.2 Vertical Slice talks & guides

- Jimmy Bogard – “Vertical Slice Architecture” (NDC Conference) – how vertical slices emerged from years of enterprise layering pain.

- Milan Jovanović’s blog – modern .NET examples showing MediatR-based slices and thin orchestrators.

- Ivan Ojgarcia’s article “Vertical Slicing: A Term for Powerful Hexagonal Architecture” – how vertical slicing complements ports & adapters.

8.5.3 Templates & starters: Ardalis (.NET), NestJS VSA, Clean-ish Spring Boot

- Ardalis Clean Architecture – ideal hexagonal baseline; strong separation and test scaffolds.

- NestJS CQRS Starter – organizes Node modules as feature slices; aligns perfectly with VSA.

- Spring Boot Clean-ish Template – pragmatic DDD-lite implementation with hexagonal persistence and messaging examples.

Cloning and experimenting with these templates accelerates onboarding and gives teams battle-tested conventions from day one.

8.6 Toolbelt recap: ArchUnit / NetArchTest / dependency-cruiser / Pact / Testcontainers / WireMock / LocalStack

Finally, a concise toolbelt reference—your go-to stack for pragmatic domain boundaries:

| Tool | Purpose | Ecosystem | Example Use |
|---|---|---|---|
| ArchUnit | Enforce package/layer rules | Java | Block domain → infra deps |
| NetArchTest / ArchUnitNET | Code-level dependency tests | .NET | Verify boundaries in CI |
| dependency-cruiser | Visualize & enforce imports | JS/TS | Prevent cross-slice imports |
| Pact | Contract tests | All | Consumer-driven verification |
| Testcontainers | Real infra in tests | All | Start PostgreSQL/Kafka in CI |
| WireMock | HTTP API mocking | All | Simulate Pricing API responses |
| LocalStack | AWS service emulation | All | Local S3/SQS testing |

Each complements the others. Together, they provide confidence, visibility, and speed—the three cornerstones of maintainable, boundary-driven systems.

A team fluent in these patterns doesn’t chase architectures—they shape them. By combining clean domain boundaries, fast feedback loops, and disciplined tooling, they reach that rare state where design elegance and delivery velocity coexist. And that’s the true spirit of Domain Boundaries Without Ceremony: clarity, adaptability, and craftsmanship through simplicity.