1 The Modern Landscape of Azure Background Processing

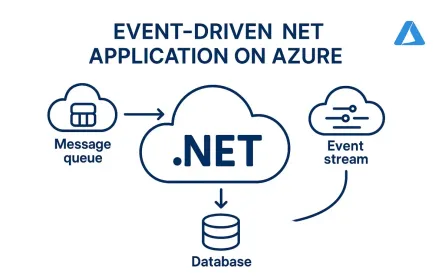

Background processing on Azure has evolved from “run this somewhere in the background” to a set of well-defined architectural choices. What used to be a simple background thread is now a decision about durability, scaling behavior, cost model, observability, and operational risk.

For senior .NET engineers, the real question is no longer “How do I run work in the background?” but “Where should this workload live, and what guarantees does it need?” Today, the primary options are Azure Functions, Azure WebJobs, and Azure Container Apps (ACA) Jobs. Each solves a different class of problems.

1.1 Evolution of Background Tasks: From Cloud Services to Serverless and Containerized Jobs

In early Azure architectures, background processing typically ran inside Azure Cloud Services worker roles — essentially managed virtual machines with manual scaling and high operational overhead.

Then came Azure WebJobs, running inside Azure App Service. WebJobs simplified deployment by collocating background logic with your web application. But this also meant tight coupling: scaling your API scaled your jobs, and background tasks competed for the same CPU and memory.

In 2016, Azure Functions changed the model. Instead of thinking about servers, you focused on triggers. Scaling became automatic. Billing became per-execution. Native integration with Azure Service Bus, Storage Queues, and Event Hubs reduced infrastructure plumbing.

By 2023–2025, Azure Container Apps Jobs emerged as a first-class option for containerized, run-to-completion workloads. This filled a gap between serverless and Kubernetes for teams needing predictable CPU and memory reservations, custom runtimes, GPU support, or scaling based on non-Azure metrics via KEDA.

Now, background processing on Azure spans three clear models:

- App Service–based (WebJobs)

- Serverless event-driven (Functions)

- Containerized run-to-completion (ACA Jobs)

Choosing correctly depends on execution time, throughput, isolation requirements, and cost predictability.

1.2 Why “Fire and Forget” Is an Anti-Pattern in Distributed Systems

ASP.NET Core provides background mechanisms like IHostedService and BackgroundService. These are valid tools inside a single process. For example:

public class EmailBackgroundService : BackgroundService

{

protected override async Task ExecuteAsync(CancellationToken stoppingToken)

{

while (!stoppingToken.IsCancellationRequested)

{

var job = await _channel.Reader.ReadAsync(stoppingToken);

await SendEmailAsync(job);

}

}

}

This works well when you only have one instance, process restarts are rare, and losing in-memory work is acceptable. But in distributed systems, these assumptions break:

- No durability across restarts — if the instance restarts, in-memory queues are lost.

- No cross-instance coordination — each instance has its own queue with no global distribution.

- No built-in retry or dead-letter handling — retries and poison message detection must be hand-built.

- No back-pressure — an endpoint that enqueues 10,000 tasks can overwhelm the process.
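
The back-pressure gap in particular can be narrowed, though not closed, inside a single process. The following is a minimal sketch, assuming a hypothetical EmailJob record and an illustrative capacity of 100: a bounded channel makes producers wait, or fail fast, when the consumer falls behind. It does nothing for durability, so queued items are still lost on restart.

```csharp
using System.Threading.Channels;

// Hypothetical job type used only for this illustration.
public sealed record EmailJob(string To, string Subject);

public static class EmailQueue
{
    // Capacity is an assumed value; tune it to what the consumer can absorb.
    public static Channel<EmailJob> Create(int capacity = 100) =>
        Channel.CreateBounded<EmailJob>(new BoundedChannelOptions(capacity)
        {
            // WriteAsync waits when the channel is full instead of growing without limit.
            FullMode = BoundedChannelFullMode.Wait,
            SingleReader = true
        });

    // Non-blocking variant: returns false when the channel is full,
    // so an API endpoint can reject the request rather than pile up work.
    public static bool TryEnqueue(Channel<EmailJob> channel, EmailJob job) =>
        channel.Writer.TryWrite(job);
}
```

An endpoint that calls TryEnqueue can translate a false result into an HTTP 429 or 503, which pushes the overload back to the caller instead of into process memory.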

The common anti-pattern:

// Fragile in distributed systems

Task.Run(() => ProcessOrderAsync(order));

The correct pattern externalizes work into a durable system:

var message = JsonSerializer.Serialize(order);

await serviceBusSender.SendMessageAsync(

new ServiceBusMessage(message));

Now you gain durable storage, at-least-once delivery, centralized retry policies, dead-letter queues, and horizontal scaling.

Architectural rule: if work must survive restarts or scale across instances, it belongs in a queue or event stream — not IHostedService or Task.Run.

1.3 The Shift Toward the Isolated Worker Model

The Azure Functions hosting model has changed significantly. The most important shift is toward the Isolated Worker Model for .NET 8, .NET 9, and .NET 10.

In the isolated model, your application runs in a separate worker process that communicates with the Functions host over gRPC. Your Program.cs behaves like a standard .NET host:

var host = new HostBuilder()

.ConfigureFunctionsWebApplication()

.ConfigureServices(services =>

{

services.AddScoped<IOrderService, OrderService>();

})

.Build();

await host.RunAsync();

This gives you full control over startup, standard .NET configuration patterns, clean DI boundaries, and better testability.

The in-process model (where your code runs inside the Functions runtime) is officially deprecated, with retirement targeting late 2026. Always verify the latest timeline in the official documentation. The practical takeaway: do not start new projects on in-process, and plan migrations if you are still using it.

2 Comparative Analysis: Choosing the Right Compute for the Job

Choosing between Azure Functions, WebJobs, and ACA Jobs depends on networking requirements, security posture, deployment complexity, scaling control, and operational visibility. This section compares the platforms through real architectural trade-offs.

2.1 Feature Matrix

| Capability | Azure Functions (Isolated Worker) | Azure WebJobs | ACA Jobs |
|---|---|---|---|
| Execution Model | Event-driven, serverless | Continuous or triggered | Containerized run-to-completion |
| Scaling | Event-driven auto-scaling | Bound to App Service Plan | KEDA-based event scaling |
| Runtime Isolation | High (separate worker process) | Low (runs on the same App Service Plan instances as the web app) | High (dedicated container boundary) |
| VNet Integration | Yes (Premium & Flex; Consumption limited) | Yes (via App Service VNet integration) | Yes (native VNet environment) |
| Managed Identity | Native support | Supported via App Service identity | Native support |
| Long-Running Jobs | 5–10 min on Consumption; unlimited on Premium/Flex; Durable for orchestration | No hard limit, but tied to App Service stability | No hard limit |
| Cost Model | Per execution (Consumption/Flex) or reserved (Premium) | App Service Plan-based | CPU/memory per execution |
| Deployment Complexity | Low to medium | Low (shared with web app) | Medium (container registry + image pipeline) |
| Observability | Built-in Application Insights | Uses App Service logging; less integrated | Azure Monitor + container logs |
| Best Fit | Event-driven, short-lived, cloud-native workloads | Legacy App Service environments | Heavy compute, custom runtime, GPU, batch workloads |

2.2 Cost Models: Consumption, Premium, and Flex Consumption

Consumption Plan

- Charged per execution and duration

- Scales automatically from zero

- Default timeout: 5 minutes, configurable up to 10 minutes

Best for bursty, short-lived workloads. The challenge is that cold starts and execution limits introduce friction for steady workloads.

Premium Plan

- Pre-allocated instances (no cold start)

- Unlimited execution time

- Full VNet integration

- Higher baseline monthly cost

Flex Consumption (GA 2024/2025)

Designed to sit between Consumption and Premium:

- Supports Always Ready instances

- Per-instance concurrency control

- No hard execution limit

- Lower baseline cost than Premium

Flex can reduce baseline compute cost compared to Premium for medium, steady workloads by avoiding fully reserved instance pricing. Architects should validate cost assumptions using the Azure Pricing Calculator.

For long-running, CPU-heavy workloads, ACA Jobs may still be more predictable because pricing directly maps to allocated resources.

Messaging Trade-Offs: Azure Queue Storage vs. Service Bus

If you use queues, you must choose the right one.

- Azure Queue Storage: Simple, high throughput, low cost. No sessions or advanced routing.

- Azure Service Bus: FIFO via sessions, transactions, dead-letter queues, advanced routing (topics/subscriptions).

If you need ordering guarantees, transactions, or complex routing, use Service Bus. If you need massive throughput at low cost and can tolerate simpler semantics, Queue Storage is often sufficient.

Resource Isolation

- WebJobs share App Service resources. Background work competes with web traffic.

- Functions Premium/Flex isolate workloads better, though still under the Functions runtime.

- ACA Jobs provide full container-level CPU and memory isolation.

If one workload must never impact another, container isolation is usually the safest boundary.

2.3 Scaling Mechanics: Event-Driven vs. KEDA-Based

Azure Functions scale based on trigger-specific metrics (Service Bus backlog, queue length, Event Hub partition load). Architects can influence behavior through maxConcurrentCalls, batch size, prefetch, target-based scaling settings, and max scale-out limits.

ACA Jobs use KEDA, which supports custom scalers beyond Azure-native triggers:

- Kafka lag

- PostgreSQL row count queries

- Redis list length

- Prometheus metrics

- Cron schedules

Example KEDA configuration for Kafka:

triggers:

- type: kafka

metadata:

bootstrapServers: kafka:9092

topic: orders

consumerGroup: order-group

lagThreshold: "500"

In short: Functions scale based on trigger-native metrics. ACA Jobs scale based on almost any metric KEDA supports.

2.4 Decision Tree: When to Use What?

flowchart TD

A[Is the workload event-driven?] -->|No| B[Is it long-running batch or compute-heavy?]

A -->|Yes| C[Is execution <10 minutes?]

C -->|Yes| D[Use Azure Functions - Consumption or Flex]

C -->|No| E[Is it orchestration or multi-step workflow?]

E -->|Yes| F[Use Durable Functions]

E -->|No| G[Use Functions Premium or ACA Jobs]

B -->|Yes| H[Use Azure Container Apps Jobs]

B -->|No| I[Already on App Service?]

I -->|Yes| J[Use WebJobs - short term]

I -->|No| H

Use Azure Functions When:

- The workload is event-driven with short execution times

- You want minimal infrastructure management

- Azure-native triggers fit your scaling needs

Use Durable Functions When:

- You need orchestration (fan-out/fan-in, human approval, timers)

- The workflow spans multiple steps with state that must survive restarts

Use Azure Container Apps Jobs When:

- Execution time may be hours

- You need full container control or GPU compute

- Scaling must react to custom metrics via KEDA

Use Azure WebJobs When:

- The system already runs on App Service

- Background load is predictable and modest

- You are incrementally modernizing toward a cloud-native model

The right choice depends on durability requirements, execution time, networking needs, scaling control, and cost tolerance. Treat background jobs as a first-class architectural component.

3 Azure Functions: Isolated Worker Model and Flex Consumption

Azure Functions remains the most common entry point for background processing on Azure. The Isolated Worker Model and Flex Consumption have fundamentally changed how you design, scale, and operate production workloads.

3.1 Architecture of the Isolated Worker

In the isolated model, your application runs in its own worker process. The Azure Functions host communicates with your worker over gRPC. Your Program.cs looks and behaves like a standard .NET application with full DI, configuration, and middleware support.

Middleware

Middleware is one of the biggest advantages of the isolated model. It allows cross-cutting concerns to be implemented once:

// builder is the IFunctionsWorkerApplicationBuilder passed to ConfigureFunctionsWebApplication

builder.UseMiddleware(async (context, next) =>

{

var logger = context.InstanceServices

.GetRequiredService<ILoggerFactory>()

.CreateLogger("FunctionTiming");

var sw = Stopwatch.StartNew();

await next(); // invoke the rest of the pipeline and the function itself

sw.Stop();

logger.LogInformation(

"Function {Name} executed in {Elapsed} ms",

context.FunctionDefinition.Name,

sw.ElapsedMilliseconds);

});

This runs for every function invocation. You can extend it to attach correlation IDs, measure queue latency, or push custom metrics, a level of control that was not readily available in the old in-process model.

3.2 Leveraging Flex Consumption

Eliminating Cold Start with Always-Ready Instances

Flex supports Always Ready instances, keeping a defined number of workers warm:

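az functionapp create \

--name my-flex-app \

--resource-group rg \

--storage-account <storage-account> \

--flexconsumption-location <region> \

--runtime dotnet-isolated \

--runtime-version 8.0

az functionapp scale config always-ready set \

--name my-flex-app \

--resource-group rg \

--settings function:ProcessOrder=1

Always-ready counts are set per trigger group or per function. The function:ProcessOrder key here assumes a function with that name, so check the current CLI reference for the group names that apply to your triggers. Keeping an instance warm means the worker process is already started, the DI container is built, and configuration is loaded when an event arrives. That makes a measurable difference for latency-sensitive event processing without moving to fully reserved Premium instances.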

Per-Instance Concurrency Control

Concurrency tuning is one of the most overlooked scaling controls. Configure it in host.json:

{

"version": "2.0",

"concurrency": {

"dynamicConcurrencyEnabled": true

},

"extensions": {

"serviceBus": {

"prefetchCount": 100,

"maxConcurrentCalls": 32

}

}

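}

maxConcurrentCalls controls how many messages a single instance processes simultaneously. prefetchCount reduces round-trips to Service Bus. dynamicConcurrencyEnabled allows the runtime to adapt concurrency based on resource usage. When dynamic concurrency is enabled, it generally takes precedence over static limits such as maxConcurrentCalls, so verify which setting is actually in effect for your trigger.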

Higher concurrency is useful when handlers are I/O-bound. Lower concurrency is safer for CPU-bound work or when downstream systems have connection limits.

3.3 Trigger Deep-Dives

Service Bus Trigger

For enterprise messaging, Service Bus is the most feature-rich trigger. In the isolated worker, use ServiceBusReceivedMessage for metadata access:

public class OrderHandler

{

[Function("ProcessOrder")]

public async Task Run(

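// AutoCompleteMessages = false: the handler settles the message explicitly below

[ServiceBusTrigger("orders", Connection = "ServiceBus", AutoCompleteMessages = false)]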

ServiceBusReceivedMessage message,

ServiceBusMessageActions messageActions)

{

var order = JsonSerializer.Deserialize<Order>(message.Body);

try

{

await ProcessAsync(order);

await messageActions.CompleteMessageAsync(message);

}

catch (Exception)

{

if (message.DeliveryCount > 5)

{

await messageActions.DeadLetterMessageAsync(message);

}

else

{

await messageActions.AbandonMessageAsync(message);

}

}

}

}

If you don’t need metadata, a simple string binding also works:

[Function("ProcessOrder")]

public Task Run(

[ServiceBusTrigger("orders", Connection = "ServiceBus")]

string payload)

{

var order = JsonSerializer.Deserialize<Order>(payload);

return ProcessAsync(order);

}

Use the richer model when you need delivery count, session ID, or advanced control.

Storage Queue Trigger

Azure Queue Storage is simpler and cheaper — ideal for high-volume, low-complexity jobs where you don’t need sessions, transactions, or FIFO guarantees:

[Function("ResizeImage")]

public async Task Run(

[QueueTrigger("images")] string blobName)

{

await ResizeAsync(blobName);

}

Event Hubs Trigger

Event Hubs is designed for high-throughput streaming. Checkpointing is handled automatically, but if the function fails mid-batch before checkpointing, the entire batch may be reprocessed — reinforcing the requirement for idempotent handlers:

[Function("ProcessEvents")]

public async Task Run(

[EventHubTrigger("events", Connection = "EventHub")]

EventData[] events)

{

foreach (var e in events)

{

await HandleEventAsync(e);

}

}

3.4 Durable Functions: Orchestration and Long-Running Workflows

Durable Functions solves scenarios that simple triggers cannot: long-running orchestrations, fan-out/fan-in parallel processing, human approval workflows, and chained stateful processes.

Instead of one long-running function, Durable breaks work into orchestrations and activities:

[Function("OrderOrchestrator")]

public async Task RunOrchestrator(

[OrchestrationTrigger] TaskOrchestrationContext context)

{

var order = context.GetInput<Order>();

await context.CallActivityAsync("ValidateOrder", order);

await context.CallActivityAsync("ChargePayment", order);

var items = await context.CallActivityAsync<List<Item>>(

"ReserveInventory", order);

await context.CallActivityAsync("SendConfirmation", order);

}

[Function("ValidateOrder")]

public Task ValidateOrder([ActivityTrigger] Order order)

{

return Task.CompletedTask;

}

Durable Functions automatically persists state between steps, replays orchestrations safely, survives host restarts, and supports timers and external events. Because the orchestration checkpoints between activities, the workflow as a whole is not bound by the Consumption timeout. Each individual activity still has to finish within it.

Use Durable Functions when your background job is a workflow, not a single step. For simple message handlers, standard triggers are sufficient.

4 Azure Container Apps Jobs: The Power of KEDA and Containers

ACA Jobs fill a specific gap in Azure’s background processing story. They are purpose-built for run-to-completion workloads where you want container-level control, predictable resources, and flexible scaling.

4.1 Apps vs. Jobs

Azure Container Apps supports two execution models:

- Container Apps (Apps) — continuously running services (APIs, daemons, workers)

- Container Apps Jobs — triggered, run-to-completion workloads

A job starts when triggered (manually, on schedule, or event-driven), runs a container, exits when work is complete, does not expose ingress, and does not remain running. Each execution is isolated with clean state — valuable for batch pipelines, ETL, reconciliation jobs, and ML processing.

4.2 Implementing Event-Driven Jobs with KEDA

ACA Jobs use KEDA internally. You configure them using ARM/Bicep templates or Azure CLI — not Kubernetes-native Job resources.

Example event-driven job triggered by Kafka:

az containerapp job create \

--name process-orders \

--resource-group rg \

--environment my-aca-env \

--trigger-type Event \

--replica-timeout 1800 \

--replica-retry-limit 3 \

--parallelism 5 \

--image myregistry.azurecr.io/order-processor:latest \

--registry-server myregistry.azurecr.io \

--scale-rule-name kafka-rule \

--scale-rule-type kafka \

--scale-rule-metadata bootstrapServers=kafka1:9092 topic=orders consumerGroup=order-group lagThreshold=500

Key parameters: --replica-timeout defines the maximum runtime per replica in seconds, --replica-retry-limit controls retries on failure, and --parallelism sets how many replicas run per execution.

A Realistic .NET Worker

In production, ACA Jobs typically consume from a broker such as Service Bus:

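using System.Text.Json;

using Azure.Messaging.ServiceBus;

// await using disposes the client and receiver when the job exits

await using var client = new ServiceBusClient(

Environment.GetEnvironmentVariable("ServiceBusConnection"));

await using var receiver = client.CreateReceiver("orders");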

while (true)

{

var message = await receiver.ReceiveMessageAsync(TimeSpan.FromSeconds(5));

if (message == null)

break; // No more work; exit container

try

{

var order = JsonSerializer.Deserialize<Order>(message.Body);

await ProcessAsync(order);

await receiver.CompleteMessageAsync(message);

}

catch

{

await receiver.AbandonMessageAsync(message);

}

}

The loop exits when no more messages are available, allowing the container to complete naturally. KEDA triggers additional executions when new messages accumulate.

4.3 Retry, Failure Behavior, and Resource Isolation

If a container exits with a non-zero code, ACA retries up to --replica-retry-limit. If retries are exhausted, the execution is marked failed. Your container must return proper exit codes — swallowing exceptions without failing the process hides errors.

ACA does not automatically move messages to dead-letter queues. That responsibility remains with your message broker. ACA manages container lifecycle; the broker manages message semantics.

For production systems, your worker must fail fast on unrecoverable errors, use explicit exit codes, and implement idempotency internally. Monitor execution history, track failure rates, and alert on repeated replica failures.
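
A minimal sketch of the exit-code contract, assuming a hypothetical JobRunner helper that wraps the job body: success maps to 0, while cancellation and unhandled failures map to non-zero codes so the platform records the execution as failed and applies the retry limit. The specific non-zero values are arbitrary choices for illustration.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

public static class JobRunner
{
    // Runs the job body and translates the outcome into a process exit code.
    public static async Task<int> RunAsync(
        Func<CancellationToken, Task> job, CancellationToken token = default)
    {
        try
        {
            await job(token);
            return 0; // success
        }
        catch (OperationCanceledException)
        {
            Console.Error.WriteLine("Job cancelled before completion.");
            return 2; // assumed convention for cancelled or interrupted runs
        }
        catch (Exception ex)
        {
            // Log and fail the process instead of swallowing the error.
            Console.Error.WriteLine($"Job failed: {ex}");
            return 1;
        }
    }
}
```

An entry point would then end with something like `return await JobRunner.RunAsync(ProcessBatchAsync);`, where ProcessBatchAsync stands for your own job body.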

GPU and Compute-Heavy Workloads

ACA supports GPU-backed container execution — critical for model inference, batch scoring, and data science pipelines. Use modern CUDA base images:

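# Build stage: dotnet publish needs the SDK, not just the runtime

FROM mcr.microsoft.com/dotnet/sdk:8.0 AS build

WORKDIR /src

COPY . .

RUN dotnet publish -c Release -o /out

# Runtime stage: CUDA base image plus the .NET runtime

FROM nvidia/cuda:12.2.0-runtime-ubuntu22.04

RUN apt-get update && apt-get install -y dotnet-runtime-8.0

WORKDIR /app

COPY --from=build /out .

CMD ["dotnet", "MyGpuWorker.dll"]

Inside .NET, enable GPU inference with ONNX Runtime: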

using Microsoft.ML.OnnxRuntime;

var options = new SessionOptions();

options.AppendExecutionProvider_CUDA();

using var session = new InferenceSession("model.onnx", options);

var input = BuildInputTensor(data);

using var results = session.Run(

new[] { NamedOnnxValue.CreateFromTensor("input", input) });

GPU resources are configured during job creation:

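az containerapp job create \

--name gpu-batch-job \

--resource-group rg \

--environment my-aca-env \

--trigger-type Manual \

--image myregistry.azurecr.io/gpu-worker:latest \

--workload-profile-name <gpu-profile-name> \

--cpu 4 \

--memory 16Gi

GPU capacity comes from a GPU workload profile defined on the Container Apps environment, shown here as the placeholder <gpu-profile-name>. Available GPU profile types vary by region; always verify against current ACA documentation.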

4.4 Network Security and Private Connectivity

Networking for ACA Jobs is defined at the Container Apps Environment level. The environment deploys into a VNet, and all jobs inherit that network configuration:

- ACA Environment in a private subnet

- Private endpoints for SQL, Storage, or Service Bus

- Managed Identity for secret access (no plain-text connection strings)

- Controlled egress routes

using Azure.Identity;

using Azure.Security.KeyVault.Secrets;

var credential = new DefaultAzureCredential();

var client = new SecretClient(

new Uri("https://myvault.vault.azure.net/"),

credential);

var secret = await client.GetSecretAsync("SqlConnectionString");

Network isolation is environment-scoped. Identity is workload-scoped. This makes ACA Jobs suitable for regulated environments where connectivity must remain private.

5 Azure WebJobs: Legacy Stability and Pragmatic Migration

Azure WebJobs are no longer the default choice for new background processing systems. But they remain widely used in enterprise environments running on App Service. The key is understanding where they make sense — and where they don’t.

5.1 Why WebJobs Remain Relevant

Many organizations still operate ASP.NET applications on Azure App Service that share deployment pipelines, configuration, and infrastructure between web and background components. For these systems, WebJobs remain a low-friction option:

- They reuse existing App Service infrastructure

- They deploy together with the web app

- They inherit the same identity and configuration

WebJobs are reasonable when the application lifecycle is limited, background load is predictable, and the organization wants incremental modernization — not wholesale migration.

5.2 Implementing WebJobs in Modern .NET

With the modern WebJobs SDK (v3+), you use HostBuilder:

using Microsoft.Extensions.Hosting;

using Microsoft.Azure.WebJobs;

var host = new HostBuilder()

.ConfigureWebJobs(builder =>

{

builder.AddAzureStorageCoreServices();

builder.AddAzureStorageQueues();

})

.Build();

await host.RunAsync();

public class Functions

{

public static async Task ProcessImage(

[QueueTrigger("images")] string blobName)

{

await ResizeAsync(blobName);

}

}

The SDK polls the queue, delivers messages, and handles retries via queue semantics, a pattern that closely resembles early Azure Functions.

Timer-Triggered WebJob

For scheduled background work, use the SDK’s timer trigger:

using Microsoft.Extensions.Hosting;

using Microsoft.Azure.WebJobs;

var host = new HostBuilder()

.ConfigureWebJobs(builder =>

{

builder.AddAzureStorageCoreServices();

builder.AddTimers();

})

.Build();

await host.RunAsync();

public class ScheduledTasks

{

public static Task RunCleanup(

[TimerTrigger("0 */5 * * * *")] TimerInfo timer)

{

return CleanupAsync();

}

}

This is the proper SDK-based pattern for scheduled work. A raw while(true) loop is technically possible but bypasses the SDK’s lifecycle and trigger handling.

5.3 The “Noisy Neighbor” Problem

WebJobs share compute resources with the web application. If a background job spikes CPU during a large queue backlog, API response times degrade. To compensate, you scale the entire App Service Plan — paying for increased web capacity when you only needed more background capacity.

Imagine this scenario: your API runs on an S1 App Service Plan (~1.75 GB RAM, 1 core). A background WebJob starts processing a large queue backlog. CPU spikes. API response times increase. To compensate, you scale from S1 to S3, paying significantly more — but the WebJob only needed an extra half-core for a short burst.

There is no independent scale-out for WebJobs. Both the web app and WebJob scale together, leading to resource contention, unpredictable latency, and over-provisioning costs. For steady, light workloads, this is acceptable. For bursty or heavy workloads, it becomes operationally painful.

5.4 When to Migrate — and When Not To

Migrate to Functions or ACA Jobs when:

- Background processing impacts web performance

- You need independent scaling

- Execution times exceed comfortable App Service limits

Migrating a queue-triggered WebJob to Azure Functions is often straightforward:

public class ProcessImage

{

[Function("ProcessImage")]

public Task Run(

[QueueTrigger("images")] string blobName)

{

return ResizeAsync(blobName);

}

}

The business logic remains intact. The hosting model changes.

Leave WebJobs in place when:

- Workloads are predictable and lightweight

- The application has a short remaining lifespan

- Operational risk of migration outweighs the benefit

The practical approach: migrate high-impact workloads first, leave stable low-volume jobs in place, and avoid rewriting systems near end-of-life.

6 Engineering for Resilience: Idempotency and Error Handling

Background jobs fail. Networks glitch. Containers restart. Messages get delivered twice. The difference between a robust system and a fragile one is how you design for these realities.

6.1 Why Every Background Job Must Be Idempotent

Most Azure messaging systems guarantee at-least-once delivery. A message may be delivered more than once because a handler crashes before completing, a container restarts mid-execution, or a transient failure prevents acknowledgment.

If your handler is not idempotent, duplicates cause real damage: payments charged twice, inventory decremented multiple times, duplicate database rows.

Idempotency means processing the same message twice produces the same final state as processing it once.
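
As a concrete illustration, the sketch below applies an inventory decrement at most once per message ID. The InventoryLedger type and its in-memory set are assumptions for illustration only; a real system would persist the processed IDs durably.

```csharp
using System.Collections.Generic;

// Illustrative in-memory ledger; production code would persist processed IDs.
public sealed class InventoryLedger
{
    private readonly HashSet<string> _processed = new();

    public int Stock { get; private set; }

    public InventoryLedger(int initialStock) => Stock = initialStock;

    // Applies the decrement only the first time a given message ID is seen.
    // Returns true if the message changed state, false if it was a duplicate.
    public bool Handle(string messageId, int quantity)
    {
        if (!_processed.Add(messageId))
            return false; // duplicate delivery: state is left untouched

        Stock -= quantity;
        return true;
    }
}
```

Handling message "m-1" with quantity 3 twice against an initial stock of 10 leaves Stock at 7, not 4.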

6.2 Implementing Idempotent Consumers

Deduplication with Unique Constraints

Persist processed message IDs with a unique constraint. The critical detail is wrapping both the claim and business logic in a single transaction:

public async Task HandleAsync(EventMessage message)

{

using var tx = await _db.Database.BeginTransactionAsync();

var record = new ProcessedMessage

{

MessageId = message.Id,

ProcessedAtUtc = DateTime.UtcNow

};

_db.ProcessedMessages.Add(record);

try

{

await _db.SaveChangesAsync(); // claim the message

}

catch (DbUpdateException)

{

// Duplicate MessageId -> already processed

return;

}

await PerformBusinessLogicAsync(message);

await tx.CommitAsync();

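}

If two instances process the same message concurrently, only one succeeds due to the unique constraint. If business logic fails, the transaction rolls back and the message is not marked as processed. This holds only for work written through the same database transaction; side effects outside it, such as an email or an HTTP call, are not rolled back.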

The Transactional Outbox Pattern

When your background job both updates state and publishes a message, you must avoid the failure scenario where the database updates but the message never sends (or vice versa).

The Transactional Outbox stores outbound messages in the same transaction as business state:

using var tx = await _db.Database.BeginTransactionAsync();

_db.Orders.Add(order);

_db.OutboxMessages.Add(new OutboxMessage

{

Payload = JsonSerializer.Serialize(order),

Type = "OrderCreated",

CreatedAtUtc = DateTime.UtcNow,

Sent = false

});

await _db.SaveChangesAsync();

await tx.CommitAsync();

A separate publisher process reads unsent outbox messages and publishes them:

var messages = await _db.OutboxMessages

.Where(m => !m.Sent)

.OrderBy(m => m.Id)

.ToListAsync();

foreach (var msg in messages)

{

await _bus.PublishAsync(msg.Payload);

msg.Sent = true;

}

await _db.SaveChangesAsync();

If the publisher crashes after PublishAsync but before SaveChangesAsync, the message will be published again. The outbox guarantees at-least-once delivery, so downstream consumers must still be idempotent. The outbox pattern trades complexity for strong consistency between database state and emitted events. For distributed background systems, that trade-off is often worth it.

6.3 Retry Patterns

Retries must be deliberate. Blind retries amplify failures.

Polly v8 for Exponential Backoff and Circuit Breakers

var pipeline = new ResiliencePipelineBuilder()

.AddRetry(new RetryStrategyOptions

{

MaxRetryAttempts = 5,

BackoffType = DelayBackoffType.Exponential,

Delay = TimeSpan.FromSeconds(1)

})

.AddCircuitBreaker(new CircuitBreakerStrategyOptions

{

FailureRatio = 0.5,

SamplingDuration = TimeSpan.FromSeconds(30),

MinimumThroughput = 10,

BreakDuration = TimeSpan.FromSeconds(30)

})

.Build();

await pipeline.ExecuteAsync(async token =>

{

await CallExternalApiAsync(token);

});

Use retries for transient failures. Use circuit breakers to prevent overwhelming downstream systems. In high-concurrency scenarios, retries without backoff easily cause cascading failures.
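
To make the backoff concrete, the following sketch computes the nominal delay sequence for an exponential policy with a 1-second base delay and 5 attempts, matching the retry options above. It is a plain arithmetic illustration under the assumption of simple doubling, not Polly's internal implementation; Polly can additionally apply jitter and a maximum delay. The Backoff helper is hypothetical.

```csharp
using System;
using System.Collections.Generic;

public static class Backoff
{
    // Nominal exponential schedule: baseDelay * 2^attempt for attempt = 0..maxAttempts-1.
    public static IReadOnlyList<TimeSpan> Delays(TimeSpan baseDelay, int maxAttempts)
    {
        var delays = new List<TimeSpan>(maxAttempts);
        for (var attempt = 0; attempt < maxAttempts; attempt++)
        {
            delays.Add(TimeSpan.FromTicks(baseDelay.Ticks * (1L << attempt)));
        }
        return delays;
    }
}
```

Backoff.Delays(TimeSpan.FromSeconds(1), 5) yields 1, 2, 4, 8, and 16 seconds, so a call that exhausts all retries has waited roughly 31 seconds in total before the failure surfaces. That number matters when it has to fit inside a message lock duration or a function timeout.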

Dead-Letter Queues and Poison Messages

Service Bus supports MaxDeliveryCount. Once exceeded, the message automatically moves to the dead-letter queue. Configure this at the queue level (e.g., 5 or 10 attempts) and let your handler focus on business logic rather than duplicating built-in retry behavior.

For custom handling — such as routing failed messages for manual review:

catch (Exception ex)

{

await _reviewTopicSender.SendMessageAsync(

new ServiceBusMessage(JsonSerializer.Serialize(new

{

OriginalMessageId = message.MessageId,

Error = ex.Message

})));

await messageActions.CompleteMessageAsync(message);

}

Use DLQs for system-level retry exhaustion. Use custom routing when business context requires domain-specific review.

6.4 Graceful Shutdown and Cancellation

All three compute models support cancellation tokens. When the platform scales down or restarts, your code must respect cancellation:

[Function("ProcessOrder")]

public async Task Run(

[ServiceBusTrigger("orders")] ServiceBusReceivedMessage message,

CancellationToken cancellationToken)

{

cancellationToken.ThrowIfCancellationRequested();

await ProcessAsync(message, cancellationToken);

}

In ACA Jobs, handle SIGTERM explicitly:

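using System.Runtime.InteropServices;

using var cts = new CancellationTokenSource();

// Shutdown arrives as SIGTERM; Console.CancelKeyPress only covers Ctrl+C (SIGINT).

using var sigterm = PosixSignalRegistration.Create(PosixSignal.SIGTERM, context =>

{

context.Cancel = true; // suppress immediate termination so work can stop cleanly

cts.Cancel();

});

await ProcessBatchAsync(cts.Token);

Why this matters: if shutdown happens mid-transaction, you want rollback, not partial writes. If processing large batches, you want to checkpoint safely. Ignoring cancellation leads to half-completed operations and inconsistent state.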

Resilient background processing is not about a single retry policy or a single idempotency table. It is about designing every layer — message handling, state persistence, retries, shutdown — to assume failure is normal.

7 Using Open-Source Frameworks with Azure Background Systems

Azure provides strong primitives, but many teams prefer higher-level messaging frameworks that standardize message handling, retries, and testing patterns. The goal is not to replace Azure-native features, but to make job logic cleaner, more testable, and more portable.

7.1 MassTransit and Rebus: Abstracting Azure Service Bus

MassTransit and Rebus sit on top of transports like Azure Service Bus. Instead of writing trigger-bound logic directly, you define transport-agnostic consumers.

MassTransit Consumer

public class OrderSubmittedConsumer : IConsumer<OrderSubmitted>

{

public async Task Consume(ConsumeContext<OrderSubmitted> context)

{

await ProcessOrderAsync(context.Message);

}

}

Configuration:

services.AddMassTransit(x =>

{

x.AddConsumer<OrderSubmittedConsumer>();

x.UsingAzureServiceBus((context, cfg) =>

{

cfg.Host(configuration["ServiceBusConnection"]);

cfg.ReceiveEndpoint("orders", e =>

{

e.ConfigureConsumer<OrderSubmittedConsumer>(context);

});

});

});

Running MassTransit Inside Azure Functions

MassTransit has an Azure Functions integration package. You still declare a thin Service Bus trigger function, but it only forwards each message to MassTransit's IMessageReceiver, while consumers are registered once in the isolated worker's startup:

builder.Services.AddMassTransitForAzureFunctions(cfg =>

{

cfg.AddConsumer<OrderSubmittedConsumer>();

},

// Name of the configuration setting that holds the Service Bus connection string.

"ServiceBusConnection");

This approach gives you consistent consumer patterns across Functions and container workers, built-in saga support, and integration test harnesses.

Rebus

Rebus emphasizes simplicity and embeds easily in ACA Jobs, WebJobs, console workers, or Azure Functions:

public class OrderSubmittedHandler : IHandleMessages<OrderSubmitted>

{

public async Task Handle(OrderSubmitted message)

{

await ProcessOrderAsync(message);

}

}

Wolverine (by Jeremy Miller) is also gaining traction as a modern alternative, with mediator-style message handling and deep ASP.NET Core integration.

The key takeaway: these frameworks decouple business logic from Azure trigger implementations, improving testability and portability across compute models.
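To illustrate the testability point, the sketch below keeps the business logic in a plain class that a MassTransit consumer, a Rebus handler, or a Functions trigger could each delegate to. All names here (`IOrderStore`, `OrderSubmittedProcessor`, `InMemoryOrderStore`) are hypothetical and exist only for illustration; the `OrderSubmitted` shape is assumed.

```csharp
using System.Collections.Generic;
using System.Threading.Tasks;

// Assumed message shape for illustration.
public record OrderSubmitted(string OrderId);

// Hypothetical persistence abstraction.
public interface IOrderStore
{
    Task MarkSubmittedAsync(string orderId);
}

// Business logic: no Service Bus, Functions, or framework types.
public class OrderSubmittedProcessor
{
    private readonly IOrderStore _store;

    public OrderSubmittedProcessor(IOrderStore store) => _store = store;

    public Task ProcessAsync(OrderSubmitted message) =>
        _store.MarkSubmittedAsync(message.OrderId);
}

// In-memory fake so the logic can be tested without any transport.
public class InMemoryOrderStore : IOrderStore
{
    public List<string> Submitted { get; } = new();

    public Task MarkSubmittedAsync(string orderId)
    {
        Submitted.Add(orderId);
        return Task.CompletedTask;
    }
}
```

A framework-specific consumer then shrinks to a one-line call into `ProcessAsync`, and a unit test only needs the in-memory store.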

7.2 Hangfire: When You Need a Database-Backed Scheduler

Hangfire is not event-driven — it is a persistent background job scheduler backed by SQL Server or Redis. It is useful when you need scheduled or delayed jobs, a dashboard for job inspection, and identical behavior locally and in Azure:

builder.Services.AddHangfire(config =>

config.UseSqlServerStorage(

configuration["HangfireConnection"]));

builder.Services.AddHangfireServer();

// Enqueue

BackgroundJob.Enqueue<EmailService>(

s => s.SendWelcomeEmailAsync("123"));

// Recurring

RecurringJob.AddOrUpdate<EmailService>(

"daily-summary",

s => s.SendWelcomeEmailAsync("admin"),

Cron.Daily);

Note that advanced features like Redis storage are part of Hangfire Pro (paid). Hangfire is not ideal for high-throughput event streaming; it is best suited for scheduled or business workflow-style tasks.

7.3 Distributed Locking: Coordinating Multiple Job Instances

When multiple job instances run concurrently, race conditions become real. You may need to ensure only one cleanup job runs at a time, or a single coordinator controls shared state.

Redis-Based Lock

Using StackExchange.Redis for a simple single-instance lock:

var db = redis.GetDatabase();

var lockKey = "lock:daily-cleanup";

var lockToken = Guid.NewGuid().ToString();

var acquired = await db.LockTakeAsync(

lockKey, lockToken, TimeSpan.FromSeconds(30));

if (!acquired)

return;

try

{

await RunCleanupAsync();

}

finally

{

await db.LockReleaseAsync(lockKey, lockToken);

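}

Note: this is not the Redlock algorithm, which requires multiple independent Redis instances. For many applications, a single-instance lock is sufficient. For stronger distributed guarantees, use a Redlock implementation library. Also note that the lock expires after 30 seconds: if RunCleanupAsync can run longer, another instance may acquire the lock mid-run, so choose an expiry that covers the job or extend the lock periodically with LockExtendAsync.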

Durable Entities as a Coordinator

Durable Entities in Azure Functions provide serialized execution per entity instance — an implicitly serialized coordinator:

public class BatchCoordinator

{

public bool IsRunning { get; set; }

public void Start()

{

if (IsRunning)

throw new InvalidOperationException("Already running");

IsRunning = true;

}

public void Complete() => IsRunning = false;

}

Each entity instance processes operations sequentially, giving you lock-like behavior without manual distributed lock code. Use Redis locks when outside the Durable ecosystem; use Durable Entities when already inside Azure Functions.

7.4 The Saga Pattern for Multi-Step Workflows

Sagas coordinate long-running, multi-step processes without distributed transactions. MassTransit provides built-in saga state machines:

public class OrderState : SagaStateMachineInstance

{

public Guid CorrelationId { get; set; }

public string CurrentState { get; set; } = default!;

public bool PaymentCompleted { get; set; }

}

public class OrderStateMachine : MassTransitStateMachine<OrderState>

{

public State AwaitingPayment { get; private set; }

public State Completed { get; private set; }

public Event<PaymentCompleted> PaymentCompletedEvent { get; private set; }

public OrderStateMachine()

{

InstanceState(x => x.CurrentState);

Event(() => PaymentCompletedEvent);

During(AwaitingPayment,

When(PaymentCompletedEvent)

.ThenAsync(async context =>

{

await UpdateInventoryAsync(context.Instance);

})

.TransitionTo(Completed));

}

}

Saga state persists in SQL Server, Cosmos DB, or Azure Table Storage. If the host crashes, the saga resumes from persisted state. This pattern is powerful for order fulfillment, subscription lifecycle management, and multi-step financial transactions.

The core idea: Azure provides the infrastructure. Frameworks like MassTransit, Rebus, Wolverine, and Hangfire provide higher-level structure. Use them when your background system grows beyond simple queue handlers into a distributed workflow engine.

8 Operational Excellence and Observability

Background jobs rarely have a UI. When something goes wrong, there is no user staring at a spinner. That makes observability critical. If you cannot quickly answer which jobs are failing, how long they take, whether backlog is growing, and whether retries are masking deeper failures — you do not have sufficient visibility.

8.1 OpenTelemetry for Cross-Service Tracing

OpenTelemetry (OTEL) provides a vendor-neutral way to capture traces, metrics, and logs. In modern .NET, it should be the primary observability entry point.

Basic Setup

builder.Services.AddOpenTelemetry()

.WithTracing(tracing =>

{

tracing

.AddSource("BackgroundJobs")

.AddAzureMonitorTraceExporter();

})

.WithMetrics(metrics =>

{

metrics

.AddRuntimeInstrumentation()

.AddAzureMonitorMetricExporter();

});

Inside your job, create structured traces:

private static readonly ActivitySource ActivitySource =

new("BackgroundJobs");

using var activity = ActivitySource.StartActivity("ProcessOrder");

activity?.SetTag("job.name", "OrderProcessor");

activity?.SetTag("order.id", order.Id);

await ProcessAsync(order);

Propagating Trace Context Through Queues

The hardest part of distributed tracing in background systems is propagation across message brokers. Without this step, traces stop at the queue boundary.

When publishing:

using var activity = ActivitySource.StartActivity("PublishOrder");

var message = new ServiceBusMessage(

JsonSerializer.Serialize(order));

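if (activity != null)

{

message.ApplicationProperties["traceparent"] = activity.Id;

// tracestate is optional; attach it only when the activity carries one.

if (!string.IsNullOrEmpty(activity.TraceStateString))

message.ApplicationProperties["tracestate"] = activity.TraceStateString;

}

await sender.SendMessageAsync(message);

On the consumer side: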

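// Start from an empty parent so messages without trace context are still processed.

ActivityContext parentContext = default;

if (message.ApplicationProperties.TryGetValue("traceparent", out var traceParent))

{

// tracestate may be absent; TryGetValue avoids a KeyNotFoundException.

message.ApplicationProperties.TryGetValue("tracestate", out var traceState);

ActivityContext.TryParse(traceParent?.ToString(), traceState?.ToString(), out parentContext);

}

using var activity = ActivitySource.StartActivity(

"ProcessOrder",

ActivityKind.Consumer,

parentContext);

await ProcessAsync(order);

With trace propagation, you get true end-to-end distributed tracing across services.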
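The propagation mechanics can be checked locally without a broker. The sketch below is an illustration under assumptions, not the article's code: a dictionary stands in for `ServiceBusMessage.ApplicationProperties`, the source name `BackgroundJobs.Demo` and the class `TracePropagationDemo` are hypothetical, and an `ActivityListener` is registered by hand because no OpenTelemetry SDK is configured here.

```csharp
using System;
using System.Collections.Generic;
using System.Diagnostics;

public static class TracePropagationDemo
{
    private static readonly ActivitySource Source = new("BackgroundJobs.Demo");

    // Returns the trace id seen by the publisher and by the consumer.
    public static (string PublisherTraceId, string ConsumerTraceId) RoundTrip()
    {
        // Without a listener, StartActivity returns null.
        using var listener = new ActivityListener
        {
            ShouldListenTo = source => source.Name == Source.Name,
            Sample = (ref ActivityCreationOptions<ActivityContext> options) =>
                ActivitySamplingResult.AllDataAndRecorded
        };
        ActivitySource.AddActivityListener(listener);

        // Stand-in for the message's application properties.
        var headers = new Dictionary<string, object>();

        string publisherTraceId;
        using (var publish = Source.StartActivity("PublishOrder", ActivityKind.Producer))
        {
            headers["traceparent"] = publish!.Id!; // W3C traceparent string
            publisherTraceId = publish.TraceId.ToString();
        }

        // Consumer side: rebuild the parent context from the header.
        ActivityContext parent = default;
        if (headers.TryGetValue("traceparent", out var traceParent))
        {
            ActivityContext.TryParse(traceParent.ToString(), null, out parent);
        }

        using var process = Source.StartActivity(
            "ProcessOrder", ActivityKind.Consumer, parent);

        return (publisherTraceId, process!.TraceId.ToString());
    }
}
```

Both values returned by `RoundTrip()` should be the same trace id, which is what lets a tracing backend stitch the publish and process spans into one trace.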

8.2 Structured Logging with ILogger

In modern .NET with OTEL, structured logging via ILogger<T> should be preferred over direct TelemetryClient usage:

_logger.LogInformation(

"Processing order {OrderId} for tenant {TenantId}",

order.Id,

order.TenantId);

The message template properties become structured fields in Azure Monitor, making Kusto queries far more powerful for diagnosis and analysis.

8.3 Monitoring Silent Failures

Silent failures are dangerous — a job may appear healthy while backlog grows quietly. Key signals to monitor:

- Service Bus ActiveMessages and DeadletteredMessages

- Function execution failures

- ACA Job execution failures and container exit codes

Example CLI-based alert for DLQ growth:

az monitor metrics alert create \
--name dlq-alert \
--resource-group rg \
--scopes /subscriptions/{subId}/resourceGroups/rg/providers/Microsoft.ServiceBus/namespaces/ns \
--condition "avg DeadletteredMessages > 10" \
--window-size 5m \
--evaluation-frequency 1m \
--action my-action-group

Alerting should be based on trends, not single failures. One retry is normal. Sustained failure is not.

8.4 Dashboarding: Duration, Throughput, and Cost

Record job duration explicitly as a custom metric:

var sw = Stopwatch.StartNew();

await ProcessAsync(order);

sw.Stop();

telemetryClient.TrackMetric(

"JobDurationMs",

sw.ElapsedMilliseconds,

new Dictionary<string, string>

{

{ "JobName", "OrderProcessor" }

});

Query in Kusto:

customMetrics

| where name == "JobDurationMs"

| summarize avgDuration = avg(value),

p95 = percentile(value, 95)

by bin(timestamp, 1h)

For cost estimation: Functions use FunctionExecutionUnits; ACA Jobs multiply CPU/memory allocation by execution duration. Architects need visibility into cost per workload, not just total monthly spend.
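The ACA Jobs calculation (allocation multiplied by execution duration) can be sketched as a small helper. This is a rough estimate under assumptions: `AcaJobCostEstimator` is a hypothetical name, the per-second rates are deliberately parameters because real prices vary by region and plan, and free grants or other charges are ignored.

```csharp
using System;

public static class AcaJobCostEstimator
{
    // Estimates compute cost as allocation x duration x executions x unit rate.
    // Rates are inputs on purpose: look up current prices for your region.
    public static decimal Estimate(
        decimal vCpu,
        decimal memoryGiB,
        decimal durationSeconds,
        long executions,
        decimal ratePerVCpuSecond,
        decimal ratePerGiBSecond)
    {
        var totalSeconds = durationSeconds * executions;
        var cpuCost = vCpu * totalSeconds * ratePerVCpuSecond;
        var memoryCost = memoryGiB * totalSeconds * ratePerGiBSecond;
        return cpuCost + memoryCost;
    }
}
```

For example, with placeholder rates of 0.00002 per vCPU-second and 0.000003 per GiB-second, a job allocated 0.5 vCPU and 1 GiB that runs 120 seconds, 1,000 times a month, comes to 1.20 + 0.36 = 1.56 in whatever currency the rates use. Tagging each job's executions with its name lets you compute this per workload.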

8.5 Health Checks for Background Systems

Health checks are straightforward for web apps but less obvious for background jobs.

For Container Apps or WebJobs with an HTTP endpoint:

builder.Services.AddHealthChecks()

.AddCheck("database", () =>

{

return _db.CanConnect()

? HealthCheckResult.Healthy()

: HealthCheckResult.Unhealthy();

});

app.MapHealthChecks("/health");

For Azure Functions (which don’t expose traditional health endpoints), use Application Insights availability tests, monitor execution metrics, or emit custom heartbeat metrics.

For ACA Jobs (run-to-completion), monitor execution success/failure rates and alert on repeated non-zero exit codes. Health in background systems is less about HTTP endpoints and more about consistent execution patterns.
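The "repeated non-zero exit codes" rule can be expressed as a small check over recent execution history. This is a minimal sketch: `JobHealth` is a hypothetical helper, and how the exit codes are collected (for example, from execution history or logs) is assumed to happen elsewhere.

```csharp
using System;
using System.Collections.Generic;

public static class JobHealth
{
    // Counts how many of the most recent executions failed in a row.
    // exitCodes is ordered oldest to newest; 0 means success.
    public static int TrailingFailures(IReadOnlyList<int> exitCodes)
    {
        var count = 0;
        for (var i = exitCodes.Count - 1; i >= 0 && exitCodes[i] != 0; i--)
        {
            count++;
        }
        return count;
    }

    // Alerts only on sustained failure, not on a single failed run.
    public static bool ShouldAlert(IReadOnlyList<int> exitCodes, int threshold) =>
        TrailingFailures(exitCodes) >= threshold;
}
```

With a threshold of 3, a history of `0, 1, 0, 1, 1, 1` triggers an alert, while `1, 1, 0, 1` does not, because the most recent run of failures is only one execution long.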

Operational excellence for background jobs is not optional. Durable messaging and scaling mean little if you cannot see what is happening. Structured logging, trace propagation, explicit metrics, and real alert rules turn background processing from a black box into an observable system you can trust.