1 The Strategic Foundation: The Hybrid Compute Spectrum

Mobile AI systems now have three realistic places where computation can run: the cloud, the edge (MEC), and directly on the device. Each tier comes with different trade-offs around latency, privacy, cost, and reliability. The question for architects is no longer “Can our model run on mobile hardware?” — because it often can — but “Which workloads belong on-device, and which should stay off-device?” This section explains how to think about those decisions and why they matter for performance, user experience, and compliance.

1.1 The Three-Tier Architecture: Cloud vs. Edge (MEC) vs. On-Device

Most modern mobile AI applications distribute their workloads across all three tiers. Heavy or shared tasks may live in the cloud, low-latency regional tasks run at the edge, and privacy-sensitive or instant-response features execute locally on the device.

1.1.1 Cloud: Infinite scale, high latency, privacy risks

The cloud remains the most capable environment for large-scale AI workloads. It provides the compute needed for:

- Training and fine-tuning foundation models

- Running very large RAG pipelines over massive datasets

- Executing high-parameter LLMs (70B–400B)

- Experimentation, evaluation, and A/B testing

But cloud inference also introduces latency. Typical round trips fall between 100 and 300 ms, and real-world mobile networks regularly add jitter on top. There are privacy and compliance concerns as well: whenever user data leaves the device, the system inherits responsibilities around:

- Handling PII and PHI correctly

- Meeting GDPR/CCPA or regional data laws

- Managing API costs as usage scales

Cloud is powerful, but relying on it for everything hurts responsiveness and creates ongoing operational cost.

1.1.2 The Edge (5G/MEC): The middle ground

Multi-access edge compute sits physically closer to the user, usually inside the carrier network. This reduces latency to under 10 ms, which makes certain workloads possible:

- Real-time gaming AI with strict latency bounds

- Shared augmented reality scenes

- Processing streams from large numbers of IoT sensors

- Vehicle-to-infrastructure safety or routing systems

Edge compute is also helpful when regulations require data to stay within a specific region.

However, MEC still cannot solve:

- Offline usage

- Absolute privacy requirements

- Contextual personalization that lives exclusively on the user’s device

It sits in the middle: faster than the cloud, broader than the device, but limited by network dependency.

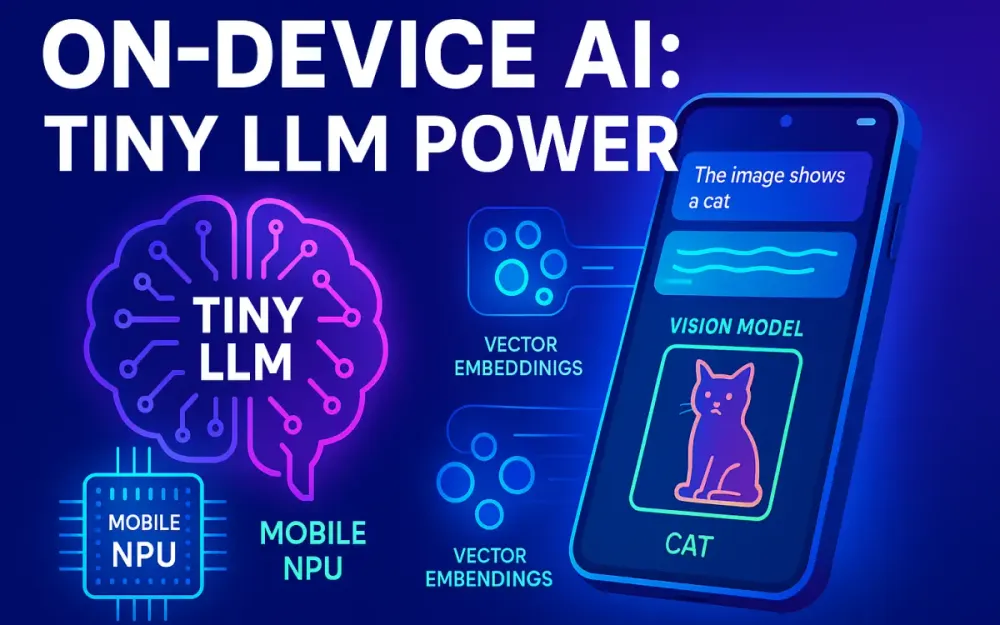

1.1.3 On-Device (The Focus): Zero latency, offline capability, absolute privacy

Running AI directly on the device removes the network from the equation. Tiny LLMs and mobile-first vision models now run in real time, often achieving:

- <5 ms latency for vision tasks

- <20 ms per token on current NPUs

This enables:

- Live translation and transcription

- Biometric and identity verification

- Privacy-preserving voice assistants

- Notification and message summarization even when offline

Because data never leaves the device:

- Privacy exposure is drastically reduced

- GDPR/CCPA compliance becomes simpler

- Per-inference cloud costs disappear

The trade-offs are different: you’re now constrained by the phone’s heat, memory, and battery. Designing within those limits is what makes on-device AI challenging and interesting.

1.2 The Decision Matrix: When to Move Workloads to the Device

Not every workload belongs on the device. Some are too large, some too complex, and some too energy-intensive. A structured decision framework helps determine the right place to run each feature.

1.2.1 Cost per Inference: API cost vs. battery cost

Cloud inference has a direct financial cost — every request uses tokens and hits a billable API. On-device inference doesn’t cost money, but it does cost battery and sometimes introduces heat that can slow the system down.

A rough comparison:

- Cloud inference for a 3B model: ~$0.00003–$0.0001 per request

- On-device inference for the same: ~5–12 joules per 200-token output

For features users trigger many times a day — autocomplete, summaries, simple Q&A — on-device execution is usually more efficient and much cheaper overall. Heavy, occasional, or long-context tasks may still favor the cloud.
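The comparison can be turned into a quick back-of-the-envelope calculation. The sketch below uses the upper-bound figures quoted above; the 15 Wh battery capacity and the 200 requests per day are assumptions chosen for illustration, not measurements.

```python
def cloud_cost_per_day(requests_per_day: int, usd_per_request: float) -> float:
    """Daily API spend for one user."""
    return requests_per_day * usd_per_request


def battery_share_per_day(requests_per_day: int, joules_per_request: float,
                          battery_wh: float = 15.0) -> float:
    """Fraction of one full charge consumed by on-device inference."""
    battery_joules = battery_wh * 3600.0  # 1 Wh = 3600 J
    return requests_per_day * joules_per_request / battery_joules


requests = 200  # assumed usage for a frequently triggered feature
cloud_usd = cloud_cost_per_day(requests, 0.0001)   # upper cloud estimate
battery = battery_share_per_day(requests, 12.0)    # upper on-device estimate
print(f"cloud: ${cloud_usd:.4f}/day, battery: {battery:.1%} of a charge")
```

Under these assumptions the cloud bill is small per user but scales linearly with the user base, while the on-device cost is a few percent of one charge and never reaches the developer's invoice.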

1.2.2 Privacy & Compliance: GDPR/CCPA implications

Cloud-based AI must treat user input as potentially sensitive data. This triggers:

- Data processing agreements

- Retention and deletion policies

- Documentation for audits

- Limits on how data moves across regions

On-device execution avoids most of these concerns because the data never leaves the device. This is why many enterprises now require:

- Local embeddings

- Local vector stores

- Local generation for personal or confidential content

It reduces compliance overhead and lowers risk — both legal and reputational.

1.2.3 Availability: The “Tunnel Problem”

Mobile connectivity is unpredictable. Users move between elevators, subways, planes, parking garages, and rural dead zones. Any AI feature that fails simply because the network is unavailable creates a broken experience.

Architects solve for this by ensuring:

- Local fallback models are always available

- A routing system decides whether to run locally or in the cloud

- Cloud offloading happens opportunistically, not by default

A well-designed system never makes the user wait for connectivity to do something simple.
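A routing layer of this kind can be very small. The following is a minimal sketch, assuming a hypothetical `Request` type and a 2048-token local context limit; real routers would also weigh battery level, thermal state, and model availability.

```python
from dataclasses import dataclass
from enum import Enum


class Target(Enum):
    LOCAL = "local"
    CLOUD = "cloud"


@dataclass
class Request:
    prompt_tokens: int
    contains_personal_data: bool


def route(req: Request, online: bool, local_context_limit: int = 2048) -> Target:
    """Local by default; cloud only when it is both possible and necessary."""
    # Offline or privacy-sensitive requests always stay on the device.
    # (An oversized offline prompt would need truncation before local inference.)
    if not online or req.contains_personal_data:
        return Target.LOCAL
    # Offload only when the prompt exceeds what the local model can hold.
    if req.prompt_tokens > local_context_limit:
        return Target.CLOUD
    return Target.LOCAL
```

The default branch returns `Target.LOCAL`, which reflects the "opportunistic, not default" offloading rule: the cloud is an exception path, never a prerequisite.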

1.3 The Rise of the NPU (Neural Processing Unit)

The main reason on-device AI is becoming practical is the rise of NPUs — specialized AI accelerators inside modern smartphones. They handle matrix-heavy operations far more efficiently than CPUs or GPUs.

1.3.1 Understanding the heterogeneous compute triangle

Each compute unit plays a different role:

- CPU – general-purpose but inefficient for ML

- GPU – strong at parallel math, historically popular for vision

- NPU – optimized for quantized inference (INT8, INT4), extremely power-efficient

NPUs allow mobile devices to run LLMs and multimodal models at speeds that would have been impossible a few years ago. They reduce power consumption, manage tensor operations efficiently, and offload work from the CPU and GPU.

1.3.2 Current Hardware Landscape

Most flagship devices now ship with dedicated AI cores:

| Platform | Accelerator | Peak Performance |
|---|---|---|
| Apple A17 Pro / M-series | ANE | ~35 TOPS |
| Snapdragon 8 Gen 3 | Hexagon NPU | ~45 TOPS |
| MediaTek Dimensity 9300 | APU 790 | ~60 TOPS |
| Google Tensor G3 | TPU-like NPU | ~25 TOPS |

These accelerators make it possible to run Tiny LLMs, perform vision tasks in real time, and build fully private on-device RAG systems. They are the foundation of the next generation of mobile AI experiences.

2 The Hardware Reality: Constraints and Budgets

On-device AI is powerful, but it has limits. Phones operate inside strict physical boundaries that don’t exist in cloud environments. Heat, battery drain, and RAM pressure directly shape what an app can realistically do. Even a well-designed model or pipeline will fail if it ignores these constraints. Understanding them is essential before deciding how to run Tiny LLMs, vision models, or on-device RAG.

2.1 The Thermal Envelope

Phones warm up quickly when running continuous AI workloads. They have no active cooling, so when the device reaches its thermal threshold, the operating system slows the processor down to avoid overheating. This affects both latency and user experience.

2.1.1 Throttling dynamics: How sustained inference kills performance

Thermal throttling usually begins when the device surface reaches around 42–48°C. The heat builds faster during:

- LLM generation above ~10 tokens/sec

- High frame rate vision tasks (50–60 FPS object detection)

- Multimodal workloads that use both camera and NPU

Once throttling starts, performance drops sharply:

- Token generation can slow by 30–70%

- Camera-driven models lose frame rate

- UI animations may stutter or freeze

For on-device AI, this means features must be designed to avoid long, uninterrupted compute bursts.

2.1.2 Burst vs. Sustained workloads

Most mobile AI tasks fall into two categories:

- Burst workloads — quick operations like autocomplete, intent classification, or summarizing a notification. These fit naturally on-device because they complete before heat builds up.

- Sustained workloads — continuous video analysis, live transcription, or long-context reasoning. These must be throttled, chunked, or selectively offloaded.

Common mitigation techniques include:

- Lowering frame rate until motion is detected

- Using smaller or more aggressively quantized models

- Switching to cloud inference when the device is too hot

The goal is to keep the device responsive while still delivering value from on-device AI.
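These mitigations can be combined into one policy function. The sketch below is illustrative: the four-level state enum is modeled loosely on the thermal states that mobile operating systems report, while the frame rates and model names are hypothetical.

```python
from enum import IntEnum


class ThermalState(IntEnum):
    NOMINAL = 0
    FAIR = 1
    SERIOUS = 2
    CRITICAL = 3


def plan_vision_workload(state: ThermalState, motion_detected: bool,
                         online: bool) -> dict:
    """Pick frame rate, model variant, and execution target for the next window."""
    # Idle scenes run at a low frame rate until motion is detected.
    fps = 30 if motion_detected else 5
    model = "standard"
    target = "device"
    if state >= ThermalState.FAIR:
        # Warm device: cap the frame rate and use a smaller quantized model.
        fps = min(fps, 15)
        model = "small-int4"
    if state >= ThermalState.SERIOUS:
        # Hot device: drop to the minimum rate and offload if a network exists.
        fps = min(fps, 5)
        if online:
            target = "cloud"
    return {"fps": fps, "model": model, "target": target}
```

Re-evaluating the plan every few seconds lets the app step back up once the device cools.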

2.2 The Battery Budget

Battery determines whether users trust an AI feature. If a model drains 20% of a battery during a short session, users quickly disable the feature. On-device AI must therefore be engineered with energy use front and center.

2.2.1 Measuring Joules per Token (J/T) and Joules per Frame

Benchmarking energy cost is as important as benchmarking speed. For mobile, the right metrics depend on the model type:

- LLMs: Joules per token

- Vision: Joules per frame

- Embeddings: Joules per sample

For example, a well-optimized 2–3B INT4 model on an iPhone 15 Pro typically costs:

- ~0.05–0.1 J/token

- 2–4 W during active inference

Vision models consume more power, often spiking to 6 W or higher during high-frame-rate processing.

These numbers help architects design tasks that fit within a user’s expected battery usage.
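Joules per token follows directly from average power and wall-clock time. The sketch below assumes the power reading comes from a platform profiler or an external power monitor; the sample values are made up for illustration.

```python
def joules_per_token(avg_power_watts: float, duration_s: float, tokens: int) -> float:
    """Energy per generated token: (watts x seconds) / tokens."""
    if tokens <= 0:
        raise ValueError("tokens must be positive")
    return avg_power_watts * duration_s / tokens


# Hypothetical measurement: 2.0 W average over a 5-second generation of 120 tokens.
jpt = joules_per_token(avg_power_watts=2.0, duration_s=5.0, tokens=120)
print(f"{jpt:.3f} J/token")
```

The same formula gives joules per frame or per embedded sample when `tokens` is replaced with the relevant count.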

2.2.2 Impact of memory bandwidth

A major—but often overlooked—source of battery drain is memory bandwidth. Large models require constant fetching of weights and key/value caches from RAM. Each fetch costs energy.

Smaller models reduce this cost, which is one reason Tiny LLMs perform so well on mobile: fewer parameters mean fewer memory transfers.

2.2.3 Techniques to minimize wake-ups and radio usage

Surprisingly, using the network is often more expensive than doing inference locally. Radios consume significant energy, especially if they need to re-establish a connection.

To reduce battery impact:

- Batch background tasks to avoid repeated wake-ups

- Prefer local inference to avoid unnecessary API calls

- Cache embeddings or summaries locally

- Sync with the cloud only during charging or when on Wi-Fi

When designed correctly, on-device AI can reduce total energy consumption by avoiding constant cloud communication.

2.3 Memory Management (RAM)

However efficient the model is, it still needs to fit in RAM. Tiny LLMs are small relative to server models, but even a 1B–3B parameter model can push the limits on mid-range devices.

2.3.1 The OS Killer: iOS/Android aggressive background app killing

Both iOS and Android aggressively reclaim memory when RAM is tight. Large LLMs or vision models may force the app into situations where:

- The OS kills the app in the background

- The model is unloaded without warning

- The user returns to a cold-start experience

To reduce the risk, developers use:

- Lazy-loading, where only critical model layers load initially

- Memory-mappable quantized formats such as GGUF, or sharded MLX weights

- Adapters or LoRA modules instead of full fine-tuned models

These strategies help keep memory usage manageable while still supporting multiple on-device features.

2.3.2 Unified Memory Architecture (UMA)

Most modern mobile chipsets use a Unified Memory Architecture (UMA). CPU, GPU, and NPU share the same RAM pool. This makes some operations faster, but it also means all components compete for the same memory budget.

Typical limits:

- Devices with 4 GB RAM can comfortably host ~1B parameter models

- Devices with 8 GB RAM can support ~3B models

- Devices with 12 GB RAM can support ~8B models, depending on quantization

UMA benefits include:

- No PCIe transfer overhead

- Lower latency for multimodal tasks

- Efficient sharing across compute units

But UMA also means poor memory planning can affect the entire system, not just the AI feature. If an LLM takes too much RAM, other apps or system processes slow down, creating a poor experience.
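A rough memory estimate helps check whether a model fits a device class before any on-device testing. The sketch below counts only quantized weights and the key/value cache; the layer and head counts are a hypothetical 3B-class configuration, and runtime overhead, activations, and the app itself come on top.

```python
def weight_bytes(n_params: float, bits_per_weight: int) -> float:
    """Storage for the model weights at a given quantization level."""
    return n_params * bits_per_weight / 8


def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   context_len: int, bytes_per_value: int = 2) -> int:
    """Keys and values (factor 2) for every layer, KV head, and cached position."""
    return 2 * n_layers * n_kv_heads * head_dim * context_len * bytes_per_value


weights = weight_bytes(3e9, 4)  # 3B parameters at INT4
cache = kv_cache_bytes(n_layers=28, n_kv_heads=8, head_dim=128, context_len=2048)
print(f"weights: {weights / 1e9:.2f} GB, KV cache: {cache / 2**20:.0f} MiB")
```

Because the cache grows linearly with context length, halving the context window is often the cheapest way to recover memory on a mid-range device.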

3 The Optimization Pipeline: From Foundation to Artifact

Taking a large cloud model and turning it into something a mobile device can run is not a simple conversion step — it’s a full optimization pipeline. You reshape the model, compress it, and compile it into an artifact that fits the phone’s thermal, memory, and power budget. This section explains the main techniques used to get Tiny LLMs and vision models ready for real on-device deployment.

3.1 Quantization Strategies

Quantization is the most important tool in the pipeline. Without it, even a “small” 3B parameter model would overwhelm most mobile devices. Quantization reduces precision in a controlled way so the model becomes faster, smaller, and more battery-friendly, while still producing good results.

3.1.1 Post-Training Quantization (PTQ)

PTQ is the quickest path to shrink a model because it doesn’t require retraining. You simply take a pretrained model and convert its weights:

- FP32 → FP16

- FP16 → INT8

- INT8 → INT4

Each step reduces storage size and memory bandwidth requirements. For mobile workloads, bandwidth savings often matter more than raw compute.

Example using PyTorch dynamic quantization:

```python
import torch
from torch.ao.quantization import quantize_dynamic
from transformers import AutoModelForCausalLM

# Load the pretrained FP32 model.
model = AutoModelForCausalLM.from_pretrained("facebook/opt-1.3b")
model.eval()

# Replace every nn.Linear layer with a dynamically quantized INT8 version.
quantized = quantize_dynamic(model, {torch.nn.Linear}, dtype=torch.qint8)

torch.save(quantized.state_dict(), "opt-1.3b-int8.pt")
```

PTQ works well for most tasks, but multi-step reasoning may lose some accuracy, so measure the drop on your own workload.
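To make the source of that accuracy drop concrete, the following dependency-free sketch applies symmetric per-tensor INT8 quantization to a handful of weights and measures the round-trip error. It is a simplified illustration, not the scheme any particular framework uses internally; the sample weights are arbitrary.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: w is approximated by scale * q."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the INT8 range.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Map integer codes back to approximate floating-point weights."""
    return [v * scale for v in q]


weights = [0.42, -1.27, 0.003, 0.9]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, f"max error = {max_error:.4f}")
```

The worst-case error is half a quantization step, so small weights such as `0.003` collapse to zero. That loss of fine detail is what accuracy evaluations after PTQ are meant to catch.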

3.1.2 Quantization-Aware Training (QAT)

QAT produces better results, especially for INT8 or INT4 models. It simulates quantized operations while training, helping the model adjust to lower precision. For on-device AI, QAT often yields noticeably more stable outputs, particularly for multilingual, code, or reasoning tasks.

Blueprint in PyTorch:

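```python
import torch
import torch.ao.quantization as tq

# create_model, training_loader, and loss_fn are placeholders for your own code.
model_fp32 = create_model()
model_fp32.train()

# "qnnpack" targets ARM CPUs; use "fbgemm" for x86 servers.
torch.backends.quantized.engine = "qnnpack"
model_fp32.qconfig = tq.get_default_qat_qconfig("qnnpack")
model_qat = tq.prepare_qat(model_fp32)

# Build the optimizer from the prepared model's parameters.
optimizer = torch.optim.SGD(model_qat.parameters(), lr=1e-4)

for data, label in training_loader:
    optimizer.zero_grad()
    loss = loss_fn(model_qat(data), label)
    loss.backward()
    optimizer.step()

# Switch to eval mode, then convert the fake-quantized modules to INT8.
model_qat.eval()
model_int8 = tq.convert(model_qat)
```

Because QAT takes more effort, most teams start with PTQ and introduce QAT only for models that need higher accuracy or are meant for sustained vision/LLM workloads.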

3.1.3 The State of the Art: 4-bit vs. 2-bit quantization

Recent quantization methods — like GPTQ and AWQ — have made INT4 the sweet spot for mobile deployment. They allow 3B models to fit easily on-device while retaining most of their accuracy.

In practice:

- 4-bit AWQ: 90–95% accuracy retention, usable for general-purpose LLM tasks

- 2-bit techniques: still experimental; good for narrow, classification-oriented tasks but unreliable for generative outputs

Most on-device LLMs today use INT4 because it offers the best balance between speed, quality, and memory use.

3.2 Pruning and Distillation

Quantization isn’t the only tool. Pruning and distillation reshape the model architecture itself so it can run efficiently on real hardware.

3.2.1 Structural vs. Unstructured pruning

Pruning removes parts of a model that contribute little to the output. There are two approaches:

- Unstructured pruning removes individual weights. It creates sparse matrices but doesn’t always map well to mobile accelerators.

- Structured pruning removes entire neurons, channels, or attention heads. This produces smaller dense matrices that run efficiently on NPUs and GPUs — ideal for mobile deployment.

Example of unstructured pruning for demonstration:

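```python
import torch.nn.utils.prune as prune

# Zero out the 30% of weights with the smallest magnitude in one layer.
# model.layer is a placeholder for any module with a "weight" parameter.
prune.l1_unstructured(model.layer, name="weight", amount=0.3)
```

For real production pipelines, structured pruning gives far better speedups and is easier to support across runtimes like CoreML and TFLite.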

3.2.2 Knowledge Distillation

Distillation trains a smaller “student” model to imitate a larger “teacher.” For example:

- Teacher → Llama-3 70B

- Student → Phi-3 Mini or a custom 1B transformer

Distilled students perform surprisingly well, often punching far above their parameter count. For on-device inference where memory and thermal limits are tight, distillation is often what makes a model viable.

Basic distillation pattern:

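```python
import torch
import torch.nn.functional as F

# The teacher is frozen, so its forward pass needs no gradients.
with torch.no_grad():
    teacher_output = teacher(input_ids)

student_output = student(input_ids)

# Match the student's logits to the teacher's (both must share a vocabulary).
loss = F.mse_loss(student_output.logits, teacher_output.logits)
```

Nearly every strong 1B–3B model available today is some combination of pruning, distillation, and advanced quantization.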
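The pattern in this section compares raw logits with mean squared error. A widely used alternative is the temperature-softened KL divergence from the original knowledge distillation formulation, sketched below in plain Python for a single token position. The temperature of 2.0 is an arbitrary choice for illustration.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax over temperature-scaled logits."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


print(distillation_loss([3.0, 2.0, 1.0], [1.0, 2.0, 3.0]))
```

A higher temperature flattens both distributions, so the student also learns from the teacher's relative ranking of unlikely tokens rather than only its top choice.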

3.3 Compilation and Runtime Selection

Once the model is compressed and optimized, it still needs to run efficiently on the device. The runtime you choose matters just as much as the model architecture.

3.3.1 Apple Ecosystem: CoreML, palettization, MLX

Apple devices benefit from tight integration between software and the Apple Neural Engine (ANE). The main tools include:

- CoreML Tools: converts PyTorch or TensorFlow models into `.mlmodel` files

- Weight palettization: clusters weights into small palettes, reducing memory access and boosting speed

- MLX: Apple’s unified ML framework designed specifically for Apple Silicon

Example converting PyTorch to CoreML:

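```python
import coremltools as ct
import torch

# Tracing requires eval mode and a representative example input.
model.eval()
traced = torch.jit.trace(model, example_input)

mlmodel = ct.convert(
    traced,
    inputs=[ct.TensorType(shape=example_input.shape)],
    convert_to="mlprogram",
)

# ML Program models are saved as .mlpackage bundles;
# convert_to="neuralnetwork" produces a legacy .mlmodel file instead.
mlmodel.save("model.mlpackage")
```

For Tiny LLMs, MLX-LM is emerging as a preferred runtime thanks to its speed and lightweight footprint.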

3.3.2 Android/Cross-Platform: TFLite, ONNX Runtime, ExecuTorch

Android has a diverse hardware ecosystem, so cross-platform compatibility matters. The main options:

- TensorFlow Lite (TFLite) — best-in-class for quantized models; excellent hardware acceleration

- ONNX Runtime Mobile — flexible and good for custom operations

- ExecuTorch — PyTorch’s new edge runtime; strong NPU support and optimized for transformer architectures

Example converting to TFLite using INT8 optimizations:

```python
import tensorflow as tf

converter = tf.lite.TFLiteConverter.from_saved_model("model")
# Optimize.DEFAULT applies dynamic-range quantization (INT8 weights).
converter.optimizations = [tf.lite.Optimize.DEFAULT]
tflite_model = converter.convert()

with open("model.tflite", "wb") as f:
    f.write(tflite_model)
```

TFLite remains one of the most widely deployed inference engines on Android for mobile vision models and small language models.

3.3.3 WebGPU/WASM: Running models in mobile browsers

Mobile browsers are now capable of running LLMs and vision models using WebGPU. This allows developers to ship on-device AI without requiring a native app.

Common toolchains:

- WebLLM

- Transformers.js

- ONNX Runtime Web

Example (WebGPU text generation):

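```javascript
// Transformers.js v3 is published as @huggingface/transformers and adds WebGPU support.
import { pipeline } from "@huggingface/transformers";

// The model ID is kept from the original example; it must point to an ONNX export.
const generator = await pipeline("text-generation", "Xenova/phi-2", {
  device: "webgpu",
});

const output = await generator("Hello", { max_new_tokens: 30 });
console.log(output[0].generated_text);
```

This approach makes on-device inference possible even in web environments, opening new opportunities for private, client-side AI experiences.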

4 Generative AI on Mobile: Tiny LLMs and SLMs

Modern smartphones now have enough compute to run language models locally — something that only high-end GPUs could do a few years ago. These smaller LLMs, often called SLMs, are designed to fit the tight constraints of mobile hardware while still delivering useful capabilities such as summarization, intent classification, rewriting, and lightweight reasoning. The real challenge is selecting the right model and shaping prompts so the device stays fast, cool, and responsive.

4.1 Selecting the Right SLM (Small Language Model)

Tiny LLMs perform differently than their larger cloud counterparts. They are fast, memory-efficient, and far cheaper to run, but they have smaller context windows and less reasoning depth. Choosing the right model means understanding the device’s hardware and the types of queries the app must handle.

4.1.1 The <3 Billion Parameter Sweet Spot: Llama-3.2 (1B/3B), Phi-3.5 Mini, Gemma 2 (2B)

Most modern phones can comfortably run 1B–3B parameter models when quantized to INT4 or INT8. This size range hits a good balance: small enough to fit in memory and avoid throttling, but large enough to provide good-quality language output.

Common choices include:

- Llama-3.2 1B: great for classification, rewriting, and quick, predictable responses

- Llama-3.2 3B: noticeably stronger reasoning, still suitable for high-end devices

- Phi-3.5 Mini (3.8B): slightly above the 3B mark, but compact and strong at code and general text tasks

- Gemma 2 (2B): efficient architecture with low latency and energy usage

On high-end NPUs, a 3B INT4 model can generate tokens smoothly and remain within thermal limits for typical workloads. On mid-range phones, the same model may require more aggressive quantization or reduced context window sizes.

4.1.2 Performance benchmarks on iPhone 15 Pro vs. Pixel 9 Pro

Performance varies depending on hardware acceleration and heat conditions. The numbers below represent healthy devices under typical workloads. They help set realistic expectations for what an on-device assistant or summarizer can achieve.

Approximate throughput for a 3B INT4 model:

| Device | Runtime | Tokens/sec | Notes |
|---|---|---|---|
| iPhone 15 Pro | MLX-LM / CoreML | ~18–24 | Stable until thermal throttling begins |
| Pixel 9 Pro | ExecuTorch / Tensor G4 | ~14–20 | Depends on NPU load and device temperature |

When running sustained tasks — long summaries, multiple back-to-back queries, or background generation — token speed may fall as the device warms up. For mobile apps, this reinforces why short, targeted generations work best on-device.

4.2 Prompt Engineering for Low-Compute

On-device models don’t have the same compute freedom as cloud models. Prompt design directly affects latency, battery use, and the device’s ability to stay within thermal limits. Good prompts reduce workload without hurting quality.

4.2.1 Why “Chain of Thought” might be too expensive on-device

Cloud-scale models can handle verbose reasoning because servers absorb the cost. On a smartphone, every extra token consumes energy and generates heat. Chain-of-Thought prompts can triple or quadruple the output length, which lengthens total generation time roughly in proportion and increases battery draw.

Instead, mobile systems use more compact strategies:

- Ask the model to “explain briefly”

- Use one-sentence reasoning instead of detailed step-by-step chains

- Offload heavy reasoning to cloud when needed

- Use classifiers or heuristics to reduce reasoning load before generation

For example, an email triage feature doesn’t need a full reasoning tree.

Cloud-style prompt (inefficient for mobile):

```text
Think step by step and explain your reasoning in detail.
```

Mobile-optimized prompt:

```text
Decide the priority and explain in one short sentence.
```

The shorter version reduces compute cost drastically while preserving usefulness.

4.2.2 Token efficiency: Minimizing context window usage to save battery

The more tokens a prompt contains, the more memory bandwidth the model consumes — and memory access is a major source of energy use. Efficient prompts matter.

Common approaches:

- Use a rolling summary instead of feeding the full conversation

- Embed older messages and retrieve only relevant ones

- Keep system prompts short and focused

- Avoid overly verbose template prompts

With careful prompt tuning, developers often reduce energy per interaction by 40–60%. This directly improves battery life and allows the model to run longer without thermal throttling.
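The rolling-summary approach can be sketched as a budgeted context builder. This is a minimal illustration: `count_tokens` is a crude whitespace stand-in for the model's real tokenizer, and the function names are hypothetical.

```python
def count_tokens(text: str) -> int:
    # Crude stand-in: a real app should count with the model's tokenizer.
    return len(text.split())


def build_context(summary: str, messages: list[str], budget: int) -> list[str]:
    """Keep the rolling summary plus the newest messages that fit the budget."""
    kept = []
    remaining = budget - count_tokens(summary)
    for msg in reversed(messages):  # walk from newest to oldest
        cost = count_tokens(msg)
        if cost > remaining:
            break
        kept.append(msg)
        remaining -= cost
    return [summary] + kept[::-1]  # restore chronological order


history = ["a b c", "d e", "f g h i"]
context = build_context("User is planning a trip.", history, budget=11)
print(context)
```

Older messages that fall outside the budget are represented only through the summary, which keeps the prompt length, and therefore the energy per request, bounded.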

4.3 Fine-Tuning for Device (LoRA)

Shipping a fully fine-tuned model for every domain is impractical on mobile devices because each variant is a complete copy of the weights. LoRA adapters solve this by applying tiny, domain-specific updates to a single shared base model. This lets the app switch personalities or domains without reloading large weights.

4.3.1 Using Low-Rank Adaptation (LoRA) adapters to switch contexts

A LoRA adapter contains only a small set of learned weight adjustments. Instead of storing a full model per domain, the app stores a large base model plus several small adapters.

Typical sizes:

- Base model (quantized): 1–2 GB

- Medical LoRA: ~30 MB

- Legal LoRA: ~25 MB

- Personal writing LoRA: ~15 MB

Because adapters are small, they can be downloaded on demand or bundled with specific app features. Switching them is fast — often under 100 ms — so the app can fluidly adjust its behavior.
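The adapter sizes follow from how LoRA is constructed: each adapted weight matrix gains two low-rank factors. The sketch below estimates adapter size for a hypothetical configuration (hidden size 3072, 32 layers, four square attention projections per layer, rank 16, FP16 storage); real models differ in which matrices are adapted and in their shapes.

```python
def lora_params(d_in: int, d_out: int, rank: int) -> int:
    """LoRA adds two factors per matrix: A (rank x d_in) and B (d_out x rank)."""
    return rank * (d_in + d_out)


def adapter_bytes(hidden: int, n_layers: int, matrices_per_layer: int,
                  rank: int, bytes_per_param: int = 2) -> int:
    """Total adapter size when every adapted matrix is hidden x hidden."""
    per_matrix = lora_params(hidden, hidden, rank)
    return per_matrix * matrices_per_layer * n_layers * bytes_per_param


size = adapter_bytes(hidden=3072, n_layers=32, matrices_per_layer=4, rank=16)
print(f"{size / 2**20:.0f} MiB")  # prints "24 MiB"
```

Under these assumptions the adapter lands in the same tens-of-megabytes range as the sizes listed above, and halving the rank halves the download.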

Example using PEFT:

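```python
from transformers import AutoModelForCausalLM, AutoTokenizer
from peft import PeftModel

# Model and adapter names are placeholders for your own artifacts.
base = AutoModelForCausalLM.from_pretrained("Phi-3.5-mini-int4")
tokenizer = AutoTokenizer.from_pretrained("Phi-3.5-mini-int4")

# Attach the domain adapter on top of the shared base weights.
model = PeftModel.from_pretrained(base, "lora-medical")

inputs = tokenizer("Summarize this note in one sentence:", return_tensors="pt")
output = model.generate(**inputs, max_new_tokens=60)
```

This approach keeps storage requirements low while still supporting many specialized behaviors.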

4.4 Implementation Stack

Running on-device LLMs efficiently requires the right runtime. Most teams rely on specialized engines built around quantized inference and NPU acceleration.

4.4.1 Example workflow using llama.cpp (Android/iOS) and MLX-LM (Apple)

llama.cpp is one of the most widely used mobile inference engines. It runs GGUF quantized models and can be embedded on Android through JNI and on iOS through Swift. Apple devices can also use MLX-LM, which runs on Apple Silicon through Metal rather than the ANE.

Minimal llama.cpp example on Android (C++):

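```cpp
// Schematic only: elided arguments ("...") are left as in the original, and
// function names follow an older llama.cpp C API that later releases renamed.
llama_model_params model_params = llama_model_default_params();
llama_model *model = llama_load_model_from_file("phi-3.5-mini-int4.gguf", model_params);

llama_context_params ctx_params = llama_context_default_params();
ctx_params.n_ctx = 2048;
ctx_params.n_threads = 4;
llama_context *ctx = llama_new_context_with_model(model, ctx_params);

std::string prompt = "Summarize this message in one sentence:";
llama_tokenize(model, prompt.c_str(), ...);

// Generate tokens
while (generated < limit) {
    // Sample the next token after top-p filtering of the candidates.
    llama_token token = llama_sample_token(ctx, ...);
    llama_eval(ctx, &token, 1, ...);
    ++generated;
}
```

And an Apple-specific MLX-LM example: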

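```python
from mlx_lm import load, generate

# load() returns both the model and its tokenizer.
# Substitute any MLX-format 4-bit checkpoint here.
model, tokenizer = load("mlx-community/Llama-3.2-1B-Instruct-4bit")

# generate() returns the completion as a plain string.
output = generate(
    model,
    tokenizer,
    prompt="Explain in 2 sentences why caching improves performance.",
    max_tokens=50,
)
print(output)
```

Both runtimes are designed for low-latency quantized inference and are common choices for real-world Tiny LLM deployments today.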

5 On-Device RAG: Private Knowledge Retrieval

Retrieval-Augmented Generation (RAG) strengthens model responses by grounding them in real information. When implemented on-device, RAG provides this benefit without sending any user data to the cloud. This is valuable for privacy, compliance, and offline use cases. In many ways, on-device RAG is the natural companion to Tiny LLMs: the model handles generation, and the vector store provides relevant context — all inside the user’s phone.

5.1 The Privacy Architecture

On-device RAG is built around a simple idea: everything stays local. This lets the app answer questions about documents, chats, notes, or workplace material stored on the device — without exposing sensitive content to external servers. For enterprise and consumer apps alike, this makes RAG suitable for cases where confidentiality matters.

5.1.1 Why sending vector embeddings to the cloud defeats Private AI

Even though embeddings look like anonymous numbers, they still contain meaning. If they leave the device, the system loses the privacy benefits of local inference. An embedding can reveal:

- The theme or intent of a message

- Whether the text mentions a person or event

- High-level semantics that could be re-identified

Sending embeddings to the cloud also introduces:

- GDPR/CCPA obligations

- Rules about data retention and residency

- The risk of future misuse or unauthorized inference

For apps that offer “private AI” or process personal notes, local embeddings and local similarity search are essential — not optional.

5.1.2 Local PII scrubbing before embedding

Even when everything runs on-device, many teams still apply lightweight PII scrubbing before embedding text. This reduces any risk of sensitive information being reused or misinterpreted later in the retrieval pipeline.

Example scrubbing step:

```python
import re

def scrub(text: str) -> str:
    """Simple on-device PII removal using regular expressions."""
    text = re.sub(r"\b\d{3}-\d{2}-\d{4}\b", "[SSN]", text)  # US Social Security numbers
    text = re.sub(r"\b\d{10}\b", "[PHONE]", text)           # bare 10-digit phone numbers
    text = re.sub(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}", "[EMAIL]", text)
    return text
```

Because this happens entirely on the device, the raw text never leaves the user’s control, which is one of the core promises of on-device RAG.

5.2 Embedded Vector Stores

The vector store is where embeddings live. On-device, it must be small, predictable, and efficient. It needs to handle thousands of documents without overwhelming storage or RAM.

5.2.1 Technology choices

Several embedded vector stores work well on mobile, each with its own strengths:

- SQLite + sqlite-vss — a natural fit because SQLite is already used across iOS and Android; easy to ship, easy to query

- ObjectBox — zero-copy design makes it fast, especially when embedding many small items

- Chroma (embedded mode) — portable across Python-based apps

- Voy (Rust/WASM) — extremely fast for hybrid or browser-based mobile apps

Most mobile app teams start with SQLite because it keeps the storage footprint small and avoids adding additional engine dependencies.

5.2.2 Managing index size on mobile storage

Index size matters more on a phone than on a cloud server. As users accumulate notes, emails, or calendar entries, embeddings grow. The app must proactively manage the index to avoid consuming hundreds of megabytes.

Typical approaches include:

- Chunking text into larger segments to reduce embedding count

- Using smaller embedding dimensions (e.g., 256–384)

- Pruning documents that haven’t been accessed in months

- Compressing or archiving older chunks

These choices strike a balance between retrieval accuracy and long-term device storage health.
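The storage impact of these choices is easy to estimate, since raw vector storage is the chunk count times the dimension times the bytes per value. The sketch below compares 384-dimensional float32 vectors with 256-dimensional vectors stored as one byte per value; the 50,000-chunk corpus and the INT8 storage option are assumptions for illustration, and index structures and metadata add overhead on top.

```python
def index_bytes(n_chunks: int, dim: int, bytes_per_value: int = 4) -> int:
    """Raw vector storage, excluding index structures and metadata."""
    return n_chunks * dim * bytes_per_value


full = index_bytes(50_000, 384)         # float32 vectors
compact = index_bytes(50_000, 256, 1)   # smaller dimension, one byte per value
print(f"{full / 1e6:.1f} MB vs {compact / 1e6:.1f} MB")
```

Doubling the chunk size roughly halves `n_chunks`, which is why coarser chunking appears first in the list above.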

5.3 The Retrieval Pipeline

On-device RAG follows the same logical pattern as cloud RAG, but everything is optimized for smaller models and limited resources. The goal isn’t to retrieve perfect context — it’s to retrieve useful enough context that a 1B–3B SLM can answer well.

5.3.1 On-device embedding models

Embedding models for mobile must be lightweight and fast. Good options include:

- Distilled MiniLM variants

- TinyBERT

- Compact sentence-transformer models

- Custom TFLite embedding models optimized for NPUs

They typically output vectors in the 256–384 dimension range, which keeps similarity search quick and storage manageable.

Example embedding step:

```python
emb = embed_model.encode(scrubbed_text)
store.insert({"id": doc_id, "vector": emb, "content": scrubbed_text})
```

This is the core of on-device RAG: embed → store → retrieve.

5.3.2 Hybrid Search: Combining keyword search (FTS) with semantic search (vectors)

Tiny LLMs perform best when they get the right context. Hybrid search boosts accuracy by combining:

- Keyword search (exact matches)

- Vector search (semantic matches)

SQLite example:

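```sql
-- Illustrative only: vss_score stands in for your extension's distance function
-- (sqlite-vss, for example, exposes vss_search and a distance column instead),
-- and MATCH requires the content to be indexed in an FTS5 table.
SELECT content, vss_score(embedding, :query_vector) AS score
FROM documents
WHERE content MATCH :keyword
ORDER BY score DESC
LIMIT 5;
```

This hybrid approach allows the system to answer both specific and fuzzy questions without relying on a large cloud model.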
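When keyword and vector searches run as separate queries, their result lists still have to be merged. One common way to do this, though not the only one and not necessarily what the SQL above implies, is reciprocal rank fusion, which needs only the rank of each document in each list. The document IDs below are made up, and `k=60` is the conventional default for this technique.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Merge ranked ID lists; each appearance contributes 1 / (k + rank)."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest combined score first.
    return sorted(scores, key=lambda d: scores[d], reverse=True)


keyword_hits = ["doc3", "doc1", "doc7"]  # e.g. from full-text search
vector_hits = ["doc1", "doc4", "doc3"]   # e.g. from vector similarity search
fused = reciprocal_rank_fusion([keyword_hits, vector_hits])
print(fused)
```

Documents that appear in both lists rise to the top, and no score normalization between the two search methods is required.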

5.4 Code Pattern: The “Local Context Window”

The final step is assembling retrieved results into the prompt for the Tiny LLM. Because mobile models handle smaller context windows, the app has to be selective with what it injects.

5.4.1 Fetching user data → Chunking → Embedding → Injection

A typical on-device workflow for personal data (e.g., meetings) looks like this:

# Fetch user data from a local API
events = local_api.get_calendar_events()

# Chunk text
chunks = []
for event in events:
    chunks.extend(chunk_text(event.description, size=200))

# Embed and store (scrub once so the stored text matches what was embedded)
for idx, ch in enumerate(chunks):
    clean = scrub(ch)
    vec = embed_model.encode(clean)
    store.insert({"id": f"evt-{idx}", "vector": vec, "content": clean})

# Query workflow
question = "What meetings do I have tomorrow?"
query_vec = embed_model.encode(question)
results = store.search(query_vec, top_k=4)

# Build the prompt from the retrieved chunks and the same question
context = "\n".join(r["content"] for r in results)
prompt = f"Context:\n{context}\n\nQuestion: {question}"
response = slm.generate(prompt)

This is the pattern that enables private assistants, offline knowledge tools, and productivity apps: everything happens on the device, nothing leaves it, and the user gets accurate, grounded responses.
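Because small models have small context windows, it helps to cap how much retrieved text is injected. The sketch below is one simple way to do that, assuming the chunks arrive sorted best-first and that one whitespace-separated word roughly equals one token (a crude proxy; a real app would use the model's tokenizer). The name `build_context` is illustrative.

```python
def build_context(chunks, max_tokens=512):
    """Keep the highest-ranked chunks that fit inside the token budget."""
    selected, used = [], 0
    for chunk in chunks:               # assumed sorted best-first
        cost = len(chunk.split())      # crude proxy: one token per word
        if used + cost > max_tokens:
            continue                   # skip it; a later, smaller chunk may still fit
        selected.append(chunk)
        used += cost
    return "\n".join(selected)


ranked = [
    "Design review 10:00 with Priya",
    "Lunch",
    "Quarterly planning offsite all afternoon in room 4",
]
context = build_context(ranked, max_tokens=8)
print(context)
```

Here the first two chunks fit within the eight-word budget and the third is dropped, so the prompt stays inside what the model can handle.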

6 Computer Vision: Beyond Basic Classification

Mobile apps increasingly expect more than simple image classification. They need segmentation, document understanding, object tracking, depth cues, and scene interpretation — often in real time. Running these workloads on-device keeps interactions responsive and avoids the privacy and bandwidth issues of streaming video to the cloud. Modern NPUs make this possible, but only with architectures and pipelines designed specifically for mobile hardware.

6.1 Modern Architectures

Computer vision models have evolved to balance accuracy with extremely low compute cost. These architectures are built to run on edge devices, making them a natural fit for on-device AI pipelines that pair Tiny LLMs with lightweight vision encoders.

6.1.1 Vision Transformers (ViT) on Mobile: MobileViT vs. EfficientFormer

Transformers have become dominant in vision tasks, but vanilla ViT models are too heavy for mobile use. Mobile variants reduce attention overhead and introduce convolution-based components to make them practical on NPUs.

Two common options:

- MobileViT — Combines convolution layers with small transformer blocks. Good for segmentation, scene understanding, and general-purpose classification.

- EfficientFormer — Designed explicitly for speed; scales well with INT8 quantization and is ideal for apps that must keep latency extremely low.

Both models can run comfortably on modern phone NPUs at real-time or near real-time speeds, even when processing camera frames continuously.

6.1.2 Object Detection: YOLOv10 (Nano versions) and RT-DETR optimizations

Mobile-friendly detectors such as YOLOv10-Nano or optimized RT-DETR variants offer a strong balance between detection quality and low compute overhead. On high-end devices, YOLOv10-Nano can handle 60 FPS or more, depending on resolution and quantization.

A minimal TFLite object detection example (Android/Kotlin):

// loadModelFile, preprocessFrame, and parseDetections are app-specific helpers
val model = Interpreter(loadModelFile("yolov10n-int8.tflite"))
val input = preprocessFrame(frame)

// Output shape depends on how the model was exported; here, 100 boxes x 6 values
val output = Array(1) { Array(100) { FloatArray(6) } }
model.run(input, output)
val boxes = parseDetections(output)

These models work well for document boundary detection, barcode scanning, AR markers, or any task where the camera needs to understand its surroundings quickly and privately.
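The detection-parsing step is left undefined in the Kotlin example. The sketch below shows the idea in Python (used here for all added examples), assuming each output row is laid out as [x1, y1, x2, y2, score, class_id]. That layout is an assumption about the exported model, so check the actual output tensor before relying on it.

```python
def parse_detections(rows, conf_threshold=0.5):
    """Keep rows whose score clears the threshold, highest score first.

    Assumes each row is [x1, y1, x2, y2, score, class_id].
    """
    detections = []
    for x1, y1, x2, y2, score, class_id in rows:
        if score >= conf_threshold:
            detections.append(
                {"box": (x1, y1, x2, y2), "score": score, "class_id": int(class_id)}
            )
    return sorted(detections, key=lambda d: d["score"], reverse=True)


raw = [
    [10.0, 20.0, 110.0, 220.0, 0.91, 0.0],
    [0.0, 0.0, 5.0, 5.0, 0.12, 3.0],
    [50.0, 60.0, 90.0, 120.0, 0.67, 1.0],
]
kept = parse_detections(raw)
print(len(kept), kept[0]["score"])
```

Raising `conf_threshold` trades recall for fewer false positives, which is often the right call for camera overlays that redraw on every processed frame.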

6.2 The Video Pipeline

Real-time video is one of the most demanding on-device workloads. Each frame requires decoding, preprocessing, running the model, and post-processing — often under tight thermal and battery constraints. Efficient pipelines reduce unnecessary work and scale output quality based on device conditions.

6.2.1 Frame skipping strategies and resolution scaling

A device does not need to process every frame at full resolution to deliver a good user experience. Many apps adopt adaptive strategies:

- Downscale 1080p streams to 320p for early detection

- Process only every third to fifth frame when movement is low

- Use low-resolution passes to detect objects, then trigger a high-resolution pass only when needed

These techniques significantly reduce heat buildup and power draw while maintaining accurate results.
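A minimal sketch of motion-aware frame skipping follows. It assumes the app already has a per-frame motion estimate between 0 and 1 (for example, from frame differencing); the thresholds and the function names are illustrative, not taken from any framework.

```python
def frame_stride(motion_score, low=0.1, high=0.5):
    """Map a 0..1 motion estimate to 'process every Nth frame'."""
    if motion_score >= high:
        return 1   # fast movement: process every frame
    if motion_score >= low:
        return 3   # moderate movement: every third frame
    return 5       # near-static scene: every fifth frame


def frames_to_process(motion_scores):
    """Return the indices of frames that should go through the model."""
    selected, since_last = [], None
    for idx, motion in enumerate(motion_scores):
        stride = frame_stride(motion)
        if since_last is None or since_last >= stride:
            selected.append(idx)
            since_last = 0
        since_last += 1
    return selected


print(frames_to_process([0.0] * 11))        # static scene
print(frames_to_process([0.0, 0.0, 0.9]))   # motion appears on the third frame
```

Because the stride is re-evaluated on every frame, a sudden burst of motion triggers processing immediately instead of waiting out the longer static-scene interval.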

6.2.2 Using OS-level APIs (Vision Framework on iOS, ML Kit on Android) vs. Custom Models

Both iOS and Android offer optimized on-device vision APIs:

- Apple Vision Framework — text detection, barcode recognition, face landmarks, document detection

- Google ML Kit — on-device OCR, pose estimation, face mesh tracking

These APIs often outperform custom models for foundational tasks because they take advantage of hardware-specific optimizations and years of tuning. Many production apps combine OS frameworks for quick tasks and rely on small custom models only for domain-specific features.

A balanced design might use:

- OS API → detect document corners

- Custom model → classify document type or extract structured information

- Tiny LLM → summarize or interpret extracted content

This hybrid pattern matches the design principles applied throughout the article: use local compute wisely, minimize energy cost, and keep workloads targeted.

6.3 Multimodal Inputs

As Tiny LLMs become faster, the next step is combining language and vision on-device. Vision-Language Models (VLMs) support tasks such as generating captions, answering questions about images, or assisting with accessibility. When designed carefully, these VLMs can run entirely offline.

6.3.1 Vision-Language Models (VLM): Running LLaVA or BakLLaVA on device

Mobile-optimized VLMs usually follow a simple architecture:

- A lightweight vision encoder (MobileNet, tiny ViT, or MobileViT)

- A projection layer that maps vision embeddings into the language model’s space

- A compact SLM (1B–3B parameters) to generate text

This modular design keeps memory usage manageable while allowing apps to combine visual information with language reasoning.

Example workflow (simplified Python):

vision_emb = vision_encoder(image_tensor)
projected = projector(vision_emb)
prompt = "Describe the main objects in this image."
output = slm.generate_with_embed(prompt, projected)
print(output)

Running this entirely on-device enables:

- Offline captioning for photos or camera feeds

- Accessibility features such as describing scenes aloud

- AR assistance without cloud latency

- Private visual search over local images

These multimodal pipelines show how on-device AI is quickly expanding beyond text-only scenarios.

7 Production Patterns: Packaging, Security, and Updates

Getting on-device AI into production is very different from deploying a cloud model. You must fit large models into small app bundles, protect them from extraction, and update them without breaking user workflows. These are practical engineering concerns that determine whether an on-device AI feature succeeds in the real world. This section describes the patterns teams rely on to package models efficiently, secure them properly, and maintain them over time.

7.1 Packaging and Binary Size

Model size directly affects how users download and install your app. Even after quantization, Tiny LLMs can still be tens or hundreds of megabytes. App stores impose download limits, and users often hesitate to install large apps over cellular networks. This means the initial binary must stay lean, and models should load only when needed.

7.1.1 The “100MB Limit” problem: App Store download restrictions

App stores historically restrict how large an app can be when downloaded over mobile data. While the exact rules change over time, the safe assumption is that many users will hit a practical ceiling around 100–200MB. Because of this, teams typically:

- Keep the core install as small as possible

- Download large models only on Wi-Fi or when the user opts in

- Split optional AI features into separate model bundles

- Store only the base model locally, and fetch LoRA adapters on demand

For example, a productivity app might ship with a 1B general-purpose SLM included, but download domain-specific adapters (legal, medical, finance) the moment the user activates those features.
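The download rules above can be captured in a small policy function. This is a sketch under stated assumptions: the 100 MB cellular ceiling is a placeholder taken from the section title, not a current store rule, and `should_download_model` is a hypothetical helper.

```python
def should_download_model(size_mb, on_wifi, user_opted_in, cellular_limit_mb=100):
    """Decide whether to fetch a model bundle right now."""
    if on_wifi:
        return True             # Wi-Fi: size is not a concern
    if user_opted_in:
        return True             # the user explicitly accepted a cellular download
    return size_mb <= cellular_limit_mb   # small assets are fine on cellular


print(should_download_model(450, on_wifi=False, user_opted_in=False))  # large model, cellular
print(should_download_model(25, on_wifi=False, user_opted_in=False))   # small adapter, cellular
```

Under this policy a small LoRA adapter can download on cellular immediately, while a base model of several hundred megabytes waits for Wi-Fi or explicit consent.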

7.1.2 Asset Delivery: On-Demand Resources (ODR) on iOS and Play Asset Delivery on Android

Both platforms offer mechanisms to deliver large assets — like quantized models — without bloating the initial app package.

On iOS (ODR):

- Assets are grouped into lightweight “tags”

- The app requests tags when needed

- The system downloads and caches models transparently

On Android (Play Asset Delivery):

- Assets are packaged into modules

- Modules can be install-time, fast-follow, or on-demand

- Only the necessary model files are fetched

Example of pulling a model via ODR (Swift):

let request = NSBundleResourceRequest(tags: ["slm-int4-model"])
request.beginAccessingResources { error in
    if let err = error {
        print("Error loading model: \(err)")
        return
    }
    loadModelFromBundle("slm_int4.gguf")
}

This structure makes it possible to support multiple models (Tiny LLMs, embedding models, vision models) without forcing every user to download everything upfront.

7.2 Model Security and IP Protection

Once a model is on-device, it becomes accessible to attackers who can extract the app bundle and inspect its contents. Quantized weights encode the results of expensive fine-tuning and can leak information about proprietary training data. Securing them is essential.

7.2.1 Encrypting model weights at rest

A standard pattern is to encrypt the model on disk and decrypt it only in memory using keys stored in secure hardware. This prevents casual extraction of .gguf, .tflite, or .onnx files.

Example (C# / MAUI-style pseudocode):

byte[] encrypted = File.ReadAllBytes("model.enc");
byte[] key = Keychain.GetKey("model_key"); // Secure Enclave / StrongBox
byte[] decrypted = AesDecrypt(encrypted, key);
var context = LlamaContext.LoadFromBuffer(decrypted);

This ensures that even if someone pulls files from the app bundle, the model remains unreadable without the device-specific key.

7.2.2 Obfuscation techniques to prevent model theft / reverse engineering

Beyond encryption, teams use additional layers to make extraction harder:

- Splitting the model into multiple partial files

- Encoding fragments with custom compressors

- Storing separate fragments in different asset packages

- Generating per-device encryption keys at first launch

- Adding decoy or dummy weight files

In some deployments, the model is reassembled only at runtime, inside memory, using a combination of encrypted fragments. This creates a high barrier for reverse engineering while keeping load times manageable.

7.3 OTA (Over-the-Air) Model Updates

Models improve over time — new quantization methods, better fine-tuning, updated embeddings, and security patches. Updating models in production requires care: the update must be seamless, preserve user data, and avoid breaking the local vector store.

7.3.1 Implementing A/B testing for models

Updating a model without testing can degrade user experience or battery life. A/B testing lets you ship a new model to a subset of users and compare results.

Python-like logic:

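import hashlib

# Python's built-in hash() is salted per process for strings, so use a stable digest
digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
bucket = int(digest, 16) % 100

if bucket < 10:  # roughly 10% of users get the new model
    active_model = load_model("v2-int4")
else:
    active_model = load_model("v1-int4")

Apps report anonymous telemetry like: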

- token latency

- crash frequency

- model warm-load time

- battery impact

Because all inference happens locally, prompts and outputs never leave the device. Only high-level usage statistics do, which keeps the privacy exposure small.

7.3.2 Handling schema migrations for local vector stores when embedding models change

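If the embedding model changes (dimension size, tokenizer, or training domain), all existing embeddings must be regenerated. Otherwise, query vectors and stored vectors live in different spaces: retrieval accuracy drops, and a dimension mismatch can make search fail outright.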

A safe migration pipeline looks like this:

- Mark the current vector store as “legacy”

- Generate new embeddings in the background

- Swap the new index once complete

- Clean up old embeddings when safe

Example:

if store.schema_version < REQUIRED_VERSION:
    # temp_store is a fresh, empty index built alongside the legacy one
    for row in store.all():
        new_vec = embed_model.encode(row["content"])
        temp_store.insert({"id": row["id"], "vector": new_vec, "content": row["content"]})

    store.replace_with(temp_store)
    store.schema_version = REQUIRED_VERSION

This process typically runs when the phone is charging or idle so it doesn’t interfere with normal usage.

8 The Orchestration Layer: Routing and Fallback

The orchestration layer decides how and where AI workloads run. Mobile devices operate under shifting conditions — the NPU may already be busy, the phone may be heating up, the network may be weak, or the user may request something that is too complex for an on-device model. A good orchestration layer keeps the experience smooth despite these changes. Instead of failing, the system adapts.

8.1 The “Graceful Fallback” Pattern

Fallback logic protects the user experience when the optimal compute path is unavailable. The idea is simple: start with the fastest, most efficient option, and step down only when necessary. This avoids timeouts, crashes, or “Sorry, I can’t do that right now” moments.

8.1.1 Tier 1: Try NPU-accelerated INT4 model

If the NPU is available and the device is within a safe thermal range, the app uses the NPU-optimized INT4 model. This provides the lowest latency and best energy efficiency — ideal for quick summaries, suggestions, or on-device assistants.

fallback = False
try:
    model = load_npu_model("slm-int4.gguf")
    output = model.generate(prompt)
except NPUUnavailableError:
    fallback = True  # handled by Tier 2

This tier gives users the “instant response” feel expected from modern mobile AI.

8.1.2 Tier 2: Fallback to CPU/quantized model if NPU is busy or hot

If the NPU is tied up (for example, running a real-time vision model) or the device is starting to overheat, the app switches to a smaller CPU/INT8 model. It may adjust token limits or shorten responses to keep latency manageable.

if fallback:
    model = load_cpu_model("slm-int8-minimal.gguf")
    output = model.generate(prompt, max_tokens=60)

The user still gets an answer, just slightly slower or shorter.

8.1.3 Tier 3: Rule-based heuristics if the model cannot run at all

If the device is severely constrained — extremely hot, very low battery, or under heavy load — even the smaller model may not be usable. In those cases, the system falls back to lightweight heuristics or templates.

Example (C#):

if (modelLoadFailed)
{
    return SimpleHeuristics.Answer(prompt);
}

These heuristics ensure the app never leaves the user hanging. It’s better to respond simply than not at all.
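The three tiers can also be expressed as a single ordered chain. The sketch below is one possible shape, with stub handlers standing in for the real NPU, CPU, and rule-based paths; all function names here are hypothetical.

```python
def answer_with_fallback(prompt, tiers):
    """Try each (name, handler) in order; return the first successful result."""
    for name, handler in tiers:
        try:
            return name, handler(prompt)
        except Exception:
            continue  # this tier is unavailable; step down to the next one
    raise RuntimeError("no tier could answer")


def npu_tier(prompt):
    raise RuntimeError("NPU busy")  # simulate an unavailable NPU


def cpu_tier(prompt):
    return f"[cpu-int8] short answer to: {prompt}"


def heuristic_tier(prompt):
    return "The assistant is unavailable right now; here is a quick tip instead."


tiers = [("npu", npu_tier), ("cpu", cpu_tier), ("rules", heuristic_tier)]
used, reply = answer_with_fallback("Summarize my notes", tiers)
print(used, reply)
```

Returning the tier name alongside the reply makes it easy to log how often each fallback fires, which is useful telemetry when tuning thermal and battery thresholds.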

8.2 Hybrid Routing (The Router Model)

In many production apps, both on-device and cloud inference exist side-by-side. The orchestration layer decides which path to use for every request. The key is to make this decision quickly and consistently.

8.2.1 Using a tiny classifier model (20MB) to decide complexity

A small classifier — often a cheap INT8 model — predicts whether the user’s request is simple enough for on-device processing or requires cloud resources. This keeps cloud usage low while maintaining good accuracy on harder tasks.

# Load the router once at startup, not on every request
cls = load_classifier("router-mini-int8.tflite")

def route(prompt):
    score = cls.predict(prompt)  # higher score = more complex request
    if score < 0.5:
        return on_device_slm.generate(prompt)
    return cloud_api.generate(prompt)

Typical “on-device friendly” tasks include rewriting text, extracting intent, summarizing short content, or answering factual questions with limited reasoning.

Deep reasoning, complex code generation, or long-context queries often route to the cloud.

8.2.2 Latency hiding: Start on-device generation while cloud output arrives

Cloud inference may take longer depending on network conditions. One effective pattern is to let the local SLM begin generating a response immediately while the cloud model works in parallel. If the cloud result arrives quickly, it supersedes the local output. If not, the user still sees progress.

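def answer(prompt):
    local_future = executor.submit(local_model.generate, prompt)
    cloud_future = executor.submit(cloud.generate, prompt)

    # Future.result() takes its timeout in seconds
    local_output = local_future.result(timeout=300)

    # Prefer the cloud answer only if it has already finished without error
    if cloud_future.done() and cloud_future.exception() is None:
        return cloud_future.result()
    return local_output

This approach hides latency spikes and improves perceived responsiveness.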

8.3 User Experience (UX) for On-Device AI

On-device AI introduces new states that users are not familiar with — model loading, warming up the NPU, throttling, battery-aware processing. Good UX helps users understand what’s happening without overwhelming them with technical details.

8.3.1 Progress indicators for model loading (“Warming up the brain…”)

Larger models take time to initialize, especially on first launch. Instead of freezing the UI, apps should communicate clearly and briefly:

- “Preparing your assistant…”

- “Loading AI model…”

- “Almost ready…”

The app can also preload models before they’re needed. For example, an email app might start warming up its summarization model as soon as the user opens the inbox.

8.3.2 Handling low-battery states (programmatically scaling down AI features)

AI workloads draw noticeable power. When the device enters low-battery mode (commonly below 15%), apps should scale down:

- Use smaller models

- Reduce token counts

- Disable continuous camera analysis

- Delay non-essential background AI tasks

Swift example:

if battery.level < 0.15 {
    aiFeaturesEnabled = false
    showMessage("AI features paused to save battery.")
} else {
    aiFeaturesEnabled = true
}

This prevents the app from draining the last bit of battery while remaining transparent about why certain features are paused.
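The Swift snippet switches AI features off entirely, whereas the list above describes a more gradual scale-down. A tiered policy might look like the sketch below. The 30% threshold, the token limits, and the `ai_policy` name are assumptions for illustration; the model names follow those used earlier in the article.

```python
def ai_policy(battery_level, charging=False):
    """Return feature settings for the current battery state (level is 0.0 to 1.0)."""
    if charging or battery_level >= 0.30:
        # Plenty of power: full feature set
        return {"model": "slm-int4", "max_tokens": 256,
                "camera_analysis": True, "background_tasks": True}
    if battery_level >= 0.15:
        # Getting low: shorter responses, no continuous camera or background work
        return {"model": "slm-int4", "max_tokens": 128,
                "camera_analysis": False, "background_tasks": False}
    # Low-battery state: smallest model and tight token limit
    return {"model": "slm-int8-minimal", "max_tokens": 60,
            "camera_analysis": False, "background_tasks": False}


print(ai_policy(0.80))
print(ai_policy(0.10))
print(ai_policy(0.10, charging=True))
```

Returning a settings dictionary rather than a single on/off flag lets each feature degrade independently, so the user keeps a reduced assistant instead of losing it outright.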