1 Why URL Shorteners Are Deceptively Hard

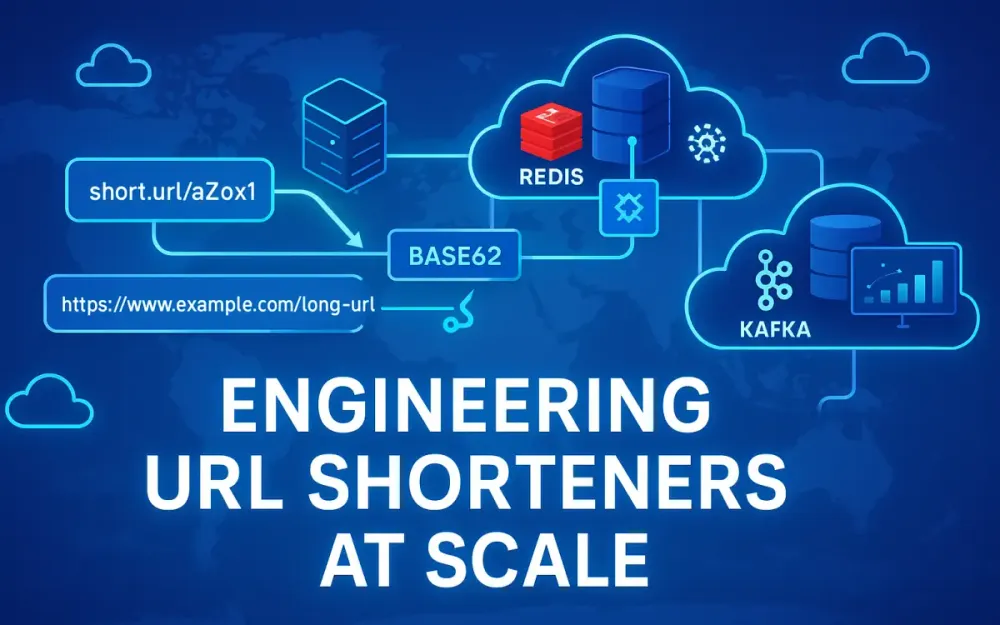

Building a URL shortener seems simple at first — map a short code to a long URL, redirect, and track clicks. But when you move beyond a prototype and aim for production-level scale — billions of redirects, multi-region latency budgets under 200 ms, sub-millisecond cache lookups, analytics aggregation, and fraud prevention — the problem space deepens rapidly. A production-grade URL shortener is a distributed system that touches almost every domain in modern backend engineering: globally unique ID generation, multi-tier caching, rate limiting, observability, and real-time analytics.

In this section, we’ll unpack why URL shorteners are challenging to engineer at scale and what constraints guide system design.

1.1 Problem Framing, SLOs, and Traffic Patterns

A shortener’s surface area hides two very different workloads — a read-dominated redirect path and a write-dominated analytics path.

1.1.1 Redirect Path – Read-Heavy, Latency Sensitive

The redirect path handles incoming HTTP requests for shortened URLs, e.g.

https://sho.rt/xYz123 → https://example.com/article?id=42.

At scale, this path must:

- Resolve the short code to its original URL, ideally from memory or a local cache.

- Respond with a 301 or 302 redirect in under 200 ms (p95) for global users, matching the SLO in 1.1.3.

- Absorb unpredictable spikes — think viral social media posts or marketing campaigns.

The workload is almost entirely read-only. Writes occur rarely (link creation, edit, or deletion). This asymmetry allows aggressive caching strategies: edge CDN caching, Redis, and in-process caches layered to absorb traffic bursts.

A realistic ratio in production is 1000 reads per write or higher. The design principle here: optimize for reads, isolate writes. Analytics collection, admin dashboards, and fraud scanning must never slow down redirects.

1.1.2 Analytics Path – Write-Heavy, Eventually Consistent

Every redirect also emits telemetry: IP, timestamp, device, referrer, country, campaign, etc. This data fuels dashboards, click counters, and anomaly detection. It’s write-heavy, append-only, and latency tolerant — users don’t expect dashboards to update instantly.

Separating this write-heavy path into a streaming pipeline (Kafka → ClickHouse, for instance) prevents analytics ingestion from backpressuring the redirect API. The split borrows from CQRS (Command Query Responsibility Segregation), in which each path gets its own model, store, and scaling profile:

- Reads = hot path (redirect).

- Writes = asynchronous analytics ingestion.
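The split above can be sketched in-process with a bounded channel: the redirect handler hands a click event to a buffer and returns immediately, while a background loop (not shown) drains the buffer toward Kafka. This is a minimal sketch under assumptions; `ClickEvent`, `ClickBuffer`, and the drop-when-full policy are illustrative names and choices, not part of the original design.

```csharp
using System;
using System.Threading;
using System.Threading.Channels;

// Hypothetical event shape; field names are illustrative.
public readonly record struct ClickEvent(string Code, DateTimeOffset At);

public sealed class ClickBuffer
{
    private readonly Channel<ClickEvent> _channel;
    private long _dropped;

    public ClickBuffer(int capacity)
    {
        _channel = Channel.CreateBounded<ClickEvent>(new BoundedChannelOptions(capacity)
        {
            // In Wait mode, TryWrite returns false when the buffer is full
            // instead of blocking the caller.
            FullMode = BoundedChannelFullMode.Wait,
            SingleReader = true
        });
    }

    // Number of events discarded because the buffer was full.
    public long Dropped => Interlocked.Read(ref _dropped);

    // A background publisher (for example, a Kafka producer loop) drains this reader.
    public ChannelReader<ClickEvent> Reader => _channel.Reader;

    // Called on the redirect path: never awaits, never throws when the buffer is full.
    public bool TryPublish(ClickEvent e)
    {
        if (_channel.Writer.TryWrite(e)) return true;
        Interlocked.Increment(ref _dropped);
        return false;
    }
}
```

Dropping events under pressure trades analytics completeness for redirect latency, which matches the rule that analytics must never slow down redirects; a system with stricter completeness targets might spill to local disk instead.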

1.1.3 SLOs and System Boundaries

A typical service-level objective (SLO) stack for a high-scale URL shortener looks like:

| Path | Operation | p95 Latency | Availability / Completeness Target | Data Path |

|---|---|---|---|---|

| Redirect | GET /{code} | < 200 ms global | 99.9% uptime | Edge CDN + cache + store |

| Create Short Link | POST /api/links | < 100 ms | 99.5% | App + DB + cache |

| Analytics | Stream insert | < 5 s lag | 99% processed | Kafka → ClickHouse |

A core architectural decision follows: treat redirect and analytics as separate services with independent scaling and failure domains. Even if the analytics pipeline fails, redirects must continue.

1.1.4 Example: Spike Traffic Patterns

Traffic to a shortener isn’t uniform; it’s bursty and skewed. A single viral link can produce 80% of the day’s traffic within minutes. Caching must absorb this without melting backend stores.

In practice:

- The top 1% of links account for 90%+ of requests.

- Long-tail links are rarely hit.

- Write volume (new links) stays stable; reads fluctuate dramatically.

Caching and edge distribution design must anticipate this Pareto pattern. Redis hot-key protection, CDN prewarming, and jittered TTLs are essential defenses.

1.2 Real-World Scale and Latency Expectations

When companies like Bitly, TinyURL, and Firebase Dynamic Links share scale stories, a few patterns repeat: billions of redirects per day, millisecond-level response times, and multi-region availability.

1.2.1 Scale Metrics from Industry Cases

- Bitly reported handling tens of billions of clicks per month globally.

- Twitter’s t.co operates at hundreds of thousands of requests per second during peaks.

- Firebase Dynamic Links integrates with Google infrastructure, serving redirects through Cloud CDN and Anycast routing — global sub-200 ms latency.

These numbers are realistic targets for modern infrastructure with edge caching and efficient stores.

1.2.2 Latency Budgets Breakdown

To hit a global p95 < 200 ms, we can allocate roughly:

| Layer | Target Budget |

|---|---|

| Edge/CDN lookup | 20 – 40 ms |

| App server (cache hit) | 10 – 30 ms |

| Redis/DB lookup (miss) | 50 – 80 ms |

| Network variance | 20 – 50 ms |

This budget leaves almost no room for synchronous dependencies like analytics inserts or complex DB joins. The redirect path must be pure lookup + redirect — every extra call or serialization hurts.

1.2.3 Global Distribution and Multi-Region Consistency

Global latency under 200 ms implies multi-region read replicas and eventual consistency. Redirects must succeed even if a region’s write DB is lagging.

For example:

- Create short links in a primary region (e.g., East US).

- Propagate mappings asynchronously to read replicas (e.g., EU, APAC).

- Serve redirects from the nearest region using Azure Front Door or global load balancers.

This separation of strongly consistent writes and eventually consistent reads is a fundamental trade-off.

1.3 Minimal Viable Components

A scalable shortener architecture has several minimal but critical components.

1.3.1 Short Code Generation Service

Generates unique, sortable IDs for every link. This is typically a Snowflake-style ID generator (discussed in Section 2). The generator ensures global uniqueness without coordination and preserves creation order for efficient database clustering.

1.3.2 Mapping Store

Stores the mapping from short code → long URL. It must offer:

- Sub-millisecond reads.

- High cache hit ratio.

- Regional replication for read locality.

Choices range from Redis (for cached mappings) to Cosmos DB, DynamoDB, or Cassandra for persistent storage.

1.3.3 Edge/CDN Layer

Offloads the majority of redirect requests by caching 301 responses. Azure Front Door or Cloudflare Workers can act as programmable edges for rules-based redirects, header manipulation, and cache invalidation.

1.3.4 Cache Tiers

Multi-level caching mitigates backend load:

- L1: In-memory dictionary in ASP.NET Core.

- L2: Distributed Redis cluster.

- Edge: CDN or Front Door cache.

Each layer uses short TTLs and request coalescing to handle bursts efficiently.

1.3.5 Analytics Ingestion Pipeline

Captures click events asynchronously via message queues (Kafka, Event Hubs). The backend writes these to ClickHouse for aggregation (see Section 6).

1.3.6 Rate Limiting and Observability

Rate limits protect APIs from abuse. Observability ensures the redirect path remains performant and healthy under load — using OpenTelemetry tracing, structured logs, and latency histograms.

1.3.7 Minimal Service Dependency Graph

Client → Edge/CDN → Redirect API

↘ Cache → Mapping Store

↘ Event → Kafka → Analytics Store

This separation of synchronous vs asynchronous paths defines a resilient, observable baseline for scaling.

2 Globally Unique, Sortable IDs: Snowflake in .NET

Short URL generation starts with unique ID creation. These IDs must be:

- Globally unique (no collisions across servers or regions)

- Sortable by time (for cache locality and analytics ordering)

- Compact enough to encode efficiently in Base62

- Fast to generate (millions per second under load)

That’s why most distributed systems use a Snowflake-like algorithm.

2.1 Snowflake ID Format Refresher

Twitter’s original Snowflake (2010) popularized a clever 64-bit ID layout:

| 1 unused bit | 41 bits timestamp | 10 bits worker id | 12 bits sequence |

2.1.1 Bit Allocation Breakdown

- 41 bits timestamp: milliseconds since a custom epoch. → ~69 years of lifetime.

- 10 bits worker ID: supports 1024 concurrent generators.

- 12 bits sequence: allows 4096 IDs per millisecond per worker.

This yields ~4.1 million unique IDs per second per worker (4096 × 1000), and up to 1024 workers can generate in parallel without coordination.
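The bit layout can be made concrete with a small pack/unpack helper. This is a sketch of the 41/10/12 layout described above; the `SnowflakeLayout` class and its method names are illustrative and not taken from any library.

```csharp
using System;

public static class SnowflakeLayout
{
    public const int WorkerBits = 10;
    public const int SequenceBits = 12;
    public const long MaxTimestamp = (1L << 41) - 1;          // ~69.7 years of milliseconds
    public const long MaxWorkerId = (1L << WorkerBits) - 1;   // 1023
    public const long MaxSequence = (1L << SequenceBits) - 1; // 4095

    // Packs the three fields into one positive 64-bit value (the sign bit stays unused).
    public static long Compose(long timestampMs, long workerId, long sequence)
    {
        if (timestampMs < 0 || timestampMs > MaxTimestamp) throw new ArgumentOutOfRangeException(nameof(timestampMs));
        if (workerId < 0 || workerId > MaxWorkerId) throw new ArgumentOutOfRangeException(nameof(workerId));
        if (sequence < 0 || sequence > MaxSequence) throw new ArgumentOutOfRangeException(nameof(sequence));
        return (timestampMs << (WorkerBits + SequenceBits)) | (workerId << SequenceBits) | sequence;
    }

    // Splits an ID back into (milliseconds since epoch, worker ID, sequence).
    public static (long TimestampMs, long WorkerId, long Sequence) Decompose(long id) =>
        (id >> (WorkerBits + SequenceBits), (id >> SequenceBits) & MaxWorkerId, id & MaxSequence);
}
```

Because the timestamp occupies the high bits, any ID from a later millisecond compares greater than every ID from an earlier one, regardless of worker or sequence.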

2.1.2 Why Sortable IDs Matter

Unlike random GUIDs, Snowflake IDs increase monotonically within a worker and are roughly time-ordered across workers, which enables:

- Efficient clustered indexes in databases (reduced fragmentation).

- Temporal ordering for analytics.

- Cache warm-up patterns (recent links are adjacent in ID space).

Sequential locality improves cache and disk performance — adjacent inserts are co-located, which helps both relational and NoSQL stores.

2.1.3 Snowflake vs Random IDs

GUIDs are easy but expensive:

- 16 bytes instead of 8 bytes.

- Non-sequential → cause B-tree splits and fragmentation.

- Harder to compress or encode compactly in Base62.

Snowflake balances uniqueness, time order, and efficiency, making it ideal for URL shorteners.

2.2 Choosing a .NET Implementation

Several mature .NET libraries implement Snowflake-like algorithms efficiently.

2.2.1 IdGen (NuGet)

A popular, actively maintained library supporting configurable bit layouts and epochs.

Example configuration:

using IdGen;

var epoch = new DateTime(2020, 1, 1, 0, 0, 0, DateTimeKind.Utc);

var generator = new IdGenerator(0, new IdGeneratorOptions(timeSource: new DefaultTimeSource(epoch)));

var id = generator.CreateId();

Console.WriteLine(id); // 64-bit integer

You can customize:

- WorkerId bits (e.g., 10 bits → 1024 nodes)

- Sequence bits

- Custom epoch to extend lifetime

IdGen keeps IDs increasing within the same millisecond. When the sequence is exhausted, its default behavior is to throw; configure SequenceOverflowStrategy.SpinWait to wait for the next tick instead.

2.2.2 SnowflakeIDGenerator

A lightweight alternative with simpler configuration. However, it lacks span-based APIs and may allocate more under high throughput. For .NET 8 services that need millions of IDs per second, IdGen is preferred due to its performance and low GC overhead.

2.2.3 Time Epoch Selection

Choosing a recent custom epoch (e.g., 2020-01-01) keeps timestamp values small, so IDs and their Base62 codes stay shorter early in the system’s life, and it places the 41-bit overflow roughly 69 years after that epoch.

Always fix the epoch in configuration and never vary it at runtime: changing it after IDs have been issued breaks ordering and can cause collisions.

2.3 Worker ID Assignment at Scale

Each generator instance must have a unique worker ID across all running nodes. Several strategies exist:

2.3.1 Static Configuration

Simplest option for small clusters:

{

"Snowflake": {

"WorkerId": 7

}

}

Downside: operational overhead; you must ensure no duplicates.

2.3.2 Redis or etcd Lease Allocation

A central coordinator (Redis/etcd) hands out unique worker IDs dynamically:

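SET worker:{id} instance1 NX EX 60

SETNX alone cannot set an expiry, so the claim and the TTL go in a single SET with the NX and EX options. The node renews its lease periodically. If it crashes, another node can reclaim the ID after TTL expiry. This provides elasticity for auto-scaling environments.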

2.3.3 Kubernetes StatefulSets

In K8s, StatefulSet pods get deterministic ordinal indices (pod-0, pod-1, …).

You can derive workerId = ordinal.

This avoids central coordination entirely.
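A minimal sketch of deriving the worker ID from a StatefulSet pod name follows. It assumes the pod name (normally the container's hostname) ends in `-<ordinal>`; `WorkerIdResolver` and `FromPodName` are hypothetical names used only for illustration.

```csharp
using System;
using System.Globalization;

public static class WorkerIdResolver
{
    // Parses the trailing ordinal from a StatefulSet pod name such as "shortener-3".
    public static int FromPodName(string podName, int maxWorkers = 1024)
    {
        if (string.IsNullOrEmpty(podName))
            throw new ArgumentException("Pod name is required.", nameof(podName));

        int dash = podName.LastIndexOf('-');
        if (dash < 0 || dash == podName.Length - 1 ||
            !int.TryParse(podName.AsSpan(dash + 1), NumberStyles.None,
                          CultureInfo.InvariantCulture, out int ordinal))
        {
            throw new FormatException($"'{podName}' does not end in a numeric ordinal.");
        }

        // The ordinal must fit in the worker-ID field (10 bits by default).
        if (ordinal >= maxWorkers)
            throw new InvalidOperationException($"Ordinal {ordinal} exceeds the worker ID range.");

        return ordinal;
    }
}
```

At startup the service could call `WorkerIdResolver.FromPodName(Environment.MachineName)` and fail fast if the name does not parse, rather than silently falling back to a shared default.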

2.3.4 Azure VMSS Metadata

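In Azure, instance metadata can seed a worker ID at startup. The example reads WEBSITE_INSTANCE_ID, which Azure App Service sets; on VMSS, read the instance ID from the Instance Metadata Service instead. Hashing into 1024 slots can collide, so confirm uniqueness (for example, with a lease as in 2.3.2) before using the result:

// requires: using System.Buffers.Binary; using System.Security.Cryptography; using System.Text;

string instanceId = Environment.GetEnvironmentVariable("WEBSITE_INSTANCE_ID") ?? throw new InvalidOperationException("WEBSITE_INSTANCE_ID is not set.");

// string.GetHashCode() is randomized per process on .NET Core, so hash with SHA-256 instead.

var workerId = BinaryPrimitives.ReadUInt16BigEndian(SHA256.HashData(Encoding.UTF8.GetBytes(instanceId))) % 1024;

2.3.5 Recommendation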

For cloud-native environments:

- Use Kubernetes ordinal IDs for predictability.

- Fall back to Redis leases for auto-scaling pools.

2.4 Defending Against Clock Skew and Restarts

Clock drift or NTP jumps can cause catastrophic collisions if timestamps go backward.

2.4.1 Monotonic Time Enforcement

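Wall-clock time can step backward after an NTP correction, so clamp each reading to the last timestamp issued (or measure elapsed time with Stopwatch, which is monotonic):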

private long _lastTimestamp = -1L;

private long CurrentTimestamp()

{

var ts = DateTimeOffset.UtcNow.ToUnixTimeMilliseconds();

if (ts < _lastTimestamp)

ts = _lastTimestamp; // force monotonic

return _lastTimestamp = ts;

}

2.4.2 Sequence Exhaustion Handling

If all 4096 sequence slots within a millisecond are used, block until the next millisecond.

while (timestamp <= lastTimestamp)

timestamp = DateTimeOffset.UtcNow.ToUnixTimeMilliseconds();

In production, this blocking time is typically sub-millisecond and tolerable.

2.4.3 Handling NTP Time Jumps

Guardrails:

- Compare timestamps against persisted last ID at startup.

- If clock moves backward > 1 s, sleep until caught up.

- Use chrony or Azure Time Sync for minimal skew (< 1 ms typical).

2.4.4 Restart Safety

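Persist the last issued timestamp and, on startup, wait until the clock has passed it before generating new IDs. The sequence restarts at zero after a restart, so uniqueness holds only while worker IDs don’t overlap and the timestamp never repeats for the same worker.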
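The guardrails in this section can be combined into one small generator. The sketch below is illustrative rather than a production implementation: `GuardedSnowflake` is a hypothetical class, the clock is injected so the behavior can be tested, and on sequence exhaustion it advances a logical timestamp by one millisecond instead of spin-waiting (a simplification that keeps the example deterministic).

```csharp
using System;

public sealed class GuardedSnowflake
{
    private const int WorkerBits = 10, SequenceBits = 12;
    private const long MaxSequence = (1L << SequenceBits) - 1;

    private readonly long _workerId;
    private readonly Func<long> _clockMs; // milliseconds since the custom epoch
    private readonly object _gate = new();
    private long _lastTs = -1;
    private long _sequence;

    public GuardedSnowflake(long workerId, Func<long> clockMs)
    {
        if (workerId < 0 || workerId >= (1L << WorkerBits))
            throw new ArgumentOutOfRangeException(nameof(workerId));
        _workerId = workerId;
        _clockMs = clockMs ?? throw new ArgumentNullException(nameof(clockMs));
    }

    public long NextId()
    {
        lock (_gate)
        {
            long now = _clockMs();
            if (now < 0) throw new InvalidOperationException("Clock is before the epoch.");

            // Clamp: if the wall clock stepped backward, keep using the last timestamp.
            long ts = Math.Max(now, _lastTs);

            if (ts == _lastTs)
            {
                if (_sequence == MaxSequence)
                {
                    // Sequence exhausted: move the logical timestamp forward by 1 ms.
                    ts = _lastTs + 1;
                    _sequence = 0;
                }
                else
                {
                    _sequence++;
                }
            }
            else
            {
                _sequence = 0;
            }

            _lastTs = ts;
            return (ts << (WorkerBits + SequenceBits)) | (_workerId << SequenceBits) | _sequence;
        }
    }
}
```

Because the logical timestamp can run ahead of the wall clock under sustained load, this variant makes persisting the last timestamp across restarts more important, not less.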

2.5 Benchmarking Snowflake Throughput in ASP.NET Core

In high-traffic APIs, generating IDs must not become a bottleneck.

2.5.1 Singleton Generator

Instantiate IdGenerator as a singleton (e.g., via DI):

services.AddSingleton<IIdGenerator<long>>(_ =>

{

var epoch = new DateTime(2020, 1, 1, 0, 0, 0, DateTimeKind.Utc);

return new IdGenerator(1, new IdGeneratorOptions(timeSource: new DefaultTimeSource(epoch)));

});

Avoid per-request instantiation: generators that share a worker ID but not their sequence state can emit duplicate IDs, and the extra allocations hurt throughput.

2.5.2 Allocation-Free Usage

IdGen uses simple long return values — no heap allocations.

Encoding into Base62 adds minimal overhead (we’ll cover it in Section 3).

2.5.3 Performance Baseline

On a 2023 Azure D4ds v5 (4 vCPU), you can expect:

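- Up to ~4.1 million IDs/sec per generator, the ceiling set by a 12-bit sequence (4096 IDs × 1000 ms); higher rates need more sequence bits or several worker IDs

- < 0.2 µs per ID (99th percentile)

- No per-ID heap allocations, since IDs are plain long values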

2.5.4 Thread Safety

The generator internally synchronizes sequence increments. For extreme throughput (e.g., batch inserts), consider a small pool of generators partitioned by worker IDs.

2.6 Alternatives and Trade-Offs

Snowflake is a strong default, but alternatives exist with distinct trade-offs.

2.6.1 ULIDs

- 128 bits (UUID compatible)

- Lexicographically sortable

- Encoded in Crockford Base32 (26 chars)

Pros: widely interoperable, human-safe. Cons: larger payload → much longer short codes (26 characters versus ~11 for a 64-bit ID in Base62).

2.6.2 KSUIDs

- 160 bits

- 27-char Base62 encoding

- Embeds a 4-byte timestamp (one-second resolution) plus 16 random bytes

Better cross-language portability, but overhead is higher and IDs aren’t native longs.

2.6.3 Database Sequences

Simple but centralized — hard to scale horizontally. Good for prototypes, poor for distributed systems.

2.6.4 GUIDs / UUIDv4

- Collision probability negligible.

- Non-sequential → poor cache and index performance.

2.6.5 Recommendation

For high-volume .NET systems:

- Use Snowflake IDs (IdGen) for main link IDs.

- Optionally expose ULIDs for external APIs if human readability matters.

3 Turning IDs into Human-Readable Short Codes

Now that we have unique numeric IDs, the next step is converting them into compact, URL-safe short codes.

3.1 Base Alphabet Choices: Base62 vs Base58 vs Base36

Encoding numeric IDs efficiently requires choosing a character alphabet. Let’s analyze the trade-offs.

3.1.1 Base62 – Default for Modern Shorteners

Alphabet: [0-9A-Za-z] → 62 symbols.

Max density → shortest possible code length.

Example:

- 64-bit ID → ~11 characters in Base62.

- Fast to encode/decode using integer arithmetic.

3.1.2 Base58 – Human-Friendly Variant

Alphabet: excludes ambiguous characters (0, O, I, l, +, /).

Common in Bitcoin addresses.

Pros:

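- Easier to read/enter manually.

Cons:

- Marginally less dense than Base62, although a 64-bit ID still fits in 11 characters (58^11 > 2^64).

- No practical speed advantage: encoding uses the same divide-and-remainder arithmetic as Base62.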

3.1.3 Base36 – Simpler, Case-Insensitive

Alphabet: [0-9A-Z].

Pros:

- Case-insensitive URLs (good for brand links).

- Easy to store and search in databases.

Cons:

- Less dense (36 symbols) → longer codes (~13 chars for 64-bit).

3.1.4 Practical Recommendations

| Use Case | Encoding | Rationale |

|---|---|---|

| Public shortener | Base62 | Shortest codes |

| Human-entered codes | Base58 | Avoid confusion |

| Corporate internal links | Base36 | Simplicity, lowercase |
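The code lengths behind these recommendations can be checked with a few lines of arithmetic. The helper below is a sketch (the `CodeLength` name is illustrative) that counts how many digits the largest 64-bit value needs in a given radix.

```csharp
using System;

public static class CodeLength
{
    // Number of digits needed to write maxValue in the given radix.
    public static int DigitsFor(ulong maxValue, int radix)
    {
        if (radix < 2) throw new ArgumentOutOfRangeException(nameof(radix));
        int digits = 1;
        while (maxValue >= (ulong)radix)
        {
            maxValue /= (ulong)radix;
            digits++;
        }
        return digits;
    }
}
```

For the full unsigned 64-bit range this gives 11 characters for Base62, 11 for Base58, and 13 for Base36, so the readability gain of Base58 costs nothing in length at this ID size.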

3.2 Production-Grade Base62 in .NET

In .NET 8, we can encode 64-bit integers into Base62 without allocations or lookups using Span<char>.

3.2.1 Recommended Libraries

- Base62 (System.Base62)

- Base62-Net

- Base62.Conversion

All are available on NuGet, but extension-method names differ between packages and versions, so treat the calls below as illustrative. Example using Base62-Net:

using Base62;

long id = 1538472938472;

string code = id.ToBase62();

Console.WriteLine(code); // e.g. "4gfE92A"

To decode:

long decoded = code.FromBase62();

3.2.2 Performance Notes

A span-based encoder avoids allocations:

Span<char> buffer = stackalloc char[11];

int len = Base62Converter.Encode(id, buffer);

return new string(buffer[..len]);

This pattern avoids intermediate buffer allocations; only the final string reaches the heap.
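For readers who prefer not to depend on a package, a span-based encoder and decoder fit in a few dozen lines. This is a self-contained sketch, not the API of any of the libraries listed above; `Base62Codec` is a hypothetical name, and the alphabet order (digits, uppercase, lowercase) is an assumption that must match on both sides.

```csharp
using System;

public static class Base62Codec
{
    private const string Alphabet =
        "0123456789ABCDEFGHIJKLMNOPQRSTUVWXYZabcdefghijklmnopqrstuvwxyz";

    public static string Encode(ulong value)
    {
        // 11 characters are enough for any 64-bit value because 62^11 > 2^64.
        Span<char> buffer = stackalloc char[11];
        int pos = buffer.Length;
        do
        {
            buffer[--pos] = Alphabet[(int)(value % 62)];
            value /= 62;
        } while (value != 0);
        return new string(buffer[pos..]);
    }

    public static ulong Decode(ReadOnlySpan<char> code)
    {
        if (code.IsEmpty || code.Length > 11)
            throw new FormatException("Base62 code must be 1 to 11 characters long.");

        ulong value = 0;
        foreach (char c in code)
        {
            int digit = c switch
            {
                >= '0' and <= '9' => c - '0',
                >= 'A' and <= 'Z' => c - 'A' + 10,
                >= 'a' and <= 'z' => c - 'a' + 36,
                _ => throw new FormatException($"Invalid Base62 character '{c}'.")
            };
            // checked: reject codes that would overflow 64 bits instead of wrapping.
            value = checked(value * 62 + (ulong)digit);
        }
        return value;
    }
}
```

Snowflake IDs are non-negative, so casting the `long` to `ulong` before encoding is lossless.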

3.2.3 Custom Alphabets and Profanity Filters

You can define a custom alphabet that drops visually ambiguous characters; dropping vowels as well prevents most accidental words:

var alphabet = "0123456789ABCDEFGHJKLMNPQRSTUVWXYZabcdefghijkmnopqrstuvwxyz"; // skips I, O, l → 59 symbols

var base62 = new Base62Converter(alphabet);

Maintaining brand-safe short codes is a real operational need for consumer-facing systems.

3.3 Canonicalization and Normalization

Encoding isn’t enough — you must define deterministic URL behavior.

3.3.1 Case Sensitivity

Base62 is case-sensitive. However, many organizations normalize codes to lowercase for user-friendliness, sacrificing density.

Policy options:

- Case-sensitive: shortest possible codes, but requires exact match.

- Case-insensitive: safer for typing; convert all to lowercase.

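Enforce via canonical redirect middleware. This is only safe when codes are generated from a single-case alphabet (e.g., Base36); lowercasing mixed-case Base62 codes would map distinct links to the same code:

if (code != code.ToLowerInvariant())

return RedirectPermanent($"https://sho.rt/{code.ToLowerInvariant()}");

3.3.2 Reserved Word Filtering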

Avoid generating offensive or misleading codes (e.g., BAD123, ADMIN).

Approach:

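- Maintain a blocklist of forbidden substrings and scan each candidate code against it (a Bloom filter only answers exact-membership queries, so it cannot match substrings).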

- Regenerate code until it passes filter.
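The filter-and-regenerate loop might look like the sketch below. It uses a plain case-insensitive substring scan, which is an assumption that suits a short list of forbidden words; `CodeBlocklist` and its members are illustrative names, and the candidate source is supplied by the caller (for example, encoding the next Snowflake ID).

```csharp
using System;
using System.Collections.Generic;

public sealed class CodeBlocklist
{
    private readonly string[] _forbidden;

    public CodeBlocklist(IEnumerable<string> forbiddenSubstrings)
    {
        _forbidden = new List<string>(forbiddenSubstrings).ToArray();
    }

    // True when the code contains none of the forbidden substrings (case-insensitive).
    public bool IsAllowed(string code)
    {
        foreach (var word in _forbidden)
        {
            if (code.Contains(word, StringComparison.OrdinalIgnoreCase))
                return false;
        }
        return true;
    }

    // Draws candidates until one passes the filter, with a bounded number of attempts.
    public string NextAllowed(Func<string> nextCandidate, int maxAttempts = 16)
    {
        for (int i = 0; i < maxAttempts; i++)
        {
            string code = nextCandidate();
            if (IsAllowed(code)) return code;
        }
        throw new InvalidOperationException("No acceptable code found within the attempt limit.");
    }
}
```

Bounding the attempts keeps a misconfigured blocklist (one that rejects nearly everything) from turning link creation into an infinite loop.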

3.3.3 Deterministic Redirects (301 vs 302)

- 301 (Permanent) → cacheable by browsers/CDNs; better for static links.

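- 302 (Temporary) → not cached unless response headers explicitly allow it; used during testing or analytics A/B routing.

For production shorteners, use 301 once the destination URL is stable. This allows CDN-level caching of redirects for massive scale gains. The trade-off is that browsers also cache 301s, so repeat clicks from the same browser may never reach the shortener or its click counters.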

3.3.4 CDN Cacheability

Ensure redirect responses include:

Cache-Control: public, max-age=86400

Location: https://example.com

This lets Azure Front Door or Cloudflare cache responses safely. Case normalization also improves cache hit ratio: one canonical form means one cache key.

3.4 Two Mapping Patterns

Two dominant design patterns exist for generating and resolving short codes.

3.4.1 ID → Code → URL (Recommended)

Process:

- Generate Snowflake ID → 64-bit integer.

- Encode to Base62 → short code.

- Store mapping {id → long URL}.

This method guarantees uniqueness and predictable length.

Example:

var id = idGenerator.CreateId();

var code = id.ToBase62();

await db.SetAsync($"code:{code}", originalUrl);

Redirect lookup:

var url = await cache.GetAsync($"code:{code}");

if (url is null) return NotFound(); // unknown or deleted code

return RedirectPermanent(url);

Pros:

- No collisions.

- Sortable IDs → good data clustering.

- Easy replication and sharding.

Cons:

- Codes are predictable (sequential). Add random prefix if obfuscation is needed.

3.4.2 Hash → Collision Resolution

Alternative: compute a hash (e.g., SipHash or Murmur3) of the long URL.

ulong hash = SipHasher.Hash(url);

string code = hash.ToBase62();

If collision:

- Append retry counter or rehash until unique.

Pros:

- Deterministic for identical URLs.

- No global ID generator needed.

Cons:

- Collisions must be handled carefully.

- Harder to shard or sort.

- Identical URLs submitted by different users share one short code, which complicates per-owner analytics, edits, and deletion.
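A compact sketch of the hash-and-retry pattern follows. It swaps in SHA-256 (truncated to 64 bits) for SipHash or Murmur3 because it ships with .NET, uses an in-memory dictionary as a stand-in for the mapping store, and salts retries with an attempt counter; `HashShortener` and all of its members are hypothetical.

```csharp
using System;
using System.Buffers.Binary;
using System.Collections.Generic;
using System.Security.Cryptography;
using System.Text;

public sealed class HashShortener
{
    private const string Alphabet =
        "0123456789ABCDEFGHIJKLMNOPQRSTUVWXYZabcdefghijklmnopqrstuvwxyz";

    // code -> URL; stands in for the real mapping store.
    private readonly Dictionary<string, string> _store = new(StringComparer.Ordinal);
    private readonly int _codeLength;

    public HashShortener(int codeLength = 7)
    {
        // A 64-bit hash yields at most 10 unbiased Base62 digits (62^10 < 2^64).
        if (codeLength < 1 || codeLength > 10)
            throw new ArgumentOutOfRangeException(nameof(codeLength));
        _codeLength = codeLength;
    }

    public string Shorten(string url)
    {
        for (int attempt = 0; attempt < 8; attempt++)
        {
            // Retries append a counter so a collision produces a different hash.
            string input = attempt == 0 ? url : $"{url}#{attempt}";
            string code = CodeFor(input);

            if (!_store.TryGetValue(code, out var existing))
            {
                _store[code] = url;
                return code;
            }
            if (existing == url) return code; // same URL maps to the same code
        }
        throw new InvalidOperationException("Could not find a free code.");
    }

    private string CodeFor(string input)
    {
        byte[] digest = SHA256.HashData(Encoding.UTF8.GetBytes(input));
        ulong value = BinaryPrimitives.ReadUInt64BigEndian(digest);
        var chars = new char[_codeLength];
        for (int i = 0; i < chars.Length; i++)
        {
            chars[i] = Alphabet[(int)(value % 62)];
            value /= 62;
        }
        return new string(chars);
    }
}
```

Against a real store, the check-then-insert step would need to be a conditional write (insert only if the key is absent) so that two instances cannot claim the same code concurrently.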

3.4.3 Operational Comparison

| Pattern | Pros | Cons |

|---|---|---|

| Snowflake + Base62 | Predictable, high throughput, sortable | Sequential, needs worker IDs |

| Hash + Retry | Stateless, simple deploy | Collision handling, inconsistent latency |

For distributed shorteners with high write throughput and analytics correlation, Snowflake → Base62 remains the gold standard.

4 Storage & Multi-Tier Caching for the Redirect Hot Path

By this point, we have short codes that are compact, globally unique, and easily encodable. The next challenge is serving those codes fast — ideally within tens of milliseconds — even under extreme load. This means designing a storage layer and caching hierarchy that can handle billions of lookups per day with predictable latency.

The redirect hot path should never touch persistent storage unless necessary. Each additional hop increases tail latency and can amplify downstream failures. To avoid this, production systems use multi-tier caches that absorb the majority of requests before they ever reach a database.

4.1 The Mapping Store: Simple Key→URL

At its core, a URL shortener’s data model is deceptively small:

CREATE TABLE UrlMappings (

Code VARCHAR(12) COLLATE Latin1_General_100_BIN2 PRIMARY KEY, -- binary collation: Base62 codes are case-sensitive

DestinationUrl NVARCHAR(2048) NOT NULL,

CreatedAt DATETIME2 NOT NULL,

OwnerId BIGINT NULL,

IsActive BIT NOT NULL DEFAULT 1

);

The design goal is simple: given a short code, return the original URL in constant time. But how you store and replicate this mapping determines your latency ceiling.

4.1.1 Backing Store Options

Relational (SQL) – Good for transactional consistency (link creation, edits).

Azure SQL or PostgreSQL work well up to hundreds of millions of records, especially with SSD-backed read replicas. Use indexed lookups by Code.

Wide-column / NoSQL (Cosmos DB, DynamoDB, Cassandra) – Ideal for globally distributed reads. Each region can serve lookups locally while asynchronously replicating writes. Cosmos DB’s multi-region read replicas can serve point reads by key in under 10 ms within a region.

Cloud Key-Value Stores – At hyperscale, many systems migrate to dedicated KVs (e.g., Google Cloud Bigtable, Redis Enterprise, FoundationDB). Bitly, for example, moved its link data into a globally replicated KV to prioritize read latency and elasticity. KV stores excel when your access pattern is a single key lookup and the schema is stable.

4.1.2 Designing for Read Amplification

Reads vastly outnumber writes, so every design decision must favor lookup speed. Avoid:

- Overfetching unnecessary columns

- Secondary index lookups

- Joins or filtering logic

Keep payloads small and hot in cache:

- Compress long URLs at rest using Brotli or GZip (optional)

- Store metadata (owner, analytics ID) separately to avoid bloating cache entries

4.1.3 Regional Replication

Global users shouldn’t incur transcontinental hops for lookups. Configure multi-region replicas where reads occur closest to the user:

- In Azure, use Azure SQL active geo-replication or Cosmos DB with multiple read regions.

- Deploy cache and API in the same region as the replica.

Replication lag is acceptable for this workload — a few seconds delay in a new link appearing globally won’t affect existing redirects.

4.2 Cache Hierarchy Design

Caching absorbs bursty traffic and isolates the database from hot spots. A multi-tier approach ensures each layer handles different time scales of volatility.

4.2.1 L1 – In-Process Cache

Every API instance maintains a lightweight dictionary for the hottest keys:

private readonly MemoryCache _cache = new(new MemoryCacheOptions

{

SizeLimit = 100_000

});

public async Task<string?> GetUrlAsync(string code)

{

if (_cache.TryGetValue(code, out string url))

return url;

url = await redis.GetStringAsync($"code:{code}");

if (url != null)

_cache.Set(code, url, new MemoryCacheEntryOptions { AbsoluteExpirationRelativeToNow = TimeSpan.FromSeconds(10), Size = 1 });

return url;

}

The TTL is short (5–15 seconds). This small buffer smooths out microbursts of repeated hits for the same short code, especially during viral traffic spikes.

4.2.2 L2 – Redis Cluster

Cross-node cache sharing is handled by Redis. Azure Cache for Redis (Enterprise tier) supports clustering and modules such as RedisBloom for probabilistic filtering.

Each app instance queries Redis when its local cache misses. Redis then holds tens of millions of entries, often sharded by hash slot to distribute load.

Key conventions:

code:{shortCode} → destination URL

meta:{shortCode} → metadata JSON (owner, createdAt)

TTL values are tuned based on URL churn rate (commonly 1–6 hours). Updates use cache-aside or write-through patterns (covered later in 4.7).

4.2.3 L3 – Edge Cache

Finally, the edge layer — Azure Front Door, Cloudflare, or another CDN — caches 301 redirect responses themselves. This eliminates backend calls entirely for popular links.

Combined, these layers can push the share of requests that reach the database below 0.1% during peak events.

4.3 Azure Front Door/CDN Specifics for Redirects

Edge caching of redirects isn’t identical to static asset caching.

Permanent redirects (301) are cacheable by default; temporary (302) are not.

Knowing how Azure Front Door (AFD) and Azure CDN handle these differences is key to maintaining predictable cache behavior.

4.3.1 Cache Behavior

When returning a 301 response, include explicit headers:

Cache-Control: public, max-age=86400

Location: https://example.com/article/123

With caching enabled on the route, AFD caches this response keyed by URL path. If your redirect endpoint includes query parameters (e.g., tracking tags), configure query-string keying (flag names differ between CLI extension versions, so confirm them with --help):

az network front-door routing-rule update \

--front-door-name myshortener \

--resource-group prod \

--query-parameter-strip-behavior StripNone

4.3.2 Rules Engine Overrides

AFD’s Rules Engine can override cache duration or force bypass for specific paths.

Example: keep static redirects cached for a day but exclude /api/admin/* from the rule:

{

"name": "RedirectCacheRules",

"actions": [

{ "name": "CacheExpiration", "parameters": { "cacheDuration": "1.00:00:00" } }

],

"conditions": [

{ "name": "RequestPath", "parameters": { "operator": "BeginsWith", "matchValues": ["/api/admin"] }, "negateCondition": true }

]

}

4.3.3 Purge APIs and Propagation

Use the purge API to invalidate a specific redirect globally:

az network front-door purge-endpoint \

--resource-group prod \

--name myshortener \

--content-paths "/abc123"

Expect ~30–90 seconds propagation globally. For campaign-scale updates (hundreds of links), batch purges to minimize latency.

4.3.4 Permanent Redirects Improve Hit Ratio

Using 301s allows browsers, CDNs, and edge PoPs to cache responses independently. If your application’s URLs rarely change, prefer 301s: for popular links, edge hit ratios of 80–90% or more are common.

4.4 Cache Warming (Pre-Load) Strategies

Cold caches can cause severe latency spikes at launch. Warming high-traffic short codes before campaigns ensures users see consistent performance from the first hit.

4.4.1 Pre-Warming High-QPS Codes

Before launching a marketing event:

- Identify top short codes expected to receive heavy load.

- Issue synthetic GET requests to populate caches (curl -I would send HEAD, which may not fill the cache):

curl -s -o /dev/null -D - https://sho.rt/abc123

- Validate with CDN cache inspection (X-Cache: TCP_HIT headers).

4.4.2 Azure CDN “Preload”

Azure CDN (Premium tier) includes a Preload API that forces content into edge PoPs before real traffic arrives:

az cdn endpoint load \

--name shortener \

--profile-name shortener-profile \

--resource-group prod \

--content-paths "/abc123"

This reduces first-request latency for global launches.

4.4.3 Fallbacks When Not Available

If using Front Door (which doesn’t support Preload), simulate traffic via distributed probes — for example, with k6 or a custom Azure Function that periodically “pings” known hot links.

For micro-campaigns or time-limited events, this warming often saves thousands of cold-start misses in Redis and DB.

4.5 Preventing Cache Stampedes & Hot-Key Meltdown

Even the best cache can fail under heavy concentration on a few keys. A cache stampede happens when multiple threads or instances recompute the same expired key simultaneously. Hot keys can overload Redis shards and cause cascading latency.

4.5.1 Single-Flight Locks

Use Redis-based locks so only one thread refreshes an expired entry:

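var lockKey = $"lock:{code}";

var token = Guid.NewGuid().ToString("N"); // identifies this holder so only it can release the lock

if (await db.LockTakeAsync(lockKey, token, TimeSpan.FromSeconds(5)))

{

try

{

// Only one request refreshes

var url = await FetchFromDbAsync(code);

await db.StringSetAsync($"code:{code}", url, TimeSpan.FromHours(1));

return url;

}

finally

{

await db.LockReleaseAsync(lockKey, token); // no-op if the lock expired and another holder took it

}

}

else

{

// Wait briefly for the lock holder, then fall back to the database if the cache is still empty

await Task.Delay(50);

var cached = await db.StringGetAsync($"code:{code}");

return cached.HasValue ? cached.ToString() : await FetchFromDbAsync(code);

}

4.5.2 Request Coalescing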

In ASP.NET Core, use a concurrent dictionary of tasks to avoid duplicate work within the same process:

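private readonly ConcurrentDictionary<string, Lazy<Task<string?>>> _inflight = new();

public async Task<string?> GetUrlAsync(string code)

{

// Lazy guarantees a single fetch even if GetOrAdd runs the factory more than once under contention

var lazy = _inflight.GetOrAdd(code, c => new Lazy<Task<string?>>(() => FetchAndCacheAsync(c)));

try { return await lazy.Value; }

// Remove only our own entry so a newer in-flight request is not evicted

finally { _inflight.TryRemove(new KeyValuePair<string, Lazy<Task<string?>>>(code, lazy)); }

}

4.5.3 Jittered TTLs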

Prevent synchronized cache expirations by randomizing TTL slightly:

var ttl = TimeSpan.FromHours(1) + TimeSpan.FromSeconds(Random.Shared.Next(-60, 61)); // ±60 s jitter

await redis.SetStringAsync($"code:{code}", url, new DistributedCacheEntryOptions { AbsoluteExpirationRelativeToNow = ttl });

4.5.4 Soft TTL + Background Refresh

Serve stale entries briefly while refreshing asynchronously:

if (IsExpiredSoft(entry))

{

_ = Task.Run(() => RefreshCacheAsync(code));

return entry.Value; // serve stale

}

This pattern maintains low tail latency during refresh storms.

4.5.5 Bloom Filters for Negative Caching

Add RedisBloom to filter invalid codes quickly:

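BF.ADD known_codes abc123

BF.EXISTS known_codes xyz999

If the Bloom filter says “not present,” the code definitely does not exist and you can short-circuit before hitting storage; a “present” answer can be a false positive and still needs a real lookup. This saves I/O for invalid codes that bots often spam.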
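To show why a negative answer is safe to trust, here is a tiny in-process Bloom filter. It is an illustration of the data structure, not a replacement for RedisBloom; `SimpleBloomFilter`, the FNV-1a hashing, and the double-hashing scheme are assumptions chosen for brevity.

```csharp
using System;
using System.Collections;

public sealed class SimpleBloomFilter
{
    private readonly BitArray _bits;
    private readonly int _hashCount;

    public SimpleBloomFilter(int bitCount, int hashCount)
    {
        if (bitCount <= 0) throw new ArgumentOutOfRangeException(nameof(bitCount));
        if (hashCount <= 0) throw new ArgumentOutOfRangeException(nameof(hashCount));
        _bits = new BitArray(bitCount);
        _hashCount = hashCount;
    }

    public void Add(string item)
    {
        for (int i = 0; i < _hashCount; i++)
            _bits[Index(item, i)] = true;
    }

    // false => definitely never added; true => probably added (false positives possible).
    public bool MightContain(string item)
    {
        for (int i = 0; i < _hashCount; i++)
            if (!_bits[Index(item, i)]) return false;
        return true;
    }

    // Double hashing: the i-th position is h1 + i * h2, reduced modulo the bit count.
    private int Index(string item, int i)
    {
        uint h1 = Fnv1a(item, 2166136261);
        uint h2 = Fnv1a(item, 0x9747b28c) | 1; // force odd so the step is never zero
        return unchecked((int)((h1 + (uint)i * h2) % (uint)_bits.Length));
    }

    private static uint Fnv1a(string s, uint seed)
    {
        uint hash = seed;
        unchecked
        {
            foreach (char c in s)
            {
                hash ^= c;
                hash *= 16777619;
            }
        }
        return hash;
    }
}
```

Every added item sets all of its bit positions, so a lookup that finds any of them clear proves the item was never added; that is the property the redirect path relies on when it rejects unknown codes early.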

4.6 Redis on Azure: What to Choose and Why

Redis tiers differ in performance, features, and SLAs. For URL shorteners, the right tier depends on traffic volume and feature use.

4.6.1 Tier Selection

| Tier | Best For | Features |

|---|---|---|

| Basic/Standard | Dev, test | Single node (Basic) or replicated pair (Standard), no clustering |

| Premium | Mid-scale | Clustering, persistence, VNet |

| Enterprise | High-scale | RedisBloom, RedisTimeSeries, RedisJSON |

| Enterprise Flash | Cost efficiency for >1 TB datasets | SSD-backed, lower memory cost |

Most production workloads fit in Enterprise for module support and HA clustering.

4.6.2 Clustering and Scaling

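Enable clustering with multiple shards for horizontal scaling. The az redis command group covers the Basic, Standard, and Premium tiers; Enterprise caches are managed through the separate az redisenterprise command group:

az redis create --name shortener-cache --resource-group prod \

--location eastus --sku Premium --vm-size p1 --shard-count 6

Redis Cluster automatically partitions keys by hash slot.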

Use client libraries that support cluster awareness — StackExchange.Redis handles slot routing transparently.

4.6.3 Using Modules

- RedisBloom → efficient negative caching (e.g., known/unknown short codes)

- RedisTimeSeries → lightweight operational metrics (cache hit ratio, latency histograms)

4.6.4 Shard Awareness

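Avoid multi-key operations (MGET, MSET) unless all keys share the same hash tag, the part of the key inside braces that Redis hashes to pick a slot:

code:{abc123}, meta:{abc123}

Both keys hash on abc123, so they land on the same shard and multi-key operations across them stay valid.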
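Slot assignment can be reproduced locally, which helps when checking whether two keys will share a shard. The sketch below follows the Redis Cluster rule (CRC16 of the key, or of the hash tag when one is present, modulo 16384); the `RedisHashSlot` class is an illustrative helper, not part of StackExchange.Redis.

```csharp
using System;
using System.Text;

public static class RedisHashSlot
{
    // Returns the cluster slot (0..16383) for a key.
    public static int For(string key)
    {
        ReadOnlySpan<byte> bytes = Encoding.UTF8.GetBytes(HashTagOrKey(key));
        return Crc16(bytes) % 16384;
    }

    // If the key contains "{...}" with a non-empty body, only that body is hashed.
    public static string HashTagOrKey(string key)
    {
        int open = key.IndexOf('{');
        if (open < 0) return key;
        int close = key.IndexOf('}', open + 1);
        if (close < 0 || close == open + 1) return key;
        return key.Substring(open + 1, close - open - 1);
    }

    // CRC-16/XMODEM: polynomial 0x1021, initial value 0, no reflection.
    public static ushort Crc16(ReadOnlySpan<byte> data)
    {
        ushort crc = 0;
        foreach (byte b in data)
        {
            crc ^= (ushort)(b << 8);
            for (int bit = 0; bit < 8; bit++)
            {
                crc = (crc & 0x8000) != 0
                    ? unchecked((ushort)((crc << 1) ^ 0x1021))
                    : unchecked((ushort)(crc << 1));
            }
        }
        return crc;
    }
}
```

With this helper, `code:{abc123}` and `meta:{abc123}` resolve to the same slot because only `abc123` is hashed, whereas keys with different tags are hashed independently and will usually land on different slots.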

4.6.5 Throughput Benchmarks

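Enterprise Redis clusters scale close to linearly as shards are added, but absolute numbers depend on SKU, payload size, and pipelining, so benchmark your own workload:

- Up to ~1M ops/sec per shard for small values under pipelined load

- < 1 ms latency for 90th percentile gets

- Scaling is an explicit operation (changing SKU or shard count), so plan capacity ahead of campaigns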

4.7 Operational Knobs

Caching is not “set and forget.” Fine-tuning key formats, TTLs, and refresh behavior ensures stability during peak loads.

4.7.1 Key Design

Follow consistent prefixes for clarity and grouping:

code:{shortCode} → destination

meta:{shortCode} → metadata

bloom:known → Bloom filter

Avoid random prefixes that spread hot keys across shards unnecessarily.

4.7.2 Value Size and TTL

Keep payloads small (<1 KB). Large payloads reduce Redis throughput and increase memory fragmentation. TTL recommendations:

- Hot links: 1–6 hours

- Long-tail links: up to 24 hours

- Inactive/deleted links: 15–30 minutes negative cache TTL

4.7.3 Cache-Aside vs Write-Through

Cache-aside (lazy load): Application loads data on miss and writes back to cache — simplest, minimal coordination.

Write-through: Every DB write also updates cache immediately. Good for low write frequency (e.g., link creation).

4.7.4 Versioned Entries on URL Edits

When URLs change, use versioned keys to avoid serving stale cache entries:

code:{shortCode}:v2 → new destination

Update version pointer in DB, not the cached value. This prevents stale 301 responses from being reused indefinitely by the CDN.
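A small model of the version-pointer idea follows. Dictionaries stand in for the database and the cache, and the class and method names are hypothetical. The key point is that an edit never overwrites a cached value; it only moves the pointer, so the old entry simply stops being read.

```python
class VersionedLinks:
    def __init__(self):
        self.versions = {}  # code -> list of URLs; list index + 1 is the version number
        self.current = {}   # code -> active version (the pointer kept in the DB)
        self.cache = {}     # versioned cache entries, e.g. "code:{abc123}:v2"

    def publish(self, code: str, url: str) -> int:
        self.versions.setdefault(code, []).append(url)
        self.current[code] = len(self.versions[code])
        return self.current[code]

    def rollback(self, code: str) -> int:
        # Rolling back moves the pointer; cached entries are left untouched.
        if self.current[code] > 1:
            self.current[code] -= 1
        return self.current[code]

    def resolve(self, code: str) -> str:
        version = self.current[code]
        key = f"code:{{{code}}}:v{version}"
        if key not in self.cache:
            self.cache[key] = self.versions[code][version - 1]
        return self.cache[key]


links = VersionedLinks()
links.publish("abc123", "https://example.com/old")
first = links.resolve("abc123")
links.publish("abc123", "https://example.com/new")
second = links.resolve("abc123")
links.rollback("abc123")
third = links.resolve("abc123")
```

In this run first and third are the old destination and second is the new one, with both versions living side by side in the cache until their TTLs expire.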

5 Edge and Network: Azure Front Door vs Azure CDN in 2025

The edge layer defines your global latency and cache efficiency. In 2025, Microsoft has consolidated most CDN and Front Door offerings into a unified global platform with integrated WAF, DDoS protection, and rules engines. Choosing the right combination of Front Door and CDN services is crucial for balancing latency, cost, and control.

5.1 When to Prefer Front Door vs CDN (and When to Stack Them)

5.1.1 Azure Front Door – The Default Edge for Dynamic Sites

Front Door is the recommended default for globally distributed web applications and APIs. It provides:

- Anycast global entry points with automatic load balancing

- Rules Engine for path-based routing

- WAF integration

- Edge caching for both static and dynamic responses

Front Door excels at redirect-heavy workloads since it handles dynamic routes and cacheable 301 responses gracefully.

Example configuration via ARM:

{

"frontendEndpoints": ["sho.rt"],

"routingRules": [

{

"routeConfiguration": {

"@odata.type": "#Microsoft.Azure.FrontDoor.Models.FrontdoorForwardingConfiguration",

"backendPool": "redirect-api"

},

"cachingConfiguration": {

"queryParameterStripDirective": "StripNone",

"cacheDuration": "00:10:00"

}

}

]

}

5.1.2 Azure CDN – Best for Bulk Static or Media Loads

Azure CDN (Verizon, Akamai, or Microsoft POPs) remains powerful for serving static content at scale: marketing pages, landing assets, or embedded campaign banners linked to short URLs.

For most modern systems:

- Use Front Door for dynamic redirect API

- Use CDN for static marketing assets

- Optionally stack both (CDN behind Front Door) for unified domain and WAF

5.1.3 Stacking Front Door and CDN

Stacking can be beneficial:

- Front Door handles routing, WAF, and TLS termination.

- CDN caches static pages or heavy assets behind it.

Request flow:

Client → Front Door (rules, WAF, TLS)

→ Azure CDN (cached content)

→ Origin (App Service)

This approach minimizes origin hits while maintaining control and observability.

5.2 Cache-Key Design at the Edge

Cache-key design determines your edge cache hit ratio. Poor keying leads to duplicated entries and unnecessary backend calls.

5.2.1 Key Components

Default key:

scheme + host + path + query

5.2.2 Query and Header Variations

For shorteners, path is usually enough:

https://sho.rt/{code}

Avoid varying on headers unless necessary.

If localization is required, vary on Accept-Language but not on Cookie.
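The keying rule above can be expressed as a small normalization function. This is only a model of what the edge would compute, not Front Door's actual algorithm; the function name and the "|lang=" suffix format are assumptions.

```python
from urllib.parse import urlsplit


def edge_cache_key(url: str, headers: dict, vary_on_language: bool = False) -> str:
    """Build a cache key from scheme, host, and path; ignore query strings and cookies."""
    parts = urlsplit(url)
    key = f"{parts.scheme}://{parts.netloc.lower()}{parts.path}"
    if vary_on_language:
        # Use only the first language tag so "fr-FR,fr;q=0.9" and "fr-FR" share an entry.
        first = headers.get("Accept-Language", "").split(",")[0]
        lang = first.split(";")[0].strip().lower()
        key += f"|lang={lang or 'default'}"
    return key


plain = edge_cache_key("https://sho.rt/abc123?utm_source=x", {"Cookie": "sid=1"})
localized = edge_cache_key(
    "https://sho.rt/abc123", {"Accept-Language": "fr-FR,fr;q=0.9"}, vary_on_language=True
)
```

Two requests that differ only in tracking parameters or cookies collapse to the same entry, which is what keeps the hit ratio high for viral links.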

Azure Front Door allows fine-grained cache-key control:

az network front-door rules-engine rule add \

--front-door-name shortener \

--action-name "ModifyCachingKey" \

--header-action "Accept-Language"

5.2.3 Stripping Set-Cookie Headers

By default, responses that contain a Set-Cookie header are treated as uncacheable.

Ensure your redirect API never sets cookies. Use:

Response.Headers.Remove("Set-Cookie");

Alternatively, configure AFD’s response header rewrite to strip them.

5.3 Invalidations & Automation

Stale redirects occasionally need purging when URLs are edited or disabled. Manual invalidations don’t scale; automate them via CI/CD or event hooks.

5.3.1 Purge APIs

Use Azure Front Door purge endpoint to invalidate paths:

az network front-door purge-endpoint \

--name shortener \

--content-paths "/abc123" "/xyz999"

For batch updates, push paths from a CI/CD pipeline step post-deployment.

5.3.2 CI/CD Hooks

In Azure DevOps or GitHub Actions:

- name: Purge CDN

run: az network front-door purge-endpoint \

--name shortener \

--content-paths "/$(UpdatedCodes)"

This ensures cache invalidation after link edits or deactivations.

5.3.3 Partial Key Patterns

AFD supports wildcard purges:

/campaign/*

Use with care: large pattern purges can temporarily drop hit ratios system-wide.

5.3.4 Observing Cache Hit Rates

Inspect response headers:

X-Cache: TCP_HIT

X-Azure-Ref: [trace-id]

Automate monitoring via Application Insights logs or synthetic probes to maintain 95%+ edge hit ratios for hot content.
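A synthetic probe can turn collected X-Cache values into a hit ratio. The sketch below assumes the probe has already gathered the header values into a list; the function name is illustrative, and treating any value containing "HIT" as a hit is a simplifying assumption.

```python
def edge_hit_ratio(x_cache_values) -> float:
    """Fraction of sampled responses that were served from an edge cache."""
    values = [v for v in x_cache_values if v]  # ignore responses without the header
    if not values:
        return 0.0
    hits = sum(1 for v in values if "HIT" in v.upper())
    return hits / len(values)


samples = ["TCP_HIT", "TCP_HIT", "TCP_MISS", "TCP_REMOTE_HIT", None]
ratio = edge_hit_ratio(samples)
below_target = ratio < 0.95
```

For this sample the ratio is 0.75, so below_target is True and the probe would raise an alert against the 95% goal.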

5.4 Pre-Launch Playbook

Launching a large marketing campaign or product drop often involves millions of initial hits to a few short URLs. Pre-launch preparation can prevent catastrophic cache misses and cold-edge latency.

5.4.1 Prime Hot Prefixes

Run prelaunch warmers to access each high-traffic short code from multiple regions:

k6 run warmup.js --vus 100 --duration 2m

This ensures each PoP (Point of Presence) has cached responses.

5.4.2 Synthetic Traffic to Warm PoPs

Schedule distributed probes across Azure regions to simulate traffic before real launch. Each probe requests a representative sample of codes.

5.4.3 Validate SLOs

Use Azure Load Testing or k6 to confirm:

- 95th percentile latency < 200 ms globally

- Redis hit ratio > 99%

- Edge cache hit ratio > 80%
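The three thresholds above can be checked mechanically once a load test has produced raw numbers. The sketch below uses a nearest-rank percentile; the function names and the shape of the result dictionary are assumptions.

```python
import math


def percentile(samples, pct: int):
    """Nearest-rank percentile of a non-empty sample list."""
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def check_slos(latencies_ms, redis_hit_ratio: float, edge_hit_ratio: float) -> dict:
    # Thresholds mirror the list above: p95 < 200 ms, Redis > 99%, edge > 80%.
    return {
        "p95_latency": percentile(latencies_ms, 95) < 200,
        "redis_hit_ratio": redis_hit_ratio > 0.99,
        "edge_hit_ratio": edge_hit_ratio > 0.80,
    }


report = check_slos(list(range(1, 101)), redis_hit_ratio=0.995, edge_hit_ratio=0.90)
ready = all(report.values())
```

A pre-launch gate can simply refuse to proceed unless every entry in the report is True.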

5.4.4 Regional Readiness Checks

Before opening traffic globally:

- Verify DNS propagation for the short domain

- Confirm SSL certificates are renewed and propagated

- Run curl -I from multiple regions to validate X-Cache: TCP_HIT

With these preparations, the shortener stays resilient even when a campaign goes viral within seconds — no cold caches, no regional lag, and consistent 301 performance worldwide.

6 Real-Time Analytics Pipeline in ClickHouse

The redirect service only needs to respond fast, but what happens afterward—those billions of clicks per day—drives business insight, abuse detection, and product metrics. You can’t feed this into a transactional database without destroying latency budgets. The analytics pipeline must ingest high-volume events in near real time, deduplicate them safely, and store them efficiently for query and retention.

ClickHouse is a perfect fit for this workload: columnar, append-optimized, highly compressible, and capable of handling millions of inserts per second when paired with Kafka.

6.1 Requirements

Real-time analytics for a shortener service come with several operational expectations.

6.1.1 Near-Real-Time Visibility

Business stakeholders often want click metrics aggregated within seconds—“How many clicks came from France in the last minute?”—so ingestion delay must stay under 5–10 seconds even under bursty traffic.

6.1.2 Deduplication and Late Arrivals

Duplicate click events can occur due to retries or client reconnections. Late arrivals (events reaching the system minutes later) must still be processed accurately. Every event should carry a unique ID (for example, the Snowflake ID from the redirect log) so that duplicates can be detected and counts stay effectively exactly-once.

6.1.3 Geo and Device Breakdown

Every click should include derived attributes: country, city, device type, OS, referrer, and campaign ID. These are lightweight enrichments that happen asynchronously but are essential for analytics.

6.1.4 Graceful Degradation

When the analytics pipeline is unavailable, redirects should continue unhindered. Events can be queued in Kafka for hours if needed, and ingestion can catch up later. This principle—redirects first, analytics eventually—keeps the hot path predictable.
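One way to keep the redirect path independent of analytics is a bounded, non-blocking buffer between the two. The sketch below is an in-process illustration only (the class name and sizes are assumptions); in the architecture described here Kafka plays the durable role, and this buffer merely protects the request thread while the producer is slow or down.

```python
import queue


class ClickEventBuffer:
    """Bounded buffer: recording never blocks; overflow is counted and dropped."""

    def __init__(self, max_events: int = 10_000):
        self._queue = queue.Queue(maxsize=max_events)
        self.dropped = 0

    def record(self, event: dict) -> bool:
        try:
            self._queue.put_nowait(event)  # never wait on the hot path
            return True
        except queue.Full:
            self.dropped += 1              # surface this as a metric
            return False

    def drain(self, max_batch: int = 500) -> list:
        # Called by a background publisher that ships batches to Kafka.
        batch = []
        while len(batch) < max_batch:
            try:
                batch.append(self._queue.get_nowait())
            except queue.Empty:
                break
        return batch


buffer = ClickEventBuffer(max_events=2)
results = [buffer.record({"click_id": i}) for i in range(3)]
batch = buffer.drain()
```

With a capacity of two, the third record call returns False and increments dropped, while the redirect itself would still have been served.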

6.2 Ingestion Patterns

The canonical ClickHouse ingestion pipeline uses Kafka as the message backbone.

6.2.1 Canonical Topology

The recommended pattern is:

Redirect Service → Kafka Topic → ClickHouse Kafka Engine → Materialized View → MergeTree

Kafka acts as the durable buffer. ClickHouse’s Kafka engine consumes messages in batches and feeds them into a Materialized View, which writes to a standard MergeTree table for query performance.

Example setup:

CREATE TABLE kafka_clicks

(

short_code String,

user_agent String,

ip String,

referrer String,

timestamp DateTime64(3),

click_id UInt64

)

ENGINE = Kafka

SETTINGS kafka_broker_list = 'kafka01:9092',

kafka_topic_list = 'click-events',

kafka_group_name = 'clickhouse-ingest',

kafka_format = 'JSONEachRow';

Then attach a materialized view:

CREATE MATERIALIZED VIEW mv_clicks TO clicks_raw AS

SELECT *

FROM kafka_clicks;

This pattern offers built-in offset management and fault tolerance.

6.2.2 Advantages Over Alternatives

- Direct HTTP inserts: simpler, but risky under bursty load because clients can overwhelm ClickHouse’s insert pool.

- Batch loads (CSV, Parquet): efficient, but they add latency, so real-time dashboards lag.

- Kafka engine: the sweet spot. It absorbs bursts, provides durability, and preserves ordering within partitions.

6.2.3 Async Inserts for Burst Absorption

In modern ClickHouse (v24+), you can also use async inserts from API services directly:

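INSERT INTO clicks_raw

SETTINGS async_insert=1, wait_for_async_insert=0

FORMAT JSONEachRow

In an INSERT statement the SETTINGS clause must come before FORMAT. The client gets a fast acknowledgment while ingestion completes in background threads, which is ideal for smoothing transient bursts. With wait_for_async_insert=0 that acknowledgment arrives before the data is flushed, so a server failure can lose the buffered rows.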

6.3 Table Engines and Deduplication

Deduplication ensures that retries or duplicates from Kafka don’t inflate counts.

6.3.1 ReplacingMergeTree

For immutable events with potential duplicates:

CREATE TABLE clicks_raw

(

click_id UInt64,

short_code String,

timestamp DateTime64(3),

ip String,

referrer String,

device String

)

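ENGINE = ReplacingMergeTree

PARTITION BY toDate(timestamp)

ORDER BY (short_code, timestamp, click_id);

ReplacingMergeTree collapses rows that share the same sorting key, so click_id has to be part of ORDER BY; otherwise two distinct clicks on one link in the same millisecond would be merged into one. Duplicates are removed during background merges, which run at unspecified times.

You can query without FINAL for speed and apply it only when strict deduplication is required.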
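A toy model of a background merge shows how the sorting key drives deduplication. This is not ClickHouse code; the function and its arguments are illustrative, and rows are plain dictionaries.

```python
def merge_replacing(rows, sorting_key, version=None):
    """Collapse rows with an identical sorting key, keeping the last (or highest-version) one."""
    merged = {}
    for row in rows:
        key = tuple(row[col] for col in sorting_key)
        kept = merged.get(key)
        if kept is None or version is None or row[version] >= kept[version]:
            merged[key] = row
    return list(merged.values())


rows = [
    {"short_code": "abc123", "timestamp": 1000, "click_id": 1},
    {"short_code": "abc123", "timestamp": 1000, "click_id": 2},  # distinct click, same ms
    {"short_code": "abc123", "timestamp": 1000, "click_id": 2},  # replayed duplicate
]

without_id = merge_replacing(rows, ("short_code", "timestamp"))
with_id = merge_replacing(rows, ("short_code", "timestamp", "click_id"))
```

With only (short_code, timestamp) in the key, the two genuine clicks collapse into one row; adding click_id keeps both and removes only the replayed duplicate.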

6.3.2 CollapsingMergeTree

For updatable events (e.g., session start/stop pairs), CollapsingMergeTree can fold up and down records, but for URL shorteners, events are typically append-only, so ReplacingMergeTree suffices.

6.3.3 Idempotency with Snowflake IDs

Every redirect generates a globally unique Snowflake ID. That same ID becomes the deduplication token across Kafka, ClickHouse, and downstream analytics.

In C#:

var clickEvent = new

{

ClickId = idGenerator.CreateId(),

ShortCode = code,

Timestamp = DateTime.UtcNow,

Ip = httpContext.Connection.RemoteIpAddress?.ToString(),

Referrer = httpContext.Request.Headers.Referer.ToString(),

UserAgent = httpContext.Request.Headers.UserAgent.ToString()

};

await kafkaProducer.ProduceAsync("click-events", clickEvent);

Because the ID is assigned once, before the event is published, a retried publish of the same event carries an identical dedup key.
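The retry behaviour is easy to get wrong if the ID is generated inside the retry loop. The sketch below builds the event once and retries only the send; the counter stands in for a Snowflake generator, and the function names and the flaky transport are hypothetical.

```python
import itertools

_id_source = itertools.count(1)  # stand-in for a Snowflake ID generator


def build_click_event(short_code: str) -> dict:
    # The ID is assigned exactly once, when the event is created.
    return {"click_id": next(_id_source), "short_code": short_code}


def publish_with_retry(send, event: dict, max_attempts: int = 3) -> int:
    """Retry the send, never the event construction. Returns the attempt that succeeded."""
    for attempt in range(1, max_attempts + 1):
        try:
            send(event)
            return attempt
        except ConnectionError:
            if attempt == max_attempts:
                raise


delivered = []


def flaky_send(event: dict) -> None:
    # Simulates a lost acknowledgment: the broker stores the message, the client sees an error.
    delivered.append(dict(event))
    if len(delivered) == 1:
        raise ConnectionError("ack lost")


attempts = publish_with_retry(flaky_send, build_click_event("abc123"))
```

The broker ends up with two copies of the message, but both carry the same click_id, so downstream deduplication can fold them into one click.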

6.4 Schema Design

Schema design in ClickHouse must balance ingestion speed, compression, and query shape.

6.4.1 Raw Events Table

The base table is append-only and optimized for inserts:

CREATE TABLE clicks_raw

(

short_code String,

click_id UInt64,

timestamp DateTime64(3),

ip String,

device LowCardinality(String),

referrer LowCardinality(String),

country FixedString(2),

city String

)

ENGINE = MergeTree

PARTITION BY toYYYYMMDD(timestamp)

ORDER BY (short_code, timestamp);

Use LowCardinality for string fields with limited unique values (e.g., device, referrer domain). This improves compression and speeds up aggregation.

6.4.2 Rollup Tables

To reduce query latency, create rollups for hourly and daily aggregations:

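CREATE MATERIALIZED VIEW mv_clicks_hourly

ENGINE = AggregatingMergeTree

PARTITION BY toYYYYMMDD(hour)

ORDER BY (short_code, hour)

AS

SELECT

short_code,

toStartOfHour(timestamp) AS hour,

countState() AS clicks,

uniqExactState(ip) AS unique_visitors

FROM clicks_raw

GROUP BY short_code, hour;

A materialized view aggregates each inserted block separately, and unique counts from different blocks cannot simply be summed, so a SummingMergeTree would overcount visitors. Storing aggregate states in an AggregatingMergeTree avoids this; read them back with countMerge(clicks) and uniqExactMerge(unique_visitors).

Queries like “top 10 links this hour” or “unique clicks today” hit these rollup tables instead of scanning billions of raw events.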

6.4.3 Partition and Key Strategy

Partition by day for manageable data files (~50–200M rows/day).

Order by (short_code, timestamp) for efficient range scans per link.

This ordering aligns perfectly with Snowflake’s time-sorted IDs.

6.5 TTL and Storage Policies

ClickHouse handles large retention windows efficiently through TTL rules.

6.5.1 Hot/Warm/Cold Storage

Typical retention policy:

- Hot (NVMe): last 90 days

- Warm (HDD or cloud blob): 3–12 months

- Cold (archive): beyond 12 months, optional export to Parquet/S3

Example configuration:

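ALTER TABLE clicks_raw

MODIFY TTL timestamp + INTERVAL 90 DAY TO VOLUME 'warm',

timestamp + INTERVAL 12 MONTH TO VOLUME 'cold',

timestamp + INTERVAL 15 MONTH DELETE;

New parts land on the hot volume first. Each TTL clause names the destination for data that has reached that age, and the volume names must exist in the table’s storage policy.

6.5.2 Policy Benefits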

- Automated lifecycle management

- Cost-efficient storage scaling

- Queries automatically route to relevant volumes

With large-scale datasets, this reduces operational burden—no manual partition pruning or export scripts.
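The tiering rule from the retention list can be stated as a small function, which is handy for capacity estimates or for checking where a given partition should live. The function name is illustrative, and 12 months is approximated as 365 days.

```python
from datetime import datetime, timedelta, timezone


def storage_tier(event_time: datetime, now: datetime) -> str:
    """Map an event's age to the hot/warm/cold tiers described above."""
    age = now - event_time
    if age <= timedelta(days=90):
        return "hot"
    if age <= timedelta(days=365):
        return "warm"
    return "cold"


now = datetime(2025, 6, 1, tzinfo=timezone.utc)
tiers = [
    storage_tier(now - timedelta(days=10), now),
    storage_tier(now - timedelta(days=200), now),
    storage_tier(now - timedelta(days=400), now),
]
```

Here tiers evaluates to hot, warm, and cold respectively.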

6.6 IP Geolocation and Enrichment

Each click event can be enriched with geographic and device data directly within ClickHouse.

6.6.1 IP Trie Dictionaries

Use an external dictionary backed by MaxMind GeoLite2 data:

CREATE DICTIONARY geoip

(

network String,

country_code FixedString(2),

city String

)

PRIMARY KEY network

SOURCE(FILE(PATH '/opt/clickhouse/dictionaries/GeoLite2-City.csv' FORMAT 'CSVWithNames'))

LAYOUT(IP_TRIE())

LIFETIME(3600);

Enrich on insert:

INSERT INTO clicks_raw

SELECT

short_code,

click_id,

timestamp,

ip,

device,

referrer,

dictGetString('geoip', 'country_code', toIPv6(ip)),

dictGetString('geoip', 'city', toIPv6(ip))

FROM kafka_clicks;

This enables country-level rollups with no external service calls.

6.6.2 Handling IPv4/IPv6 Uniformly

Always normalize IPs to IPv6 internally:

SELECT toIPv6('192.168.1.1'); -- ::ffff:192.168.1.1

This avoids type mismatches and allows uniform dictionary lookups.
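The same normalization can be applied in the producing service so that every event already carries a uniform address type. The sketch below uses Python's standard ipaddress module; the helper name is an assumption.

```python
import ipaddress


def to_ipv6(ip: str) -> ipaddress.IPv6Address:
    """Return the address as IPv6, mapping IPv4 into the ::ffff:0:0/96 range."""
    addr = ipaddress.ip_address(ip)
    if isinstance(addr, ipaddress.IPv4Address):
        return ipaddress.IPv6Address(f"::ffff:{addr}")
    return addr


mapped = to_ipv6("192.168.1.1")
native = to_ipv6("2001:db8::1")
```

The mapped address still exposes the original IPv4 value through its ipv4_mapped attribute, so nothing is lost by storing a single type.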

6.7 Query Patterns and Dashboards

ClickHouse shines when queries are aligned with its table structure.

6.7.1 Real-Time Counters

Example: real-time click count per short code:

SELECT

short_code,

count() AS clicks,

uniqExact(ip) AS unique_visitors

FROM clicks_raw

WHERE timestamp >= now() - INTERVAL 5 MINUTE

GROUP BY short_code

ORDER BY clicks DESC

LIMIT 20;

This query scans only recent partitions, enabling sub-second results.

6.7.2 Cohort Funnels

For marketing analytics, cohort funnels (first vs repeat clicks) can be built using materialized views or window functions.

SELECT short_code,

countIf(is_first_click = 1) AS new_visitors,

countIf(is_first_click = 0) AS returning

FROM (

SELECT short_code, ip,

min(timestamp) OVER (PARTITION BY short_code, ip) = timestamp AS is_first_click

FROM clicks_raw

)

GROUP BY short_code;

6.7.3 Top Referrers and Devices

SELECT

referrer,

count() AS clicks

FROM clicks_raw

WHERE timestamp >= today()

GROUP BY referrer

ORDER BY clicks DESC

LIMIT 10;

6.7.4 Grafana and Visualization

Grafana’s ClickHouse plugin integrates natively, allowing dashboards like:

- Top links per region

- Hourly unique visitors

- Clicks by device category

Configure asynchronous inserts in the ClickHouse cluster for smooth ingestion during queries.

6.8 Backpressure and Durability

High-throughput streaming systems must defend against overload and data loss.

6.8.1 Kafka Consumer Groups

ClickHouse’s Kafka engine uses consumer groups to balance partitions across replicas. You can scale horizontally by adding replicas:

CREATE TABLE kafka_clicks ON CLUSTER shortener_cluster ...

SETTINGS kafka_group_name = 'clickhouse-ingest-group';

Each replica will process distinct Kafka partitions, ensuring parallelism without duplication.

6.8.2 Dead Letter Queues (DLQ)

Malformed or oversized messages can break ingestion. Configure a secondary Kafka topic (click-events-dlq) for failures.

A background processor can parse, repair, or log those events for inspection.

6.8.3 Exactly-Once Semantics

ClickHouse achieves “exactly-once” at the analytical level by using unique click_id dedup keys.

Even if Kafka replays messages, duplicates merge safely.

6.8.4 Handling Backpressure

If ingestion lags (e.g., due to merge backlog):

- Increase kafka_max_block_size for larger batch efficiency.

- Add ingestion nodes to the cluster.

- Monitor KafkaConsumerLag metrics to ensure catch-up time stays under the defined SLA (e.g., < 60s).
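Lag and catch-up time follow from simple arithmetic on offsets and rates. The sketch below assumes per-partition end offsets and committed offsets have already been fetched from Kafka; the function names and the message-per-second inputs are illustrative.

```python
def total_lag(end_offsets: dict, committed_offsets: dict) -> int:
    """Sum of (latest offset - committed offset) across partitions."""
    return sum(
        max(end - committed_offsets.get(partition, 0), 0)
        for partition, end in end_offsets.items()
    )


def catch_up_seconds(lag: int, consume_rate: float, produce_rate: float) -> float:
    """Time to drain the backlog given messages/sec consumed and produced."""
    if lag == 0:
        return 0.0
    net_rate = consume_rate - produce_rate
    if net_rate <= 0:
        return float("inf")  # the consumer is not gaining on the producer
    return lag / net_rate


lag = total_lag({0: 1000, 1: 2000}, {0: 900, 1: 1500})
eta = catch_up_seconds(lag, consume_rate=50, produce_rate=30)
within_sla = eta < 60
```

With a backlog of 600 messages and a net drain rate of 20 per second, catch-up takes 30 seconds, inside the 60-second example SLA.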

7 Rate Limiting at the Edge and Service Layer

A robust shortener must withstand abuse—automated bots probing links, API spamming, and high-volume scraping. Rate limiting, both at the edge and service levels, is the key to protecting latency and data integrity without blocking legitimate users.

7.1 Threat Model

The most common abuse vectors are:

- Bot floods: massive redirect traffic from compromised devices or crawlers.

- Credential stuffing: repeated API calls using stolen API keys.

- Domain abuse: malicious users creating thousands of short links rapidly.

Effective rate limiting differentiates legitimate bursts from malicious floods, ideally responding with clear backoff signals instead of blanket blocking.

7.2 Sliding-Window Counters in Redis (Recommended)

7.2.1 Data Model

Use Redis as a distributed rate-limiting backend. Each client (IP, API key, or domain) maintains a sliding time window of request timestamps.

Key structure:

rate:{scope}:{id} → [timestamps...]

Each request appends a timestamp and trims old entries.

7.2.2 Lua Script for Atomicity

Redis ensures atomic evaluation of the sliding window logic:

local key = KEYS[1]

local now = tonumber(ARGV[1])

local window = tonumber(ARGV[2])

local limit = tonumber(ARGV[3])

redis.call('ZREMRANGEBYSCORE', key, 0, now - window)

local count = redis.call('ZCARD', key)

if count >= limit then

return 0

end

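-- The member must be unique per request; a bare timestamp would collapse requests that share a millisecond

redis.call('ZADD', key, now, now .. ':' .. count)

-- now and window are in milliseconds, so use PEXPIRE rather than EXPIRE

redis.call('PEXPIRE', key, window)

return 1

Invoke via C#: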

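var now = DateTimeOffset.UtcNow.ToUnixTimeMilliseconds();

// redis is assumed to be a StackExchange.Redis IDatabase; arguments are now (ms), window (ms), limit

var allowed = (int)await redis.ScriptEvaluateAsync(luaScript, new RedisKey[] { $"rate:ip:{clientIp}" }, new RedisValue[] { now, 60000, 100 });

if (allowed == 0)

return StatusCode(429);

This enforces, for example, 100 requests per minute per IP.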

7.2.3 Eviction and TTL Strategy

Use automatic expiry equal to the window size (e.g., 60 seconds). Redis handles cleanup; you never need to delete keys manually.

7.2.4 Multiple Dimensions

Implement different buckets:

- per-IP

- per-API key

- per-domain (for link creation)

Each bucket has its own threshold and window, combined for final decision.
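Combining dimensions means a request must pass every bucket, and it should only be counted if it is admitted. The sketch below models that in memory with the same trim-then-count logic as the sliding window; in production the state would live in Redis, and the class and function names here are assumptions.

```python
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """In-memory sliding-window log for one dimension (per-IP, per-key, ...)."""

    def __init__(self, limit: int, window_ms: int):
        self.limit = limit
        self.window_ms = window_ms
        self._hits = defaultdict(deque)

    def would_allow(self, key: str, now_ms: int) -> bool:
        hits = self._hits[key]
        # Trim entries that have fallen out of the window.
        while hits and hits[0] <= now_ms - self.window_ms:
            hits.popleft()
        return len(hits) < self.limit

    def record(self, key: str, now_ms: int) -> None:
        self._hits[key].append(now_ms)


def allow_request(checks, now_ms: int) -> bool:
    """checks is a list of (limiter, key) pairs; every bucket must have room."""
    if all(limiter.would_allow(key, now_ms) for limiter, key in checks):
        for limiter, key in checks:
            limiter.record(key, now_ms)
        return True
    return False  # rejected requests do not consume quota in any bucket


per_ip = SlidingWindowLimiter(limit=2, window_ms=1000)
per_key = SlidingWindowLimiter(limit=3, window_ms=1000)

decisions = [
    allow_request([(per_ip, "ip-a"), (per_key, "key-1")], 0),
    allow_request([(per_ip, "ip-a"), (per_key, "key-1")], 1),
    allow_request([(per_ip, "ip-a"), (per_key, "key-1")], 2),  # per-IP limit hit
    allow_request([(per_ip, "ip-b"), (per_key, "key-1")], 3),
    allow_request([(per_ip, "ip-c"), (per_key, "key-1")], 4),  # per-key limit hit
]
```

Checking all buckets before recording keeps a request that is blocked on one dimension from eating into the quota of another.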

7.3 ASP.NET Core Implementation Recipe

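ASP.NET Core 7 introduced a built-in rate-limiting middleware (Microsoft.AspNetCore.RateLimiting), which carries over to ASP.NET Core 8. Its limiters keep state in process memory, so distributed deployments need a Redis-backed store alongside it.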

7.3.1 Middleware Setup

builder.Services.AddRateLimiter(options =>

{

options.GlobalLimiter = PartitionedRateLimiter.Create<HttpContext, string>(context =>

{

var ip = context.Connection.RemoteIpAddress?.ToString() ?? "unknown";

return RateLimitPartition.GetSlidingWindowLimiter(ip, _ =>

new SlidingWindowRateLimiterOptions

{

PermitLimit = 100,

Window = TimeSpan.FromMinutes(1),

SegmentsPerWindow = 3

});

});

});

7.3.2 Redis-Backed Store

Extend PartitionedRateLimiter with Redis integration for distributed deployments.

You can wrap the Lua logic shown earlier into a reusable RedisRateLimitStore.

7.3.3 Retry-After and Backoff

Respond with informative headers for client-side throttling:

if (!allowed)

{

Response.Headers["Retry-After"] = "10";

return StatusCode(429, "Rate limit exceeded");

}

Clients then apply exponential backoff to avoid hammering the service.
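On the client side, the delay calculation might look like the sketch below. It honours a numeric Retry-After when present and otherwise falls back to capped exponential backoff; the random jitter is an addition not described above, and the function name, base, and cap are assumptions.

```python
import random


def backoff_delay(attempt: int, retry_after=None, base: float = 0.5,
                  cap: float = 30.0, rng=random.random) -> float:
    """Seconds to wait before retry number `attempt` (0-based)."""
    if retry_after is not None:
        try:
            return max(float(retry_after), 0.0)  # server-provided hint wins
        except ValueError:
            pass  # the HTTP-date form of Retry-After is not handled in this sketch
    ceiling = min(cap, base * (2 ** attempt))
    return rng() * ceiling  # spread retries over [0, ceiling) to avoid synchronized bursts


server_hint = backoff_delay(0, retry_after="10")
third_retry_max = backoff_delay(3, rng=lambda: 1.0)
capped = backoff_delay(10, rng=lambda: 1.0)
```

Passing rng as a parameter keeps the function deterministic under test while using real randomness in production.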

7.3.4 Testing

Use tools like wrk or k6 to simulate high-traffic scenarios and validate that limits trigger predictably while maintaining low latency for allowed users.

7.4 Coordinating with Front Door/CDN

Edge throttling and application throttling must complement, not conflict.

7.4.1 Edge-Level Protection

Configure Azure Front Door’s WAF rules:

- Rate-limit by IP at 100 requests per 10 seconds.

- Block known bot agents.

- Allow known search crawler IP ranges.

This protects your origin servers from floods before they reach your API.

7.4.2 Correlation and Observability

Propagate request identifiers between Front Door and your backend:

X-Azure-Ref

Log this in Application Insights to trace which requests were throttled at the edge vs at the service.

7.4.3 Coordinated Messaging

Ensure consistent user feedback. If Front Door returns 429, your backend shouldn’t also respond with generic 500s. Use harmonized retry-after messaging across both layers.

8 Observability, Validation, and Ops Readiness

Even the best-engineered system is only as reliable as its visibility and operational discipline. Observability, continuous validation, and defined runbooks turn a shortener from “fast” into “production-grade.”

8.1 End-to-End Tracing and Metrics

OpenTelemetry provides consistent, low-overhead tracing across all components.

8.1.1 Setup in ASP.NET Core

builder.Services.AddOpenTelemetry()

.WithTracing(b =>

{

b.AddAspNetCoreInstrumentation();

b.AddHttpClientInstrumentation();

b.AddRedisInstrumentation();

b.AddSource("UrlShortener");

b.AddAzureMonitorTraceExporter();

})

.WithMetrics(b =>

{

b.AddMeter("UrlShortener");

b.AddRuntimeInstrumentation();

b.AddAspNetCoreInstrumentation();

b.AddAzureMonitorMetricExporter();

});

Each redirect traces through:

- ID generation

- Cache lookup (L1/L2)

- Database fallback

- Kafka publish (for analytics)

8.1.2 Sampling and Aggregation

Use head-based sampling (e.g., 1%) for high-volume requests and full sampling for admin APIs. Aggregate latency histograms and error counts in Azure Monitor.
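Head-based sampling makes the keep-or-drop decision once, at the start of a trace, from the trace ID alone. The sketch below shows a deterministic ratio check, similar in spirit to ratio-based samplers but not the OpenTelemetry SDK's implementation; the "/admin" path prefix and the function names are assumptions.

```python
def sample_rate_for(path: str) -> float:
    # Assumed routing convention: admin APIs live under /admin and are always traced.
    return 1.0 if path.startswith("/admin") else 0.01


def should_sample(trace_id: int, rate: float) -> bool:
    """Deterministic decision: the same trace ID always gets the same answer."""
    if rate >= 1.0:
        return True
    threshold = int(rate * (1 << 64))
    return (trace_id & ((1 << 64) - 1)) < threshold  # compare the low 64 bits


redirect_sampled = should_sample(0x1234, sample_rate_for("/abc123"))
admin_sampled = should_sample((1 << 64) - 1, sample_rate_for("/admin/links"))
```

Because the decision depends only on the trace ID, every service that sees the same trace reaches the same verdict without coordination.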

8.2 Synthetic Testing and Load Tests

8.2.1 Geo-Distributed Probes

Deploy probes in multiple Azure regions to hit random short codes and verify redirect health and latency. Store metrics in Application Insights with regional tags.

8.2.2 Load Tests

Use k6 to simulate realistic redirect traffic:

k6 run --vus 1000 --duration 1m redirect_test.js

Script example:

import http from "k6/http";

export default function () {

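// redirects: 0 stops k6 from following the 301/302, so only the shortener itself is measured

http.get("https://sho.rt/abc123", { redirects: 0 });

}

Run failure drills (simulate a Redis failover or an origin outage) to confirm graceful degradation.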

8.3 Capacity Planning and Cost Guards

8.3.1 Redis Shards

Monitor cache hit ratio and scale shards when hit ratio drops below 90%. Use RedisTimeSeries to track key-level metrics.
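The hit ratio can be derived from the keyspace_hits and keyspace_misses counters that Redis reports in INFO stats. Those counters are cumulative, so the sketch below compares two snapshots to get the ratio for a recent interval; the function names and snapshot format are assumptions.

```python
def interval_hit_ratio(previous: dict, current: dict) -> float:
    """Hit ratio between two INFO stats snapshots (counters are cumulative)."""
    hits = int(current["keyspace_hits"]) - int(previous["keyspace_hits"])
    misses = int(current["keyspace_misses"]) - int(previous["keyspace_misses"])
    total = hits + misses
    return hits / total if total > 0 else 1.0  # no traffic: nothing to alarm on


def should_scale_out(previous: dict, current: dict, threshold: float = 0.90) -> bool:
    return interval_hit_ratio(previous, current) < threshold


before = {"keyspace_hits": 1000, "keyspace_misses": 100}
after = {"keyspace_hits": 1850, "keyspace_misses": 250}
ratio = interval_hit_ratio(before, after)
scale = should_scale_out(before, after)
```

In this interval 850 of 1,000 lookups were hits, so the ratio is 0.85 and the 90% threshold triggers a scale-out review.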

8.3.2 Front Door and Egress Costs

Egress charges grow quickly with viral content. Cache effectively and compress redirects (301s) to reduce cost. Set egress alerts in Azure Cost Management.

8.3.3 ClickHouse Growth

Track storage by partition and adjust TTLs before outgrowing hot volumes. Automated partition pruning ensures long-term cost stability.

8.4 Security and Trust-and-Safety Hooks

8.4.1 URL Scanning

Before activating new short links, scan targets against Google Web Risk or other APIs:

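import requests

def scan_url(url, api_key):

    # uris:search is a GET endpoint; the API key is passed as a query parameter

    response = requests.get(

        "https://webrisk.googleapis.com/v1/uris:search",

        params={"threatTypes": ["MALWARE", "SOCIAL_ENGINEERING"], "uri": url, "key": api_key},

        timeout=5,

    )

    response.raise_for_status()

    # An empty JSON object means no match; a "threat" field means the URL is listed

    return "threat" not in response.json()

The function returns True only when the URL is clean. Block or flag links before publishing.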

8.4.2 Signed Admin Actions

Require signed JWTs or Azure AD credentials for link edits, deletions, and purges. All admin operations should be auditable.

8.4.3 Key Rotation

Rotate service and storage keys regularly, especially Redis and Kafka credentials, using Azure Key Vault automation.

8.5 Runbooks and Incident Response

8.5.1 Cache Purge

Document clear procedures for cache purging:

az network front-door purge-endpoint --content-paths "/abc123"

8.5.2 Hot-Key Mitigation

If a key floods Redis:

- Apply temporary shorter TTL

- Use Bloom filter gating

- Push the key to CDN-only cache for cooling

8.5.3 Redirect Rollbacks

Maintain versioned entries; rollback simply updates pointer to previous URL version.

8.5.4 Analytics Shadow Pipelines

Run a secondary Kafka→ClickHouse ingestion path shadowing production to verify schema or config changes without disrupting main flow.

A disciplined observability and ops framework turns a high-performance shortener into a resilient, auditable, and trustworthy global service. With these layers—real-time analytics, rate limiting, and full-spectrum observability—you can confidently operate at internet scale while keeping latency and cost under control.