1 Introduction: The Billion-Dollar “Ping”

1.1 The Universal Trigger

A text message notification hits differently than almost any other digital signal. The short vibration or sound cuts through whatever you’re doing and demands attention. Most people don’t consciously decide to check a text. They just do. That reaction is fast, emotional, and largely automatic.

This didn’t happen by accident. SMS was built for quick, direct communication. Over time, people learned that texts usually mean something matters right now. Friends coordinating plans, banks sending one-time codes, delivery updates, appointment reminders. Texts became the channel for things that shouldn’t wait.

Email lives in a different mental category. Inboxes are crowded with newsletters, promotions, and automated updates. People skim email when they have time. They expect delay. With texts, the expectation flips. When a message arrives, your brain assumes there’s a reason it showed up at this exact moment.

That expectation shows up clearly in behavior. Text messages are opened at dramatically higher rates than email, often within seconds of arrival. This isn’t about better technology. It’s about habit and conditioning. The sound of a text creates urgency before you’ve read a single word.

Scammers target that brief window. They don’t need to persuade you with a detailed argument. They just need to trigger action before you slow down. That’s why scam texts are short, direct, and framed as urgent. And it’s why they often arrive when people are distracted, busy, or tired.

The “ping” isn’t just a notification. It’s a psychological switch. Once you understand how that switch works, it becomes easier to see why SMS scams are so effective.

1.2 The Scope of the Problem (2025 Data)

By 2025, text-based scams are no longer a niche problem. They are one of the most common and costly forms of consumer fraud worldwide. Regulators, banks, and security teams all see the same pattern: criminals are shifting away from email and focusing on mobile messaging.

The reasons are practical. SMS messages are opened more often, trusted more quickly, and verified less rigorously. They also reach almost everyone. Teens, older adults, and people who rarely check email all read texts. That makes SMS an efficient channel for large-scale fraud.

The financial impact is enormous. When you add up direct theft, account takeovers, identity fraud, and recovery costs, global losses from text-based scams are estimated to reach into the tens of billions of dollars each year. In many countries, smishing (SMS phishing) now ranks among the top categories of reported fraud by volume, and the numbers continue to rise.

What’s changed isn’t just how many scams exist, but what they look like. Early online scams were easy to dismiss. They relied on far-fetched stories and obvious red flags. The modern scam text does the opposite. It blends into everyday life.

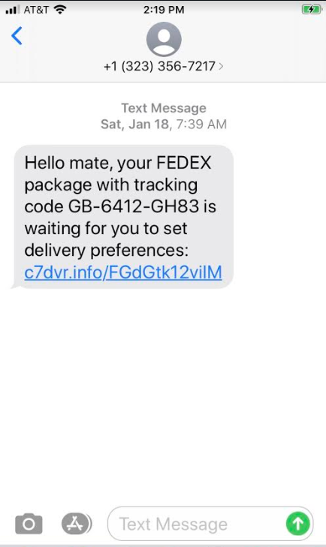

Common examples include:

- Notices about unpaid tolls

- Missed package delivery alerts

- Bank or card security warnings

- Subscription renewal messages

- Government or utility notifications

These messages don’t ask you to believe something extraordinary. They ask you to confirm something routine. Most people have driven on a toll road, ordered a package, or logged into a bank account recently. The scam only needs to feel plausible, not universally true.

Scale is the final piece. Scam texts are sent in massive batches, often millions at a time. Automation makes this cheap. Even if only a tiny fraction of recipients respond, the operation is profitable. A low success rate doesn’t mean failure when volume is high enough.

This is no longer about a few bad actors sending messages from a laptop. It’s an organized, industrial system built around speed, scale, and human psychology.

1.3 The “Anatomy” Concept

To really understand scam texts, it helps to stop thinking of them as random messages and start thinking of them as systems. A successful scam isn’t accidental. It’s assembled from parts, each designed to do a specific job.

Every scam text, no matter how simple, follows a similar structure:

- A delivery method that gets the message onto your phone

- A sender identity that looks familiar or authoritative

- A short message that creates urgency, fear, curiosity, or opportunity

- A call to action, usually a link or reply

- A trap that captures money, credentials, or access

This article takes the same approach an engineer would take when analyzing a system failure. We take one scam text and break it apart. We look at how it was delivered, why it caught attention, how it hid its intent, and what happened after the click or reply.

The goal isn’t just to spot individual red flags. It’s to understand the structure underneath them. Once you see that structure, variations become easier to recognize. The wording may change. The brand name may change. The technology will continue to evolve.

But the anatomy stays the same. And once you understand that anatomy, scam texts lose much of their power.

2 The Delivery Mechanism: How They Reach You

Before a scam text can scare you, confuse you, or trick you into clicking a link, it has to land on your phone in the first place. That part is easy to overlook because SMS feels simple and familiar. But behind every text message is a global delivery system that was never designed with modern fraud in mind.

Scammers take advantage of that gap. They don’t break the system; they use it exactly as it was built. Once you understand how messages move from sender to phone, it becomes clear why blocking a single number rarely works and why the same scams keep coming back with slight variations.

2.1 The “Sender ID” Illusion (Spoofing)

2.1.1 Built on Trust, Not Verification

When SMS was created, mobile networks were smaller and more controlled. The assumption was simple: if a message entered the network, it probably came from someone legitimate. There was little concern about large-scale impersonation.

Because of that, the sender information in an SMS was treated more like a label than proof. The network would display whatever sender name or number it was given. There was no built-in system to confirm that the sender was who they claimed to be.

This design choice made SMS flexible and easy to use. Businesses could send branded alerts, and carriers could exchange messages across countries without complex checks. But it also created a long-lasting weakness. The sender ID could be faked, and the system had no native way to stop it.

That’s why a text can appear to come from your bank, a delivery service, or even the same thread as previous legitimate messages. The phone trusts the label it’s given.
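A toy model makes the point concrete. The sketch below is not how any real handset or carrier stores messages; `Message` and `thread_view` are hypothetical names used only for illustration. It assumes just one thing from the text above: the phone groups messages by the sender label it is handed.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Message:
    sender_label: str  # whatever label arrived with the message; never verified here
    body: str
    truly_from: str    # ground truth, invisible to the phone in this toy model


def thread_view(inbox):
    """Group message bodies by displayed sender label, as a messaging app might."""
    threads = defaultdict(list)
    for msg in inbox:
        threads[msg.sender_label].append(msg.body)
    return dict(threads)


inbox = [
    Message("MyBank", "Your code is 493021", truly_from="bank"),
    Message("MyBank", "Unusual activity detected. Verify now.", truly_from="scammer"),
]

threads = thread_view(inbox)
print(threads["MyBank"])  # both messages land in the same thread
```

Nothing in `thread_view` ever looks at `truly_from`. In this model, as in the design described above, the label is the only identity the phone has.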

2.1.2 How VoIP Enables Sender Spoofing

Today, most large-scale texting doesn’t come directly from phones. It flows through Voice over IP (VoIP) systems and SMS gateways connected to the internet. These gateways accept messages through software interfaces and hand them off to mobile carriers.

Many of these systems allow the sender field to be set freely. A legitimate company might use this to display a name like “Bank Alert” instead of a phone number. A scammer can do the same thing with the same tools.

From your perspective, there’s no visible difference. The text arrives, the sender looks familiar, and the phone displays it without warning. Unless the carrier identifies and blocks it before delivery, the message looks completely normal.

This is also why deleting or blocking one sender often feels pointless. The scammer can simply change the sender ID and send the same message again.

2.1.3 Smishing Gateways and Grey Routes

Legitimate companies usually send texts through regulated carrier routes. These routes have rules, monitoring, and higher costs. They exist to reduce abuse and ensure reliability.

Scammers avoid those routes. Instead, they use what are often called grey routes. These are cheaper pathways through intermediary providers, sometimes operating in regions with weaker oversight or enforcement.

Grey routes reduce cost and increase volume. They also make it harder to trace where messages really came from. By the time a carrier notices a campaign and blocks it, the infrastructure behind it may already be gone or moved elsewhere.

This is why SMS fraud feels persistent. You’re not dealing with a single sender. You’re dealing with a constantly shifting delivery network.

2.2 Mass Automation vs. Spear Phishing

Not every scam text is sent the same way. Some are sprayed broadly across millions of numbers. Others are carefully targeted. Most campaigns fall somewhere in between.

2.2.1 Auto-Dialers and Number Enumeration

At the simplest level, scammers use automated systems that generate phone numbers and send messages in sequence. These systems don’t know who you are. They only know that your number fits a valid pattern.

If a message goes through or gets a response, that number is flagged as active. Active numbers are valuable because they confirm there’s a real person on the other end.

This approach is cheap and efficient. It doesn’t require stolen data or detailed planning. It relies on volume. Even if 99.9 percent of recipients ignore the message, the remaining fraction can still make the campaign profitable.

That’s why many scam texts feel generic. They aren’t meant for you specifically. They’re meant for anyone who might react.
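The volume argument is easy to check with arithmetic. The sketch below uses made-up inputs: the price per message and the average amount taken per victim are assumptions chosen for illustration, not measured data. Only the 99.9 percent ignore rate comes from the text.

```python
def campaign_profit(messages_sent, cost_per_message, response_rate, avg_take):
    """Return (victims, revenue, cost, profit) for a bulk campaign."""
    victims = messages_sent * response_rate
    revenue = victims * avg_take
    cost = messages_sent * cost_per_message
    return victims, revenue, cost, revenue - cost


# All figures below are illustrative assumptions, not real-world statistics.
victims, revenue, cost, profit = campaign_profit(
    messages_sent=1_000_000,
    cost_per_message=0.01,  # assumed bulk price per text
    response_rate=0.001,    # the 0.1 percent who do not ignore the message
    avg_take=200.0,         # assumed average loss per victim
)
print(f"victims={victims:.0f} revenue=${revenue:,.0f} "
      f"cost=${cost:,.0f} profit=${profit:,.0f}")
```

Under these assumptions, a million texts produce about a thousand victims and a six-figure profit. Change the inputs and the result moves, but the shape of the math stays the same: cost scales with pennies, revenue scales with victims.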

2.2.2 Data Breach Feeds and Targeted Campaigns

More advanced scams use stolen data. When companies are breached, phone numbers, names, and other details often end up for sale. Criminals combine these leaks into large datasets.

With this information, scammers can send texts that feel personal. Seeing your name, city, or a service you recognize lowers your guard. The message doesn’t feel random anymore.

The details don’t have to be perfect. Even partial accuracy makes the message feel legitimate. Your brain fills in the missing pieces and assumes the rest must be real.

Once someone responds to a targeted scam, they become even more valuable. Their number is now confirmed as responsive and may be resold or reused in future campaigns.

2.3 SIM Farms

2.3.1 Physical Infrastructure for Digital Fraud

Some scam operations don’t rely only on internet gateways. They use physical hardware known as SIM farms. These are racks or banks of real SIM cards, each acting like a separate mobile phone.

To a carrier, each SIM looks like a normal subscriber. To the scammer, the entire setup functions as a high-volume messaging system.

By spreading messages across hundreds or thousands of SIMs, scammers avoid triggering volume-based spam detection. Instead of one number sending thousands of texts, many numbers each send a few.

This makes the activity look more like normal human behavior.
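A simplified detector shows why this works. The sketch assumes a hypothetical per-number threshold (`LIMIT`) and made-up phone numbers; real carrier systems use far more signals than a single count.

```python
from collections import Counter


def flag_high_volume(send_log, per_sender_limit):
    """Return the senders whose message count exceeds the limit."""
    counts = Counter(send_log)
    return {sender for sender, n in counts.items() if n > per_sender_limit}


LIMIT = 100  # hypothetical per-number threshold

# One number sends 5,000 texts: it stands out immediately.
single_sender = ["+15550000001"] * 5000

# 1,000 SIMs send 5 texts each: same total volume, no single number stands out.
sim_farm = [f"+1555{i:07d}" for i in range(1000) for _ in range(5)]

print(flag_high_volume(single_sender, LIMIT))
print(len(flag_high_volume(sim_farm, LIMIT)))
```

Both logs contain the same number of messages. Only the first one trips a per-sender rule.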

2.3.2 Why SIM Farms Are Hard to Stop

Blocking one SIM card is easy. Stopping an entire farm is not. SIMs can be replaced quickly, activated under fake identities, or sourced from regions with weak verification rules.

SIM farms also blend in with legitimate traffic. Messages are sent at realistic speeds, from many different numbers, across different carriers. Automated systems struggle to separate this from normal use without causing false positives.

As long as SMS remains a global system with uneven rules and enforcement, SIM farms will continue to be an effective tool for scammers.

3 The Hook: Psychology and Content Construction

Once a scam text lands on your phone, the hard technical work is already done. The message made it through carrier filters, spoofed a sender, and showed up where you’d see it. At that point, the scam lives or dies based on one thing: whether you act.

This is where psychology takes over. Scam texts are short on purpose. It’s not because scammers can’t write more. It’s because every extra sentence gives your brain more time to slow down, question what it’s reading, or decide to ignore it. Short messages create momentum.

A good scam text is designed to be processed in seconds. It leans on mental shortcuts your brain uses when it’s busy or under pressure. Instead of explaining details, it pushes emotional buttons that move you toward clicking, replying, or calling before you’ve had time to think it through.

3.1 The “Urgency Loops”

Most scam texts follow a predictable pattern called an urgency loop. The message presents a situation, suggests consequences, and offers a single way to fix the problem. The loop only ends if you take the action they want.

There are different emotional flavors, but the structure stays the same. The message narrows your options until clicking feels like the only reasonable choice.

3.1.1 Fear-Based Scripts

Fear is the most common lever. These texts tell you something is wrong or about to go wrong. The language is intentionally vague: “Your account has been suspended,” “Unusual activity detected,” or “Immediate action required.”

Details are missing on purpose. If the message explained exactly what happened, you might stop and verify it. Instead, it creates anxiety first and answers questions later—after you’ve clicked.

Fear works because it shifts priorities. When people think their money, identity, or access is at risk, accuracy takes a back seat to speed. The message usually points to a single solution, such as a link or phone number, and frames it as urgent.

Even when no deadline is stated, the tone implies one. Words like “now,” “today,” or “before it’s too late” signal that waiting is dangerous. The goal is to keep you from checking another source or asking someone else.

3.1.2 Greed and Opportunity Scripts

Not all scams threaten loss. Some promise gain. These messages talk about refunds, credits, prizes, or accidental overpayments waiting to be claimed.

On the surface, these feel less risky. A refund text doesn’t trigger the same alarm bells as a security warning. That’s exactly why they work. The message sounds helpful, not hostile.

The pressure comes from scarcity. The opportunity is framed as temporary or conditional. You’re told to act quickly to “confirm eligibility” or avoid missing out. Even small amounts can be effective, because curiosity and optimism fill in the gaps.

The scammer doesn’t need you to be greedy. They just need you to think, “I should check that.”

3.1.3 Curiosity-Based Scripts

Curiosity-driven texts are built around ambiguity. They give you just enough information to raise a question, but not enough to answer it. Messages like “Is this you?” or “You were mentioned in a photo” are classic examples.

These texts create mental discomfort. Your brain wants closure. Clicking the link feels like the fastest way to resolve the uncertainty.

This approach works especially well on phones, where people are often multitasking. A quick tap seems harmless in the moment. By the time doubt catches up, the interaction has already moved forward.

3.2 The “Hi Mum” / “Hi Dad” Phenomenon

Some scams don’t rely on links at all. Instead, they target personal relationships. Messages that start with “Hi Mum” or “Hi Dad” are among the most emotionally effective formats in use today.

The setup is simple. The sender claims to be a child or close family member using a new phone. There’s a problem—usually a broken phone, lost wallet, or urgent bill—and they need help quickly.

This works because it bypasses rational checks. Parents are wired to respond to perceived emergencies involving their children. That reaction is fast and emotional, not analytical.

The story usually includes a reason not to call. The phone is broken. They’re in a meeting. They can only text. This removes the easiest way to verify the claim and keeps the conversation contained.

Unlike mass scam texts, these messages often turn into short back-and-forth exchanges. Each reply deepens engagement and commitment. By the time money is requested, the situation already feels real.

3.3 The Role of AI in 2025

One reason scam texts feel more convincing today is the use of AI. Older scams were easy to spot because of bad grammar, awkward phrasing, or strange tone. Those signals are fading fast.

Modern language models can generate clear, natural-sounding messages in many languages and styles. Scammers can match tone to region, age group, or context with very little effort. The result is messages that sound normal, even professional.

AI also helps scammers avoid detection. Instead of sending the same message repeatedly, they generate thousands of small variations. This makes it harder for filters to catch patterns.
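To see why small variations matter, compare two simple matching strategies. This is a rough sketch, not a description of how any real filter works: the messages are invented, and `fingerprint` and `similarity` are hypothetical helper names.

```python
import hashlib
from difflib import SequenceMatcher


def fingerprint(text):
    """Exact-match fingerprint: any change in the text changes the hash."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def similarity(a, b):
    """Rough 0..1 similarity between two messages, ignoring case."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


known_scam = "Your package is on hold. Confirm your address today: example.test/a1"
variant = "Your parcel is on hold. Please confirm your address today: example.test/b7"
unrelated = "Running late, see you at the restaurant around eight."

blocklist = {fingerprint(known_scam)}

print(fingerprint(variant) in blocklist)  # exact matching misses the variant
print(similarity(known_scam, variant) > similarity(known_scam, unrelated))
```

An exact-match blocklist treats the reworded message as brand new. Fuzzy comparison still sees the resemblance, but it costs more to run and risks flagging legitimate messages that happen to look similar.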

Personalization has improved as well. AI can combine leaked data—names, partial account numbers, locations—into messages that feel specific. Even when the details are incomplete, the human brain fills in the rest.

The key takeaway is this: good writing is no longer a sign of safety. Scam texts don’t work because they’re sloppy. They work because they’re designed to match how people think and react under pressure.

4 The Camouflage: Link Obfuscation and Redirects

Once a scam text convinces someone to take the next step, the scammer has a new problem to solve: getting the victim to a malicious site without raising alarms. At this stage, the message has done its job. Now the link has to do the rest.

Links are where most scams succeed or fail. They are the bridge between a harmless-looking text and real damage. Because of that, scammers put a lot of effort into hiding where those links actually go. The camouflage isn’t just for people. It’s also designed to slip past automated scanners, reputation systems, and security tools.

What makes modern scams dangerous is that links don’t behave the same way for everyone. The same URL can look safe to one system and malicious to another. That flexibility is intentional.

4.1 The Link Shortener Trick

Link shorteners are everyday tools. People use them to make long URLs easier to share in texts and social posts. A short link redirects you to the real destination behind the scenes. That layer of indirection is exactly what scammers want.

When a shortened link appears in a text message, you can’t tell where it leads just by looking at it. Instead of judging the destination, you end up judging the service that shortened it. If the shortener looks familiar or professional, many people assume the link must be safe.

Scammers also create their own shortening services. These behave like legitimate ones but have no oversight or abuse controls. The visible domain looks neutral and unremarkable, which removes useful context for decision-making.

Lookalike subdomains build on this idea, in the same spirit as typosquatting, which relies on misspelled names. Instead of hiding the destination completely, scammers register domains whose leading labels look trustworthy at a glance. A link like usps.track-package-24.com works because people read URLs left to right. They see “usps” and “track-package” and mentally check the box.

The important part of the domain is actually at the end. In this case, the site is owned by whoever controls track-package-24.com, not USPS. On a phone screen, where URLs may be cut off or wrapped, that distinction is easy to miss.

4.2 Homoglyphs and IDN Attacks

Some scams don’t rely on clever wording at all. They rely on how letters look. Homoglyph attacks take advantage of characters from different alphabets that appear identical on screen.

Modern domain systems allow international characters. That’s useful for global languages, but it also creates an opening for abuse. A character from one alphabet can look exactly like a familiar letter from another.

To your browser, these characters are different. To your eyes, they’re the same. A fake domain can appear identical to a real one unless you inspect it carefully, which most people don’t do—especially on a phone.

This technique works best when people rely on visual recognition rather than reading each character. Small screens, small fonts, and quick glances all favor the attacker. Even technically skilled users can miss this under time pressure.

Browsers try to reduce this risk by displaying encoded versions of suspicious domains in some cases. But the behavior isn’t consistent, and most users don’t know what they’re looking at when it happens. That uncertainty keeps homoglyph attacks effective.
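A few lines of code show the gap between what the eye sees and what the browser sees. The check below is a heuristic sketch: it guesses a character's script from the first word of its Unicode name, and the function names are hypothetical. It flags labels that mix scripts, which is only one of several homoglyph patterns.

```python
import unicodedata


def scripts_in_label(label):
    """Return the scripts used by the letters in one domain label.

    The script is approximated by the first word of the Unicode
    character name (for example "LATIN" or "CYRILLIC").
    """
    scripts = set()
    for ch in label:
        if ch.isalpha():
            scripts.add(unicodedata.name(ch, "UNKNOWN").split()[0])
    return scripts


def looks_mixed_script(domain):
    """True if any label mixes letters from more than one script."""
    return any(len(scripts_in_label(label)) > 1 for label in domain.split("."))


real = "apple.com"
fake = "\u0430pple.com"  # first letter is CYRILLIC SMALL LETTER A

print(real == fake)                                    # different strings
print(looks_mixed_script(real), looks_mixed_script(fake))
print(fake.encode("idna"))  # ASCII-compatible (Punycode) form of the name
```

A domain written entirely in one non-Latin script would pass this particular check, so treat it as a first filter rather than a complete defense.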

4.3 The Redirect Chain

The link you tap in a scam text is almost never the final stop. Instead, it starts a chain of redirects that move you through multiple servers before landing on the real trap.

Each step in the chain serves a role. One server might record basic details about your device. Another might decide whether you’re worth targeting. A final server hosts the fake login page or payment form.

This structure makes scams harder to shut down. If one server is blocked or taken offline, the others can be swapped out. The visible link doesn’t change, but what happens behind it does.

Redirects also make investigation harder. By the time someone analyzes the final page, it’s difficult to tell where the traffic came from. This breaks simple blocking strategies and slows response.

4.3.1 Geo-Fencing and Device Filtering

Advanced scams don’t show the same content to everyone who clicks. The server checks details like your IP address, country, device type, and sometimes even your operating system.

If the request looks like it’s coming from a security scanner, a desktop computer, or the wrong region, the server may show a harmless page or nothing at all. This makes the link appear clean during automated checks.

If the request matches the target profile—usually a mobile phone in a specific country—the real scam page loads. To the victim, everything looks normal and immediate.

This split behavior is deliberate. It allows scams to hide in plain sight. A link can look inactive or harmless to researchers while actively harming real users at the same time.
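The split can be modeled in a few lines. In this sketch, `cloaked_server` is a toy stand-in for the behavior described above, with invented rules and invented user-agent strings. `responses_differ` shows the defender's side: request the same link with several client profiles and compare what comes back.

```python
def cloaked_server(user_agent, country):
    """Toy model of a cloaking server. The targeting rules are invented."""
    is_mobile = "Mobile" in user_agent
    if is_mobile and country == "US":
        return "fake-login-page"
    return "blank-page"


def responses_differ(fetch, profiles):
    """Fetch with several client profiles; True if the content is not uniform."""
    seen = {fetch(user_agent, country) for user_agent, country in profiles}
    return len(seen) > 1


profiles = [
    ("ExampleScanner/1.0 (Linux x86_64)", "DE"),  # looks like a security scanner
    ("ExamplePhone Browser Mobile/1.0", "US"),    # looks like the intended target
]

print(responses_differ(cloaked_server, profiles))
```

A scanner that only ever uses the first profile would see a blank page and conclude nothing is there. Comparing profiles side by side is what exposes the difference.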

5 The Trap: The Fake Landing Page & Phishing Kits

Once someone clicks a scam link, the interaction changes completely. The scammer no longer needs to persuade or rush the victim. At this point, the only goal is extraction—getting passwords, payment details, or live access to an account as quickly and quietly as possible.

This is where many people are surprised. The page that loads is often polished, fast, and familiar. It doesn’t look like something thrown together by an amateur. That’s because, in most cases, it isn’t. Modern scams rely on professional-grade tooling built specifically to imitate real services.

5.1 Phishing-as-a-Service (PhaaS)

Phishing today looks a lot like legitimate software-as-a-service. Instead of building fake sites from scratch, scammers rent ready-made phishing kits. They don’t need to know how login systems work or how websites are built. The hard parts are already done for them.

These kits are sold through private chat groups and underground markets. Access is usually subscription-based. Pay the fee, and you get a control panel, setup instructions, hosting recommendations, and sometimes even technical support.

The person selling the kit keeps it updated. When a bank or email provider changes its login page, the kit is updated to match. The buyer’s job is simply to drive traffic—sending texts, emails, or messages that push victims toward the link.

Some kits focus on specific brands like banks or cloud email services. Others are designed for more advanced attacks that capture session data in real time. From the attacker’s side, this is plug-and-play fraud. From the victim’s side, it feels like a normal login experience.

Because many attackers use the same kits, shutting down one instance doesn’t solve the problem. New copies pop up quickly, often hosted somewhere else. The ecosystem survives because no single actor controls the whole operation.

5.2 The “Perfect” Clone

The realism of modern phishing pages comes from how they’re built. Instead of copying a website once and hosting it forever, many kits pull live content from the real service every time the page loads.

Logos, fonts, layout styles, and even interactive scripts are fetched directly from the legitimate site. Your browser renders the same visuals you’d see on the real page. That’s why everything looks correct, even down to small design details.

Behind the scenes, though, the form behaves differently. When you type your username and password, the data isn’t sent to the real company. It’s sent straight to the attacker’s server.
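One way to picture this is to look at where a page's form submits. The sketch below parses a made-up HTML snippet with Python's standard library and reports the hosts its forms post to. All domains are placeholders, and `form_hosts` is a hypothetical helper. It only inspects static `action` attributes, so a page that submits through scripts would need deeper analysis.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class FormActionCollector(HTMLParser):
    """Collect the action attribute of every <form> on a page."""

    def __init__(self):
        super().__init__()
        self.actions = []

    def handle_starttag(self, tag, attrs):
        if tag == "form":
            # A missing or empty action means "submit to the current page".
            self.actions.append(dict(attrs).get("action") or "")


def form_hosts(html, page_url):
    """Return the hostnames that forms on the page would submit to."""
    parser = FormActionCollector()
    parser.feed(html)
    return {urlparse(urljoin(page_url, a)).hostname for a in parser.actions}


page = """
<img src="https://bank.example/logo.png">
<form action="https://collector.example/post" method="post">
  <input name="user"><input name="password" type="password">
</form>
"""

hosts = form_hosts(page, "https://login.bank-example.test/")
print(hosts)
```

The logo comes from the real brand's server, but the credentials go somewhere else entirely.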

Some kits go further with dynamic branding. They look at the email address you enter and adjust the page to match. Enter a work email, and the page may suddenly display your company’s logo and branding. What started as a generic login now looks customized.

This makes the experience feel authentic. The page appears to know who you are and where you belong. Many people take that as proof of legitimacy, without realizing the personalization is automated and purely cosmetic.

5.3 Adversary-in-the-Middle (AiTM) Attacks

Two-factor authentication was once a strong barrier against phishing. Even if a password was stolen, attackers couldn’t log in without the second factor. AiTM attacks were created to get around that.

In an AiTM setup, the fake site sits between you and the real service. It acts like a relay. When you enter your credentials, they are instantly passed to the real login system. When the real site asks for a one-time code, the fake page asks you for it too.

The key is speed. The attacker doesn’t save the code for later. A bot uses it immediately to finish logging in. By the time you see a loading screen, error message, or redirect, the attacker is already inside your account.

This works against SMS codes, authenticator apps, and even push approvals. All of these methods assume you’re talking directly to the service you trust. AiTM breaks that assumption without triggering obvious warnings.

After a successful login, attackers often grab session cookies. These let them stay logged in without re-entering credentials. Even if you later change your password, the attacker may still have access until the session expires or is manually revoked.

6 The Long Con: “Pig Butchering” (Sha Zhu Pan)

Not every scam is built for speed. Some are designed to play out slowly, over weeks or even months, with no obvious pressure at the beginning. These are often called “pig butchering” scams, a term that reflects how victims are deliberately cultivated before being exploited.

These scams look very different from typical scam texts. There’s no urgent link, no immediate demand, and often no clear sign that anything is wrong. The harm comes later, after trust has been carefully built.

6.1 The Innocent Start

The first message usually feels accidental. It might say, “Are we still on for coffee?” or “Sorry, I think I have the wrong number.” There’s nothing to click and nothing to fix.

Most people respond out of courtesy. Ignoring a wrong-number text can feel rude. That reply, however small, is the moment the scam begins. It confirms the number is active and that the person on the other end is willing to engage.

From there, the conversation softens. The sender apologizes, introduces themselves, and keeps things light. The exchange feels natural because it often is. Many of these operations mix automation with real people handling conversations.

At this point, nothing is being asked for. The scammer is observing. How quickly do you reply? Are you friendly? Do you share personal details? This information shapes what happens next.

6.2 The Grooming Phase

Once basic rapport exists, the scammer suggests moving the conversation to another platform like WhatsApp or Telegram. This feels normal and convenient, but it serves important purposes.

Messaging apps offer more privacy, richer features, and fewer automated filters. They also create a sense of closeness. The conversation no longer feels like a random text. It feels like a personal chat.

Over time, the scammer shares stories about their life, routines, and interests. Photos may be exchanged. The tone becomes warmer and more familiar. The victim is encouraged to share as well.

Money doesn’t come up right away. When it does, it’s casual. The scammer might mention investing success or a side hobby in crypto or trading. There’s no pressure. The idea is planted and allowed to grow on its own.

This slow pacing is deliberate. By the time an opportunity is discussed seriously, trust has already been established. It doesn’t feel like a sales pitch. It feels like advice from someone you know.

6.3 The Fake Platform

Eventually, the victim is introduced to a trading platform or investment app. It looks polished and professional, with charts, balances, and transaction histories that resemble real financial tools.

The first investment is usually small. Almost immediately, the account shows gains. Sometimes the victim can even withdraw a small amount. This early success is crucial. It builds confidence and lowers skepticism.

As trust increases, so do the stakes. The victim is encouraged to invest more. Savings, retirement funds, and borrowed money are common sources. The platform continues to show impressive returns.

At some point, withdrawals stop working. The victim is told there are fees, taxes, or verification steps required before funds can be released. Each payment is framed as the final hurdle.

Eventually, communication fades or stops entirely. The platform disappears or locks the account. There was never real trading. The numbers were just part of a controlled simulation.

The damage goes beyond money. Victims often feel embarrassed, isolated, and reluctant to talk about what happened. That emotional impact is part of why these scams are so effective and so devastating.

7 Digital Forensics: How to Dissect a Scam Text

By now, the moving parts of a scam text should feel familiar. You’ve seen how messages get delivered, how they create urgency, how links are hidden, and what happens after a click. Digital forensics is about slowing all of that down and looking at the message with intention.

This isn’t about proving, with absolute certainty, that a text is malicious. In real life, you rarely get that luxury. The practical goal is simpler: decide whether it’s safe to engage or whether you should stop and disengage.

The techniques in this section are meant for real-world use. They work when you’re busy, distracted, or reading a message on a small screen. Some rely on habits you can build. Others are optional tools for when you want to dig a little deeper.

7.1 Pattern Recognition (Human Defense)

The most important defense is still you. Filters, warnings, and blocking help, but the final decision almost always happens in your head. Pattern recognition is about knowing which details deserve attention and which ones are meant to rush or distract you.

A critical habit is learning how to read URLs correctly. What matters is the registered domain at the end, not familiar words at the beginning. A link like amazon.secure-login.com is owned by whoever controls secure-login.com. The word “amazon” is just decoration.
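The same rule can be written as a short function. This is a best-effort sketch: a correct implementation would consult the full Public Suffix List, while the `TWO_LABEL_SUFFIXES` set here is a tiny hand-picked sample assumed for illustration. `registered_domain` is a hypothetical helper name.

```python
from urllib.parse import urlsplit

# Tiny hand-picked sample of two-label public suffixes (an assumption for this
# sketch; the real list is much longer).
TWO_LABEL_SUFFIXES = {"co.uk", "com.au", "co.nz", "co.jp"}


def registered_domain(url):
    """Best-effort registered domain of a URL or bare hostname."""
    # urlsplit only finds the host if the string has a "//" network location.
    host = urlsplit(url if "//" in url else "//" + url).hostname or ""
    labels = host.split(".")
    keep = 3 if ".".join(labels[-2:]) in TWO_LABEL_SUFFIXES else 2
    return ".".join(labels[-keep:])


for link in [
    "amazon.secure-login.com",
    "https://usps.track-package-24.com/pay",
    "www.amazon.co.uk",
]:
    print(link, "->", registered_domain(link))
```

The function reads from the right, which is the opposite of how most people read. That is the habit worth building.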

Scammers rely on how people skim. Most users read URLs left to right and stop once they see a brand name. On phones, this gets worse. URLs are often shortened, wrapped, or hidden behind preview text. Taking a second to identify the real domain breaks a lot of scams.

Phone numbers offer similar clues. A message claiming to be from a local business or government office may come from an unexpected area code or even another country. While number portability means this isn’t always conclusive, mismatches are still useful context.

Language cues still matter, but not in the old way. Bad grammar is no longer a reliable signal. Instead, look at structure. Does the message push you to bypass normal steps? Does it ask for actions that don’t match how the real organization usually operates? Does the urgency feel out of place for the situation?

The more examples you see, the easier this gets. Over time, patterns stand out faster. The goal isn’t deep analysis of every message. It’s having a short mental checklist you can run through quickly before acting.
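As a rough illustration, a checklist like that can be written down explicitly. The word lists and patterns below are assumptions picked for the example, and `quick_check` is a hypothetical name. Simple keyword matching like this is crude and will misfire on plenty of legitimate messages, so read it as a memory aid rather than a filter.

```python
import re

# Illustrative word list and patterns; not tuned against real traffic.
URGENCY_WORDS = {"now", "today", "immediately", "urgent", "suspended"}
LINK_PATTERN = re.compile(r"https?://|\b[\w-]+\.[a-z]{2,}/", re.IGNORECASE)
ACTION_PATTERN = re.compile(r"\b(reply|call|click|tap|verify|confirm)\b", re.IGNORECASE)


def quick_check(text):
    """Return the checklist items a message trips, in a fixed order."""
    words = set(re.findall(r"[a-z']+", text.lower()))
    hits = []
    if words & URGENCY_WORDS:
        hits.append("urgency language")
    if LINK_PATTERN.search(text):
        hits.append("contains a link")
    if ACTION_PATTERN.search(text):
        hits.append("asks for an action")
    return hits


print(quick_check("Your account has been suspended. Verify now: https://example.test/x"))
print(quick_check("Dinner at 7?"))
```

The more items a message trips, the more it deserves a pause and a check through a channel you already trust.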

7.2 Tools for the Curious (Practical Tech)

For people who want more certainty, there are tools that let you inspect suspicious messages without interacting directly. These aren’t required for everyday safety, but they’re useful for learning and confirmation.

URL-scanning services let you analyze links without opening them in your own browser. Platforms like urlscan.io load the page in an isolated environment, record redirect behavior, and capture screenshots. You can see what the link does without exposing your device.

VirusTotal works differently. It checks a URL or domain against many security engines at once. If several engines flag it, that’s a strong warning sign. If none do, that doesn’t mean the link is safe. It may simply be new or not widely reported yet.
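The asymmetry in that reasoning is easy to get wrong, so here is a minimal sketch of it. It assumes the scan result has already been reduced to a dictionary of engine counts with keys such as "malicious" and "suspicious". The key names, the function name interpret_scan, and the threshold of three engines are all assumptions for this example.

```python
def interpret_scan(stats: dict, threshold: int = 3) -> str:
    """Turn engine counts into a cautious verdict.

    `stats` is assumed to map verdict names to engine counts,
    e.g. {"malicious": 4, "suspicious": 1, "harmless": 60}.
    """
    flagged = stats.get("malicious", 0) + stats.get("suspicious", 0)
    if flagged >= threshold:
        return "strong warning"
    if flagged > 0:
        return "caution"
    # Zero detections is absence of evidence, not a clean bill of health.
    return "unknown, not proven safe"

print(interpret_scan({"malicious": 0, "suspicious": 0, "harmless": 70}))
```

Note that the function never returns “safe”. A clean scan only moves the link from “known bad” to “unknown”.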

Phone numbers can be examined too. Libraries like phonenumbers, a Python port of Google’s libphonenumber, parse number formats, identify country codes, and spot inconsistencies. This can confirm whether a number matches the story being told in the message.

More advanced users sometimes follow redirect chains with an HTTP library such as Python’s requests, which shows how many hops a link takes and which domains are involved. This should never be done casually. Always use a sandbox, virtual machine, or third-party service.

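That warning matters. Even checks that feel passive can leak information. A scam link may carry an identifier tied to your number, so simply requesting it can confirm that the number is active, and any request exposes your IP address. Clicking, loading, or probing a malicious system from your personal device can expose you to tracking or future targeting. Curiosity is useful, but only when paired with isolation.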
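With that caution in mind, redirect tracing might look like the sketch below. The tracing logic takes a fetch function as a parameter, so it can be exercised with canned data and no network access at all. The names trace_redirects, hosts_in_chain, and requests_fetch are hypothetical, and the requests-based fetcher is one possible choice that should only ever run inside a sandbox or virtual machine.

```python
from urllib.parse import urljoin, urlsplit

REDIRECT_CODES = {301, 302, 303, 307, 308}

def trace_redirects(url, fetch, max_hops=10):
    """Follow redirects one hop at a time and return every URL visited.

    `fetch(url)` must return (status_code, location_header_or_None).
    """
    chain = [url]
    for _ in range(max_hops):
        status, location = fetch(chain[-1])
        if status not in REDIRECT_CODES or not location:
            break
        # The Location header may be relative, so resolve it against the current URL.
        chain.append(urljoin(chain[-1], location))
    return chain

def hosts_in_chain(chain):
    """Distinct hostnames in the order they were first seen."""
    seen = []
    for u in chain:
        host = urlsplit(u).hostname
        if host and host not in seen:
            seen.append(host)
    return seen

def requests_fetch(url):
    """One possible fetcher; run only inside a sandbox or VM."""
    import requests  # third-party; imported lazily so the rest runs without it
    resp = requests.head(url, allow_redirects=False, timeout=5)
    return resp.status_code, resp.headers.get("Location")
```

Inside an isolated environment, trace_redirects(suspicious_url, requests_fetch) would return the full chain. The max_hops limit keeps a redirect loop from running forever, and some servers answer HEAD requests differently from normal page loads, so the result is a hint rather than a complete picture.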

7.3 Carrier Technologies

Mobile carriers are actively trying to reduce fraud. For voice calls, major progress has been made with the STIR/SHAKEN framework. Under it, the originating carrier digitally signs the caller ID information, and the receiving carrier checks that signature to judge whether a call is likely spoofed.

For phone calls, this has helped. Many spoofed calls are now labeled or blocked before they ever reach users. People get clearer signals about whether a call can be trusted.

Text messages are a harder problem. SMS travels through a more fragmented system with many intermediaries and legacy components. There is no widely adopted equivalent of STIR/SHAKEN for SMS.

Because of that, carriers rely on indirect methods. They analyze sending patterns, watch for known infrastructure, and block based on reputation and volume. These defenses help, but they’re reactive.
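To make “volume” concrete, here is a toy version of one such signal: counting how many distinct recipients a sender reaches within a short window. It is not how any particular carrier works. The class name VolumeMonitor, the 60-second window, and the recipient limit are all assumptions for illustration, and timestamps are assumed to arrive in order.

```python
from collections import defaultdict, deque

class VolumeMonitor:
    """Flag senders that reach many distinct recipients in a short window."""

    def __init__(self, window_seconds=60, max_recipients=50):
        self.window_seconds = window_seconds
        self.max_recipients = max_recipients
        self._events = defaultdict(deque)  # sender -> deque of (timestamp, recipient)

    def record(self, sender, recipient, timestamp):
        """Record one message; return True if the sender now looks like a bulk sender."""
        events = self._events[sender]
        events.append((timestamp, recipient))
        # Drop events that have fallen out of the sliding window.
        while events and events[0][0] <= timestamp - self.window_seconds:
            events.popleft()
        distinct = {r for _, r in events}
        return len(distinct) > self.max_recipients

monitor = VolumeMonitor(window_seconds=60, max_recipients=3)
flags = [monitor.record("+15550100", f"user{i}", t) for i, t in enumerate([0, 1, 2, 3])]
print(flags)
```

The sketch also shows why this kind of defense is reactive: the sender is only flagged after the messages have already gone out, and rotating to a fresh number resets the count.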

Scammers adapt quickly. They rotate numbers, routes, and hardware to stay ahead of detection. That’s why network-level protections can reduce risk but can’t eliminate it.

In practice, this means user judgment still matters. Even as carrier technology improves, the final decision often comes down to whether you pause, notice a pattern, and choose not to engage.

8 Prevention and the “Zero Trust” Mindset

Prevention isn’t about never making a mistake. Everyone clicks the wrong thing eventually. The real goal is to make sure one bad click doesn’t turn into a drained account, a stolen identity, or months of cleanup.

That’s where a zero trust mindset comes in. In this context, zero trust simply means this: don’t assume a message is legitimate just because it looks right. Treat unsolicited texts as unverified until you prove otherwise. This mirrors how secure systems work and gives you a safer way to interact with messages in a world where appearances are easy to fake.

8.1 The Golden Rule: Verify Out of Band

If there’s one rule that stops most scams, this is it: never verify a message using the same channel it arrived on.

If a text claims to be from your bank, don’t tap the link or reply to the message. Use a separate, trusted path. Call the number on the back of your card. Open the bank’s official app yourself. Type the website address manually into your browser.

This breaks the scammer’s control over the interaction. Scam texts are designed to keep you inside a closed loop where every option leads back to them. Out-of-band verification forces the conversation into a space they can’t manipulate.

This rule applies even when everything looks perfect. Correct logos, clean language, and familiar sender names no longer mean much. What matters is whether you chose the channel, not whether the message looks convincing.

There’s another benefit here: time. Verifying out of band slows things down. That pause weakens urgency and gives your brain space to switch from reaction to reasoning. Many scams fall apart the moment you stop rushing.

8.2 Reporting Mechanisms

Reporting scam texts may feel pointless in the moment, but it plays an important role in the larger defense system. When you report a message, you’re adding a data point that helps carriers and platforms spot patterns.

Many mobile carriers, including the major ones in the US and UK, support reporting spam by forwarding the message to 7726 (which spells SPAM). This delivers the message content to carrier abuse teams. Some carriers then reply asking for the sender’s number.

Smartphones also make this easier than it used to be. Both iOS and Android messaging apps let you report messages as spam or junk with a few taps. These reports feed into automated systems that flag similar messages in the future.

Reporting won’t make scams disappear overnight. You may still see similar texts tomorrow. The benefit is cumulative. Large-scale filtering depends on volume and patterns, so no single report is decisive, but each one adds to the signal.

It’s also worth reporting messages even if you didn’t click anything. Ignoring a scam protects you, but reporting it helps protect others by exposing active infrastructure.

8.3 Future Outlook

Scams evolve alongside communication technology. As messaging platforms become richer and more interactive, attackers adapt quickly. Rich Communication Services (RCS), for example, adds features like verified sender profiles, branding, and interactive buttons.

These features improve legitimate communication, but they also raise the stakes. A message with a verified checkmark or branded layout can feel even more trustworthy. If those signals are abused or misunderstood, the impact of scams increases.

Early signs already show attackers experimenting with RCS-based scams, especially in regions where adoption is growing. The format changes, but the underlying tactics remain the same.

Defenders are responding with AI-driven tools that analyze behavior, detect anomalies, and react faster than humans can. Attackers are doing the same, using AI to scale, personalize, and adapt their messages.

This creates an ongoing arms race. Technology helps on both sides, but it doesn’t remove the need for judgment. Users still make the final call on whether to trust a message.

The strongest position isn’t relying on one safeguard. It’s layering defenses: carrier filtering, platform tools, and personal habits that assume nothing by default.

Zero trust isn’t a setting you turn on. It’s a way of thinking. And once it becomes a habit, scam texts lose much of their power.